AI Visibility Gap Analysis with Free Tools: A Step-by-Step Guide

Google says you exist. ChatGPT disagrees. That gap, invisible in your analytics, is where deals are starting to go.

According to Erlin's 2026 State of AI Search report, 67% of marketing leaders have no way to measure how their brand appears in AI-generated answers.

At the same time, AI search traffic converts at 3–6x the rate of traditional channels.

So this isn't just a branding concern: the gap has a direct cost.

This guide walks through how to find your AI visibility gaps using free tools, what to look for at each step, and when it makes sense to move to a platform like Erlin that connects the analysis to actual fixes.

What is AI Visibility, and Why Does the Gap Exist?

AI visibility is how often and how accurately your brand appears when an AI system answers a question relevant to your business. It's less like an SEO ranking and more like a yes/no question per prompt: either you're in the answer, or you're not.

When someone types "best CRM for managing a mid-sized team" into Perplexity, that AI doesn't return ten blue links. It names three to five brands in a paragraph. If you're not one of them, you don't exist for that user at that moment, regardless of where you rank on Google.

Most brands misunderstand why the gap exists. They assume good Google rankings will carry over to AI answers. They don't. Google rewards keywords, backlinks, and authority.

AI systems evaluate something different: fact density, how easily your content can be extracted and cited, third-party validation signals, and how recently your information was updated. A brand can sit at position one in organic search and still not appear in ChatGPT's answer to the same question.

Erlin's analysis of 500+ brands found that brands implementing structured data, such as comparison tables, FAQ schema, and llms.txt files, saw 28–34% higher AI coverage within two to three weeks. The gap exists because most brands haven't built for AI retrieval. They've built for Google.

How to Understand the AI Visibility Gap

Before jumping into how to find your gaps, it helps to understand what you're actually measuring.

Mention rate is how often your brand name appears in AI responses to relevant prompts.

Citation rate is more specific: it measures how often AI responses link back to your website as a source. Perplexity shows citations explicitly (numbered links in every response). ChatGPT does it selectively, mainly when it pulls from live web results.

Research shows ChatGPT mentions brands roughly 3x more often than it actually links to them. Tracking only citations gives you an incomplete picture. You want both.

Share of voice compares your mention rate against competitors across the same set of prompts. This is the metric that tells you whether you have a gap: what matters is not your mention count in isolation, but how it stacks up against the competition.

The Erlin 2026 report found that AI typically cites only 2–3 brands per query. It's a winner-take-most situation. If you're in the answer, you capture nearly all of that user's attention. If you're not, you're invisible for that query regardless of how well you rank elsewhere.
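
To make the three metrics concrete, here is a minimal sketch that computes them from a small audit log. The record layout, the brand names, and the `.example` domains are all hypothetical, and the share-of-voice formula (your mentions divided by all tracked-brand mentions on the same prompts) is one common convention assumed here, not a definition taken from Erlin.

```python
from dataclasses import dataclass, field


@dataclass
class AuditRow:
    """One AI response to one prompt (hypothetical record layout)."""
    prompt: str
    brands_mentioned: set[str] = field(default_factory=set)
    domains_cited: set[str] = field(default_factory=set)


def mention_rate(rows: list[AuditRow], brand: str) -> float:
    """Share of responses that name the brand at all."""
    if not rows:
        return 0.0
    return sum(brand in r.brands_mentioned for r in rows) / len(rows)


def citation_rate(rows: list[AuditRow], domain: str) -> float:
    """Share of responses that link to the brand's domain as a source."""
    if not rows:
        return 0.0
    return sum(domain in r.domains_cited for r in rows) / len(rows)


def share_of_voice(rows: list[AuditRow], brand: str, competitors: list[str]) -> float:
    """Brand mentions divided by all tracked-brand mentions (assumed convention)."""
    tracked = {brand, *competitors}
    total = sum(len(r.brands_mentioned & tracked) for r in rows)
    ours = sum(brand in r.brands_mentioned for r in rows)
    return ours / total if total else 0.0


# Made-up audit data for a fictional brand "AcmeCRM".
rows = [
    AuditRow("best CRM for a mid-sized team", {"AcmeCRM", "RivalOne"}, {"rivalone.example"}),
    AuditRow("what to look for in a CRM", {"RivalOne", "RivalTwo"}, set()),
    AuditRow("CRM vs spreadsheet", {"AcmeCRM"}, {"acmecrm.example"}),
    AuditRow("does pipeline automation matter", {"RivalOne"}, set()),
]

print(f"mention rate:   {mention_rate(rows, 'AcmeCRM'):.0%}")
print(f"citation rate:  {citation_rate(rows, 'acmecrm.example'):.0%}")
print(f"share of voice: {share_of_voice(rows, 'AcmeCRM', ['RivalOne', 'RivalTwo']):.0%}")
```

In this made-up data the brand is mentioned twice but cited once, which is the kind of mention/citation split the section describes.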

How to Find AI Visibility Gaps Using Free Tools

Run a Manual AI Search Audit

A manual audit takes about an hour and gives you a real baseline. It won't scale, but it tells you where you stand right now.

Step 1: Build your prompt list

Write 15–20 questions that a buyer at the funnel stage you're targeting would actually ask. The goal is to mirror real research behavior, not test whether AI knows your brand name.

Good prompts look like:

"What are the best [your category] tools for [use case]?"

"How do I [solve the problem your product solves]?"

"What should I look for in a [your product category]?"

"[Your product type] vs [competitor product type]—which is better?"

"Does [specific feature your brand offers] make a difference?"

Avoid prompts like "Tell me about [your brand name]." Those test brand awareness, not buyer intent. The real question is whether you show up when buyers are comparing options, not whether AI knows you exist.
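
If you'd rather generate the list than write it by hand, the templates above can be filled in programmatically. This is only a sketch: the placeholder names (`category`, `use_case`, and so on) and the CRM example values are invented for illustration, and you would swap in wording from your own market.

```python
# Templates adapted from the prompt patterns listed above.
PROMPT_TEMPLATES = [
    "What are the best {category} tools for {use_case}?",
    "How do I {problem}?",
    "What should I look for in a {category}?",
    "{product_type} vs {competitor_type}: which is better?",
    "Does {feature} make a difference?",
]


def build_prompts(templates, variants):
    """Fill every template with every variant, dropping duplicates but keeping order."""
    seen, prompts = set(), []
    for variant in variants:
        for template in templates:
            prompt = template.format(**variant)
            if prompt not in seen:
                seen.add(prompt)
                prompts.append(prompt)
    return prompts


# Hypothetical buyer scenarios for a fictional CRM vendor.
variants = [
    {"category": "CRM", "use_case": "a mid-sized sales team",
     "problem": "keep track of deals across a team",
     "product_type": "A dedicated CRM", "competitor_type": "a shared spreadsheet",
     "feature": "pipeline automation"},
    {"category": "CRM", "use_case": "a remote support team",
     "problem": "stop leads from going cold",
     "product_type": "An all-in-one CRM", "competitor_type": "separate sales and support tools",
     "feature": "built-in email sequencing"},
    {"category": "CRM", "use_case": "a B2B startup",
     "problem": "forecast revenue from an early pipeline",
     "product_type": "A cloud CRM", "competitor_type": "a self-hosted CRM",
     "feature": "AI lead scoring"},
    {"category": "CRM", "use_case": "an agency with many clients",
     "problem": "report on client accounts in one place",
     "product_type": "A CRM with client portals", "competitor_type": "a project management tool",
     "feature": "custom reporting"},
]

prompts = build_prompts(PROMPT_TEMPLATES, variants)
for number, prompt in enumerate(prompts, start=1):
    print(f"{number:2d}. {prompt}")
```

None of the generated prompts contains the brand name, which keeps the list focused on buyer intent rather than brand awareness.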

Step 2: Run the audit in ChatGPT

Go to chat.openai.com. Use the default GPT-4o model. For each prompt:

Paste your question exactly as written

Screenshot or paste the full response into a tracking doc

Note: Was your brand mentioned? Was it cited with a link? What position were you (first, second, mentioned in passing)?

Note: Which competitors were named?

Run all 15–20 prompts. Don't regenerate if you don't like the answer. AI responses vary, and you're documenting what a first-time user would see.

Step 3: Run the same prompts in Perplexity

Perplexity is particularly useful for this audit because it shows its sources. Go to perplexity.ai (no account needed for basic testing). Run the same prompts and document the same details as in Step 2, with one extra step: note which URLs appear in the citation list.

Those URLs tell you which sites AI trusts for your category. If a competitor's blog is cited repeatedly, you know where to focus your third-party coverage efforts.

Step 4: Document what you find

Use a simple spreadsheet. Columns: prompt, ChatGPT result (yes/no/mentioned briefly), Perplexity result, competitors mentioned, sources cited, notes.
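
If you prefer a file you can open in any spreadsheet tool, the same columns can be written as CSV. The sketch below assumes snake_case column names, joins multi-value cells with semicolons, and rejects result values other than the three listed above; the sample rows are invented.

```python
import csv
import io

# Column names mirror the spreadsheet layout described above (naming is an assumption).
COLUMNS = [
    "prompt", "chatgpt_result", "perplexity_result",
    "competitors_mentioned", "sources_cited", "notes",
]
ALLOWED_RESULTS = {"yes", "no", "mentioned briefly"}


def write_audit_log(rows, out):
    """Write audit rows as CSV, validating the two result columns first."""
    writer = csv.DictWriter(out, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        for column in ("chatgpt_result", "perplexity_result"):
            if row[column] not in ALLOWED_RESULTS:
                raise ValueError(f"{column} must be one of {sorted(ALLOWED_RESULTS)}")
        writer.writerow(row)


# Invented example rows for a fictional brand.
rows = [
    {"prompt": "What are the best CRM tools for a mid-sized sales team?",
     "chatgpt_result": "no", "perplexity_result": "mentioned briefly",
     "competitors_mentioned": "RivalOne; RivalTwo",
     "sources_cited": "reviews.example; rival-one.example",
     "notes": "Listed fourth, no link"},
    {"prompt": "What should I look for in a CRM?",
     "chatgpt_result": "yes", "perplexity_result": "yes",
     "competitors_mentioned": "RivalOne",
     "sources_cited": "acmecrm.example",
     "notes": "Cited our buying guide"},
]

buffer = io.StringIO()  # swap for open("audit.csv", "w", newline="") to write a file
write_audit_log(rows, buffer)
print(buffer.getvalue())
```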

When you're done, look for patterns:

Which prompts return your brand consistently? That's where you're already winning.

Which prompts mention competitors but not you? Those are your clearest gaps.

Which third-party sources keep appearing in Perplexity's citation list? Those are the sites you need coverage on.

A brand showing up in fewer than 30% of relevant prompts has meaningful AI visibility gaps. Top brands in competitive categories often hit 30% or higher after optimization.
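
The pattern check above can be sketched in a few lines. The row layout and prompt text here are hypothetical; the 30% threshold is the one quoted in this guide, and a "gap" is assumed to mean a prompt where at least one competitor is named and you are not.

```python
GAP_THRESHOLD = 0.30  # the 30% figure quoted in this guide


def summarize(rows):
    """Split prompts into wins and gaps, and compare coverage to the threshold."""
    winning = [r["prompt"] for r in rows if r["brand_mentioned"]]
    gaps = [r["prompt"] for r in rows if not r["brand_mentioned"] and r["competitors"]]
    coverage = len(winning) / len(rows) if rows else 0.0
    return {
        "winning": winning,
        "gaps": gaps,
        "coverage": coverage,
        "below_threshold": coverage < GAP_THRESHOLD,
    }


# Invented audit results for five prompts.
rows = [
    {"prompt": "best CRM for a mid-sized team", "brand_mentioned": False, "competitors": ["RivalOne"]},
    {"prompt": "what to look for in a CRM", "brand_mentioned": True, "competitors": ["RivalOne"]},
    {"prompt": "CRM vs spreadsheet", "brand_mentioned": False, "competitors": []},
    {"prompt": "does pipeline automation matter", "brand_mentioned": False, "competitors": ["RivalTwo"]},
    {"prompt": "CRM for agencies", "brand_mentioned": False, "competitors": ["RivalOne", "RivalTwo"]},
]

summary = summarize(rows)
print(f"coverage: {summary['coverage']:.0%} (below threshold: {summary['below_threshold']})")
for prompt in summary["gaps"]:
    print("gap:", prompt)
```

Prompts where nobody is named (the third row here) are left out of the gap list on purpose: they may be open territory rather than ground a competitor already holds.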

Use Perplexity to Find Visibility Gaps

Perplexity deserves its own section because it's arguably the most useful free tool for this kind of analysis. Unlike ChatGPT, it shows you exactly where it got its information.

Every Perplexity response includes numbered citations: actual URLs. This transparency lets you reverse-engineer who's winning in your category and why.

Here's how to use it specifically for gap analysis:

Search for your category, not your brand. Use queries like:

"Best tools for [your category] in 2026"

"Compare [your category] options for [specific use case]"

"What do users say about [competitor name]?"

For each result, document the sources. Run 10–15 category queries and tally which domains appear most often. The sites that show up repeatedly are the ones AI trusts for your topic area.

If your website doesn't appear, but three competitors' blogs do, along with a G2 review page, a Reddit thread, and a Wikipedia article, you've just mapped your gap. You need coverage on those platforms, not just better SEO on your own site.
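
The tally itself is easy to automate once you've pasted the citation URLs somewhere. The sketch below uses only Python's standard library; the queries and `.example` URLs are placeholders, and counting each domain at most once per query is a design choice assumed here so that one answer citing the same site three times doesn't skew the ranking.

```python
from collections import Counter
from urllib.parse import urlparse


def tally_domains(citations_by_query):
    """Count how many queries cite each domain (at most once per query)."""
    counts = Counter()
    for urls in citations_by_query.values():
        domains = {urlparse(url).netloc.lower().removeprefix("www.") for url in urls}
        counts.update(domains)
    return counts


# Placeholder citation lists copied from three hypothetical Perplexity answers.
citations_by_query = {
    "Best CRM tools in 2026": [
        "https://www.reviews.example/crm",
        "https://rival-one.example/blog/best-crm",
        "https://forum.example/r/sales/thread-1",
    ],
    "Compare CRM options for agencies": [
        "https://reviews.example/crm/agencies",
        "https://reviews.example/crm/compare",
        "https://rival-one.example/blog/agency-crm",
    ],
    "What do users say about RivalOne?": [
        "https://forum.example/r/sales/thread-2",
        "https://reviews.example/products/rivalone",
    ],
}

counts = tally_domains(citations_by_query)
for domain, query_count in counts.most_common():
    print(f"{domain}: cited in {query_count} of {len(citations_by_query)} queries")
```

Any domain of yours that is missing from the output, while competitor and review domains appear repeatedly, is the gap described above.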

One more thing worth testing in Perplexity: search your brand name directly. What does AI say about you? Is the description accurate? Is it outdated?

Erlin's research found that monitored brands catch content accuracy errors in about 14 days versus 67 days for unmonitored brands. The longer a gap goes undetected, the longer competitors are filling that space.

Why Manual AI Visibility Gap Analysis Doesn't Scale

The manual audit above is genuinely useful, but it has real limits worth being upfront about.

Responses vary

AI outputs aren't static. Run the same ChatGPT prompt twice, and you may get different brands in the answer. The results change based on model version, browsing status, and factors you can't see. A one-time audit is a snapshot of one moment, not a trend.

The volume problem

A serious gap analysis requires testing dozens to hundreds of prompts, across multiple platforms (ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews), against a competitor set. That's hundreds of manual queries per week. Most marketing teams can't sustain that.

You can't track movement

Knowing you appeared in 4 out of 10 prompts last Tuesday tells you almost nothing. Knowing you went from 4/10 to 8/10 over six weeks, and which content changes drove that, is actionable. Manual audits can't give you that without enormous effort.
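
As a rough illustration of what tracking movement involves, the sketch below compares two audit snapshots of the same fixed prompt set. The snapshot format (prompt mapped to whether the brand appeared) and the placeholder prompt names are assumptions; the 4/10 and 8/10 figures mirror the example above.

```python
def compare_snapshots(before, after):
    """Compare two {prompt: appeared} snapshots over the prompts they share."""
    shared = sorted(before.keys() & after.keys())
    if not shared:
        return {"rate_before": 0.0, "rate_after": 0.0, "gained": [], "lost": []}
    return {
        "rate_before": sum(before[p] for p in shared) / len(shared),
        "rate_after": sum(after[p] for p in shared) / len(shared),
        "gained": [p for p in shared if after[p] and not before[p]],
        "lost": [p for p in shared if before[p] and not after[p]],
    }


# Ten placeholder prompts; the brand appears in 4 at first and 8 six weeks later.
prompts = [f"prompt {i:02d}" for i in range(1, 11)]
week_0 = {p: i < 4 for i, p in enumerate(prompts)}
week_6 = {p: i < 8 for i, p in enumerate(prompts)}

change = compare_snapshots(week_0, week_6)
print(f"{change['rate_before']:.0%} -> {change['rate_after']:.0%}")
print("gained:", change["gained"])
print("lost:", change["lost"])
```

The comparison only means something if the prompt set stays fixed between runs, and because responses vary, a single pair of snapshots is still noisy. Doing this by hand every week is where the effort adds up.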

Attribution is missing

Manual audits tell you whether you appear in AI answers. They don't connect those appearances to website traffic, and they can't tell you whether AI-referred visitors are converting. AI traffic from ChatGPT and Perplexity often lands in Google Analytics as Direct or Referral, making it invisible without proper integration.
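
If you can export referrer URLs from your analytics or server logs, a first-pass segmentation might look like the sketch below. The hostname list is an assumption for illustration (only chat.openai.com and perplexity.ai appear in this guide) and should be checked against the referrers in your own data. Visits that arrive with no referrer at all will still show up as Direct, so this catches only part of the AI traffic.

```python
from urllib.parse import urlparse

# Assumed hostname-to-platform mapping; verify against your own referrer data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url):
    """Label a full referrer URL as an AI platform, Direct, or Other referral."""
    if not referrer_url:
        return "Direct"
    host = urlparse(referrer_url).netloc.lower().removeprefix("www.")
    return AI_REFERRER_HOSTS.get(host, "Other referral")


# Made-up sample of referrer values from a log export.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://news.example/roundup",
    "",
]
for referrer in sample:
    print(f"{referrer or '(none)'} -> {classify_referrer(referrer)}")
```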

How to Find Your Brand Gaps in AI Search at Scale

Once you've run the manual audit and have a sense of where your gaps are, the next step is setting up ongoing tracking. Before diving into the full setup, you can start with Erlin's free audit:

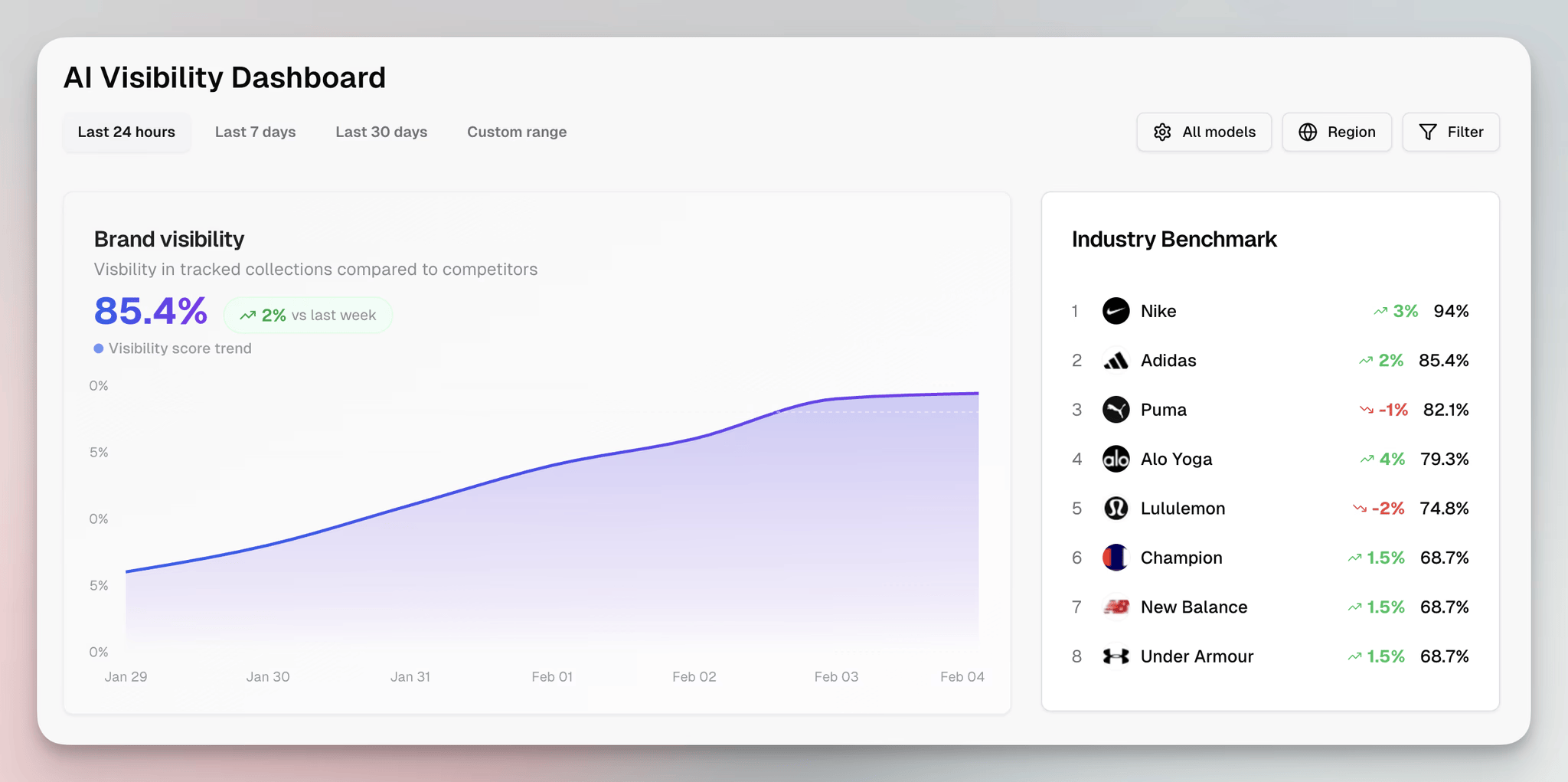

It automatically runs prompt tracking, shows your AI Visibility Rank, and benchmarks your results against competitors, giving you a structured baseline from the start.

For continuous monitoring, Erlin is built specifically for this. It connects visibility monitoring to content action rather than just surfacing data. Here's how the setup works:

Step 1: Sign up and enter your domain

Go to app.erlin.ai/get-started. Once you enter your domain, Erlin automatically captures brand context, pulls social signals, and prepares baseline insights. You don't start from scratch.

Step 2: Select competitors

Erlin suggests up to five competitors based on your domain and category. You can accept, remove, or add manually. Your AI visibility score means more when read against competitors than as a standalone number, which is why the competitive baseline matters from day one.

Step 3: Choose prompts to track

Erlin suggests high-intent prompts relevant to your category. These are the queries AI platforms actually process when buyers are in research mode. You're not guessing what to track; the system's suggestions inform your prompt library.

Step 4: View your initial snapshot

After setup, you get an instant baseline: your AI Visibility Rank, Traffic Rank, and competitor comparison. This is your starting point and the number you measure all future changes against.

Step 5: Connect Google Analytics and Search Console

This step is what separates monitoring from measurement. Linking GA and GSC unlocks AI vs. non-AI traffic breakdowns, conversion rate comparisons, and AI source distribution by platform. This is where the difference between AI traffic and everything else becomes visible for your brand specifically.

Step 6: Explore the Prompts section

Under AI Visibility → Prompts, you see visibility trends and competitor comparisons at the individual prompt level. The Answer History tab shows actual text from recent ChatGPT or Perplexity responses: whether your brand was mentioned, whether it was cited, and what sources were referenced. This is the actual gap analysis: not just a score, but the specific prompts where competitors are showing up and you're not.

Real-World AI Visibility Gap Analysis Examples

How iRESTORE grew AI traffic 6.5x in 90 days

iRESTORE makes laser hair growth devices and was already performing well in traditional SEO. The problem: buyers were asking ChatGPT questions like "best laser hair growth device" and "does X actually work for hair loss?", and iRESTORE wasn't in the answers.

They had no way to measure how often the brand appeared, no breakdown by AI platform, and no execution loop to convert gaps into fixes.

Using Erlin, they tracked 15 high-intent prompts daily across four platforms and found that ChatGPT drove 94% of their AI referrals. They concentrated optimization there.

They also restructured content for AI extraction: clear definitions, step-by-step explanations, and structured data that reduced ambiguity for AI systems.

The result: AI traffic grew 6.5x in 90 days. Conversion rate was 3x the site average because users arriving from AI recommendations were already in decision mode.

How Jify.co fixed a measurement problem before an optimization problem

Jify.co had consistent inbound demand but a distorted picture from third-party SEO tools. External estimates suggested minimal organic reach. First-party data told a different story.

By connecting Google Analytics and Search Console through Erlin, they replaced estimated metrics with verified data. They found that Perplexity and ChatGPT were splitting AI referral traffic roughly evenly, which suggested a research-oriented audience using citation-based AI for verification, not just discovery.

The real insight wasn't about growing traffic. It was about understanding traffic that already existed, and adjusting content strategy based on what their audience was actually doing.

Common AI Visibility Gaps (and How to Fix Them)

| AI Visibility Gap | Why It Happens | How to Fix It |
| --- | --- | --- |
| Brand not mentioned in category queries | Low fact density; AI can't confidently extract what you do | Add structured FAQ pages, comparison tables, and explicit feature/use-case descriptions |
| Mentioned but not cited | Content exists, but isn't in machine-readable formats | Implement llms.txt, FAQ schema markup, and static HTML for key pages |
| Competitors mentioned more often | Stronger third-party validation signals | Build presence on Reddit, G2, review platforms; pursue editorial mentions |
| Outdated information in AI answers | Content hasn't been refreshed; AI confidence scores decay | Update cornerstone pages monthly; monitor for stale citations |
| Correct info on your site, wrong info in AI | AI pulling from third-party sources that haven't been updated | Audit and correct G2, Crunchbase, LinkedIn, directory profiles |
| Visible in ChatGPT but not Perplexity | Different source indexing across platforms | Build content that appears in sources Perplexity relies on (recent editorial, Reddit) |
| No AI traffic showing in Analytics | AI referrals miscategorized as Direct or Referral | Connect GA4 and GSC to properly attribute and segment AI traffic |

Frequently Asked Questions

What is an AI visibility gap?

An AI visibility gap is the difference between how often your brand appears in AI-generated answers and how often it should appear given your relevance to the queries being asked. If buyers are asking ChatGPT to compare tools in your category and your brand isn't mentioned, that's a gap, regardless of how well you rank in Google.

How do I check if my brand appears in ChatGPT or Perplexity?

Run 15–20 buyer-intent queries in ChatGPT (chat.openai.com) and Perplexity (perplexity.ai). Use questions like "best [your product category] for [use case]" rather than your brand name directly. Document which competitors appear and which sources get cited. This takes about an hour and gives you a functional baseline.

Does ranking well in Google guarantee AI visibility?

No. Erlin tracked 500+ brands and found only a weak correlation between traditional SEO rankings and AI citation rates. AI systems weigh entity clarity, content freshness, structured data, and third-party validation more than keyword density or backlink volume. Brands should treat AI visibility as a separate channel.

What's the fastest way to improve AI visibility?

Structured data has the fastest measurable impact. Comparison tables, llms.txt files, and FAQ schema markup are associated with 28–34% higher AI coverage within 14–21 days of implementation, according to Erlin's 2026 research. Third-party coverage (Reddit, review platforms, editorial) drives 2.6–3.4x higher citation rates than owned content alone.
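
For readers who haven't seen FAQ schema markup, the sketch below generates a schema.org `FAQPage` block in JSON-LD from question-and-answer pairs. The helper function names are hypothetical, and the sample pair is taken from this article's own FAQ. How much any given AI system relies on this markup isn't something the code can show.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag that goes into the page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


pairs = [
    (
        "What is an AI visibility gap?",
        "An AI visibility gap is the difference between how often your brand "
        "appears in AI-generated answers and how often it should appear given "
        "your relevance to the queries being asked.",
    ),
]

snippet = as_script_tag(faq_jsonld(pairs))
print(snippet)
```

The marked-up questions and answers should match the visible text of the page they are embedded in.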

How often should I audit AI visibility?

AI responses change. A one-time audit tells you where you stand today, not whether you're improving. Meaningful measurement requires tracking the same prompts consistently over time. Weekly or monthly tracking against a fixed prompt set is the minimum for brands that want to see trends.

How do I track which AI platforms are sending me traffic?

AI referrals from ChatGPT and Perplexity often land in Google Analytics as Direct or Referral traffic. To properly identify and segment AI traffic, you need either UTM-level attribution or a tool that integrates directly with GA4 and Search Console. Erlin's GA/GSC integration separates AI traffic from other channels and breaks it down by platform.

Can smaller brands compete with larger ones in AI search?

Yes, and this is one of the more interesting findings from Erlin's data. Smaller brands with strong entity context and structured data regularly outperform larger competitors in specific query categories. AI doesn't default to the biggest brand. It cites the clearest, most well-documented one. That's actually an opportunity for focused brands that do the work.

How to Get Started Today

The manual audit described here is the right starting point if you've never done this before. Run your 15–20 prompts in ChatGPT and Perplexity, document what you find, and look for the pattern of who's getting cited instead of you.

If you find meaningful gaps (most brands do), the next question is whether you want to track and close them systematically or continue with periodic manual checks. Manual audits are fine for a one-time baseline. They don't work for ongoing optimization.

Erlin's free assessment at app.erlin.ai/get-started gives you an instant snapshot of your AI Visibility Rank and competitor positioning without requiring manual query-running.

It's a faster way to see where you actually stand and a starting point for building the tracking that turns gap analysis into growth. The brands that close their AI visibility gaps now will compound an advantage that gets harder to replicate over time.

Get Your Free Audit Now → app.erlin.ai/get-started
