AI Visibility Audit: How to Run One Yourself (And When You Need a Tool)

Ranking on Google is no longer the full picture.

AI systems like ChatGPT and Perplexity AI don’t “rank” results the same way. They synthesize, recommend, and filter. And in that process, many strong brands disappear entirely.

An AI visibility audit gives you a clearer signal than traditional SEO metrics. It shows whether AI systems recognize, trust, and recommend your brand, and where you’re losing ground to competitors.

This guide walks you through how to run one yourself, what to track, and when a tool makes more sense than doing it by hand.

What Is an AI Visibility Audit?

An AI visibility audit is the process of finding out how AI search platforms mention, describe, or recommend your brand when users ask questions relevant to your category.

It's different from a standard SEO audit in a few ways. Traditional SEO measures your ranking position for a keyword. AI visibility is much closer to binary: you're either in the answer or you're not. There's no "position 7" when ChatGPT answers a question. It typically names two or three brands, and everything else disappears.

The audit also looks at quality, not just quantity. Is the AI describing your product accurately? Are outdated pricing or features being surfaced? Is it citing a competitor's comparison page as its source when talking about you? These things matter because users trust what AI tells them, and they usually don't verify it.

Why Bother? The Numbers Behind AI Search

Before walking through the steps, it's worth grounding this in what's actually happening.

According to research cited in Erlin's 2026 State of AI Search report, around 44% of users now consider AI their primary source of information. McKinsey's data suggests that roughly 55% of users rely on AI-based search when making purchasing decisions.

Brands that appear in AI answers see conversion rates 3–6x higher than traffic from traditional channels, because people are coming in pre-sold. They asked a question, got a recommendation, and clicked through already convinced.

Meanwhile, 67% of marketing leaders surveyed by Erlin in Q4 2025 said they don't know how to measure AI visibility. 58% said no one on their team owns it.

That's a real gap. And gaps like that tend to reward whoever closes them first.

How to Run an AI Visibility Audit Yourself

You don't need a paid tool to start. A manual audit takes a few hours and gives you a clear baseline.

Here's the process:

Step 1: Define Your Scope

Before you start querying AI platforms, decide what you're measuring. This sounds obvious, but skipping it makes the whole audit messy.

You need to nail down:

Which platforms: at minimum, test ChatGPT, Perplexity, and Gemini. Google AI Overviews and Claude are worth including if you have time.

Which entities: your company name, product names, key offerings, and any executives or thought leaders you want to track.

Which query types: more on this in a moment.

Write this down before you start. It becomes your repeatable template for future audits, so results are actually comparable month over month.

Step 2: Build Your Prompt List

This is where most people go wrong. They search their brand name and call it an audit.

Branded queries are rarely where you lose ground. Few buyers type "Acme Corp" into ChatGPT. They type "best project management tool for remote engineering teams" or "CRM for B2B SaaS companies under 50 employees."

Those are purchase-intent prompts. They're the queries that happen right before someone books a demo or starts a free trial. Those are the ones you need to track.

Build a list of 20–30 prompts across three categories:

Awareness prompts: general category questions your buyers ask early in the process. Example: "What should I look for in an email automation tool?"

Consideration prompts: feature comparisons and alternative searches. Example: "What's the best alternative to [competitor]?" or "Which tools are best for [specific use case]?"

Decision prompts: direct comparisons and "best for" queries. Example: "Best [your category] for [your specific buyer profile]."

The more specific, the better. Vague prompts give vague answers. Specific prompts reveal exactly whether you're in the conversation when buyers are ready to choose.
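One way to keep the prompt list repeatable is to generate it from templates. The sketch below is only an illustration: the `TEMPLATES` dictionary, the `build_prompts` function, and the placeholder names are assumptions made for this example, with wording adapted from the sample prompts above.

```python
from itertools import product

# Hypothetical templates, one list per prompt category described above.
TEMPLATES = {
    "awareness": ["What should I look for in a {category}?"],
    "consideration": [
        "What's the best alternative to {competitor}?",
        "Which tools are best for {use_case}?",
    ],
    "decision": ["Best {category} for {buyer}"],
}


def build_prompts(category, competitors, use_cases, buyers):
    """Expand every template with each combination of the values it uses."""
    values = {
        "category": [category],
        "competitor": competitors,
        "use_case": use_cases,
        "buyer": buyers,
    }
    prompts = []
    for stage, templates in TEMPLATES.items():
        for template in templates:
            # Work out which placeholders this particular template needs.
            needed = [name for name in values if "{" + name + "}" in template]
            for combo in product(*(values[name] for name in needed)):
                text = template.format(**dict(zip(needed, combo)))
                prompts.append((stage, text))
    return prompts


prompts = build_prompts(
    category="project management tool",
    competitors=["CompetitorX", "CompetitorY"],  # placeholder names
    use_cases=["sprint planning"],
    buyers=["remote engineering teams"],
)
for stage, text in prompts:
    print(f"{stage}: {text}")
```

Adding a competitor or buyer profile to the inputs grows the list without rewriting prompts by hand, which keeps month-over-month runs comparable.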

Step 3: Query Each AI Platform and Document the Results

Open ChatGPT, Perplexity, and Gemini. Run each prompt manually. Use a spreadsheet to track:

Date and platform

The exact prompt

Whether your brand was mentioned (yes/no)

Your position in the response (first, second, middle, not mentioned)

Which competitors were cited

What sources the AI referenced

How your brand was described (accurate, outdated, wrong)

Overall sentiment (positive, neutral, negative)

Pay particular attention to the sources column. AI systems don't invent their citations; they pull from somewhere. If a competitor is showing up in answers about your category, it's because they're mentioned in a Reddit thread, a review platform, or a comparison article that the AI found credible.

That's your roadmap.
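If you prefer a script to a hand-built spreadsheet, the tracking columns above map onto a simple record type. This is a minimal sketch, not a prescribed format: `AuditRow`, `to_csv`, and `mention_rate_by_platform` are names invented for this example, and the sample rows are made up.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AuditRow:
    date: str
    platform: str
    prompt: str
    mentioned: bool
    position: str      # "first", "second", "middle", or "not mentioned"
    competitors: str   # semicolon-separated brand names
    sources: str       # semicolon-separated URLs the AI referenced
    description: str   # "accurate", "outdated", or "wrong"
    sentiment: str     # "positive", "neutral", or "negative"


def to_csv(rows):
    """Serialize audit rows to CSV text that opens in any spreadsheet tool."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AuditRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()


def mention_rate_by_platform(rows):
    """Share of logged prompts on each platform where the brand was mentioned."""
    totals, hits = {}, {}
    for row in rows:
        totals[row.platform] = totals.get(row.platform, 0) + 1
        hits[row.platform] = hits.get(row.platform, 0) + int(row.mentioned)
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Made-up sample rows for illustration only.
rows = [
    AuditRow("2026-01-05", "ChatGPT", "Best CRM for SaaS startups", True,
             "second", "CompetitorX", "https://www.g2.com/", "accurate", "positive"),
    AuditRow("2026-01-05", "ChatGPT", "CRM for B2B SaaS companies under 50 employees",
             False, "not mentioned", "CompetitorX;CompetitorY", "", "", ""),
    AuditRow("2026-01-05", "Perplexity", "Best CRM for SaaS startups", False,
             "not mentioned", "CompetitorY", "https://www.reddit.com/", "", ""),
]
print(to_csv(rows))
print(mention_rate_by_platform(rows))
```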

Step 4: Audit Your Fact Density

AI systems rely on discrete, extractable facts to evaluate brands. They're not reading your about page for vibes; they're looking for specific, verifiable information they can confidently cite.

Run this quick self-check:

Is your pricing publicly accessible without filling out a form?

Are your core features presented in scannable formats like lists, tables, or FAQs?

Is your competitive positioning explicit and specific, not vague marketing language?

Are your claims backed by exact numbers, names, or specifications?

Is operational information (setup time, integrations, support options) easy to find?

Erlin's data shows that brands with 9+ structured facts in their content achieve 78% AI coverage, compared to just 9% for brands with 0–2 facts. That's not a small gap.

If most of your website copy is positioning language and benefit statements, that's likely why you're not getting cited. AI rewards facts, not marketing language.
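For a rough first pass over your own copy, you can count how many sentences carry a concrete number. This is a deliberately crude heuristic and an assumption of this example, not how Erlin or any AI system defines a "structured fact"; `fact_like_sentences` is a hypothetical helper.

```python
import re


def fact_like_sentences(text):
    """Return sentences containing at least one digit, a crude proxy for a concrete claim."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


copy = (
    "We help teams move faster. Plans start at $29 per user per month. "
    "Setup takes 15 minutes. Trusted by modern companies."
)
facts = fact_like_sentences(copy)
print(f"{len(facts)} fact-like sentences")
for sentence in facts:
    print("-", sentence)
```

A page where almost nothing passes even this loose filter is probably leaning on positioning language.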

Step 5: Check Your Third-Party Footprint

68% of AI citations come from third-party sources, such as Reddit discussions, Wikipedia, review platforms like G2, YouTube videos, and editorial coverage, not from your own website.

Your owned content accounts for roughly 32% of where AI finds information about you. The other 68% is coming from what other people say about you.

So audit that too. Ask yourself:

Do you have verified reviews on G2, Capterra, or industry-specific review platforms?

Has your brand been discussed in Reddit threads or industry forums in the last 6 months?

Is your brand referenced on Wikipedia or in category-level articles?

Have independent blogs, publications, or media outlets mentioned your brand in the past year?

Do third-party YouTube videos or review walkthroughs of your product exist?

If the answer to most of those is no, the gap isn't in your content; it's in your credibility signals. AI uses third-party validation to confirm that a brand is worth citing. Without it, your owned content is fighting with one hand tied behind its back.
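If you logged cited sources during Step 3, you can measure your own owned versus third-party split instead of relying on the average. A minimal sketch, assuming the sources were recorded as full URLs; `source_breakdown` and the example domain are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def source_breakdown(urls, owned_domain):
    """Split cited URLs into owned vs third-party and list the most-cited hosts."""
    hosts = Counter()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        hosts[host] += 1

    total = sum(hosts.values())
    # Count the bare domain and any of its subdomains as owned.
    owned = sum(
        count for host, count in hosts.items()
        if host == owned_domain or host.endswith("." + owned_domain)
    )
    owned_share = owned / total if total else 0.0
    return {
        "owned_share": owned_share,
        "third_party_share": 1.0 - owned_share if total else 0.0,
        "top_hosts": hosts.most_common(3),
    }


cited = [
    "https://www.example.com/pricing",
    "https://docs.example.com/setup",
    "https://www.reddit.com/r/saas/",
    "https://www.g2.com/categories/crm",
]
print(source_breakdown(cited, owned_domain="example.com"))
```

Running the same function on the sources cited for a competitor shows which third-party hosts are doing the work for them.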

Step 6: Assess Your Structured Data

This one is more technical but worth checking. AI systems parse content differently depending on how it's formatted.

Static HTML with schema markup has a 94% AI parsing success rate, according to Erlin's research. JavaScript-rendered content drops to 23%. PDF documents fall to 7%.

If your critical product and pricing information lives inside JavaScript-rendered pages or behind gated flows, AI systems often can't read it. You're essentially invisible to the crawler before the citation stage even begins.

A few things to check:

Do you have an llms.txt file (a proposed convention, not yet a formal standard) that helps AI crawlers understand what to prioritize?

Do you use FAQ schema markup on relevant pages?

Do you have comparison tables that show your product against competitors with specific attributes?

Is your pricing and feature information available in plain HTML?

Each "no" here corresponds to roughly a 6–8% reduction in AI coverage, according to Erlin's structured data research.
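One of these checks, whether FAQ schema is present in the static HTML, can be scripted with the standard library. The sketch below parses a page's raw HTML and lists the `@type` values found in JSON-LD blocks; `JsonLdCollector` and `schema_types` are names made up for this example, and it assumes you have already saved the page source (without executing JavaScript) as a string.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect @type values from <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.types = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != "script" or not self._in_jsonld:
            return
        self._in_jsonld = False
        try:
            doc = json.loads("".join(self._buffer))
        except json.JSONDecodeError:
            return  # Malformed JSON-LD is skipped rather than raised.
        for item in doc if isinstance(doc, list) else [doc]:
            if isinstance(item, dict) and "@type" in item:
                declared = item["@type"]
                # @type may be a single string or a list of strings.
                self.types.extend(declared if isinstance(declared, list) else [declared])


def schema_types(html):
    parser = JsonLdCollector()
    parser.feed(html)
    parser.close()
    return parser.types


page = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}'
    "</script></head><body><h1>Pricing</h1></body></html>"
)
print("FAQPage" in schema_types(page))
```

If the markup only appears after JavaScript runs, this check on the raw source will come back empty, which is the same situation the parsing figures above describe.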

Step 7: Check Content Freshness

AI systems continuously re-evaluate sources for accuracy and recency. Content from the last three months achieves an average AI coverage of 48%. Content that's 12–24 months old drops to 23%. Anything over two years old sits at around 18%.

On average, brands lose roughly 1.8% of AI coverage per month when content isn't updated.

Ask yourself: when was your core product page last updated? What about your pricing page? If the answer is "last year," that's probably showing up in your citation rate.
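To turn that question into a checklist, you can bucket each key page by the age of its last update. This is a sketch under assumptions: the bucket labels are made up for this example, the page list is invented, and the text above gives coverage figures only for the 0–3, 12–24, and 24+ month ranges, so the 3–12 month bucket has no figure attached.

```python
from datetime import date


def months_since(last_updated, today):
    """Whole months elapsed between two dates."""
    months = (today.year - last_updated.year) * 12 + (today.month - last_updated.month)
    if today.day < last_updated.day:
        months -= 1  # The current month has not fully elapsed yet.
    return months


def freshness_bucket(age_months):
    if age_months <= 3:
        return "0-3 months"
    if age_months < 12:
        return "3-12 months"
    if age_months <= 24:
        return "12-24 months"
    return "24+ months"


# Invented example pages and dates.
pages = {
    "/pricing": date(2025, 11, 20),
    "/product": date(2024, 9, 2),
    "/integrations": date(2023, 1, 15),
}
today = date(2026, 1, 10)
for path, updated in pages.items():
    age = months_since(updated, today)
    print(f"{path}: {age} months old, bucket {freshness_bucket(age)}")
```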

What the Results Actually Tell You

After running through all of this, you'll have data. Here's how to read it.

If you're not mentioned at all: the issue is usually fact density and third-party footprint. You exist, but AI doesn't have enough information to confidently cite you.

If you're mentioned but described inaccurately: a stale page or an outdated third-party source is likely feeding AI wrong information. Find it and correct it.

If you're mentioned but always second or third: your competitors have stronger citation authority, usually through more review coverage, more recent content, or better structured data.

If your traffic rank is strong but AI visibility is weak: pay attention here. This means your current SEO performance is masking the problem. The channel that's replacing SEO is the one you're invisible in.

When to Do This Manually vs. When to Use a Tool

Manual audits are free and give you a real signal. They're the right starting point if you've never done this before and just need to understand where you stand.

The problem is scale. You can test 10–20 prompts manually in a sitting. But AI responses vary: the same prompt returns different answers across sessions, platforms, and dates. To get statistically reliable data, you need to track hundreds of prompts consistently, over time.
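To see why a handful of runs is not enough, put a confidence interval around a mention rate. The sketch below uses the Wilson score interval, a standard interval for proportions chosen for this example rather than taken from the article; `wilson_interval` is a hypothetical helper and the run counts are invented.

```python
import math


def wilson_interval(mentions, runs, z=1.96):
    """Wilson score interval for a mention rate (z=1.96 gives roughly 95% confidence)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# The same observed 50% mention rate at two sample sizes.
for mentions, runs in [(5, 10), (100, 200)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs} runs: between {low:.0%} and {high:.0%}")
```

With 10 runs the plausible range spans roughly half the scale, so a month-over-month change of a few points cannot be distinguished from noise.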

That's where dedicated tools earn their place.

You should seriously consider a tool when:

You need to track more than 20–30 prompts regularly

You want to monitor competitor citations over time, not just at a point in time

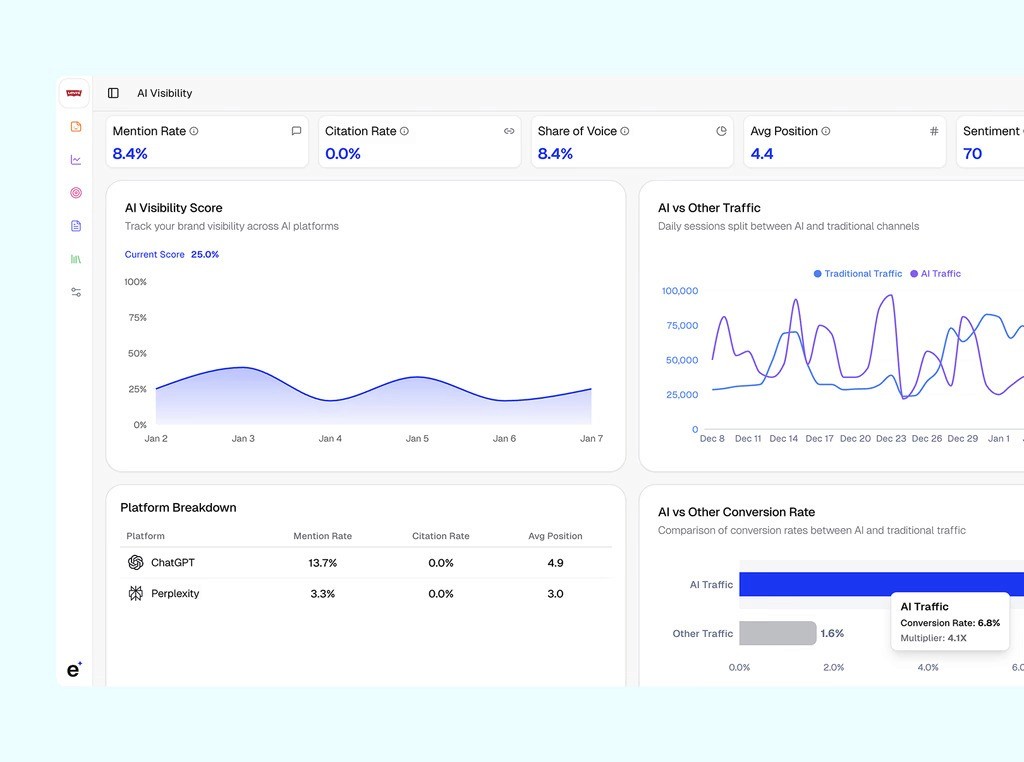

You're trying to correlate AI traffic with actual conversion data

You need to show this data to stakeholders who want a dashboard, not a spreadsheet

You're losing deals to competitors and want to know exactly where the gap is

How to Get a Free AI Visibility Audit with Erlin

If you'd rather start with structured data instead of a spreadsheet, Erlin offers a free AI visibility audit that handles the baseline setup automatically.

Here's how it works, step by step:

Step 1: Sign up and enter your domain: Erlin automatically captures your brand context, pulls in social signals, and generates initial visibility insights. You're not starting from zero; the baseline is built for you.

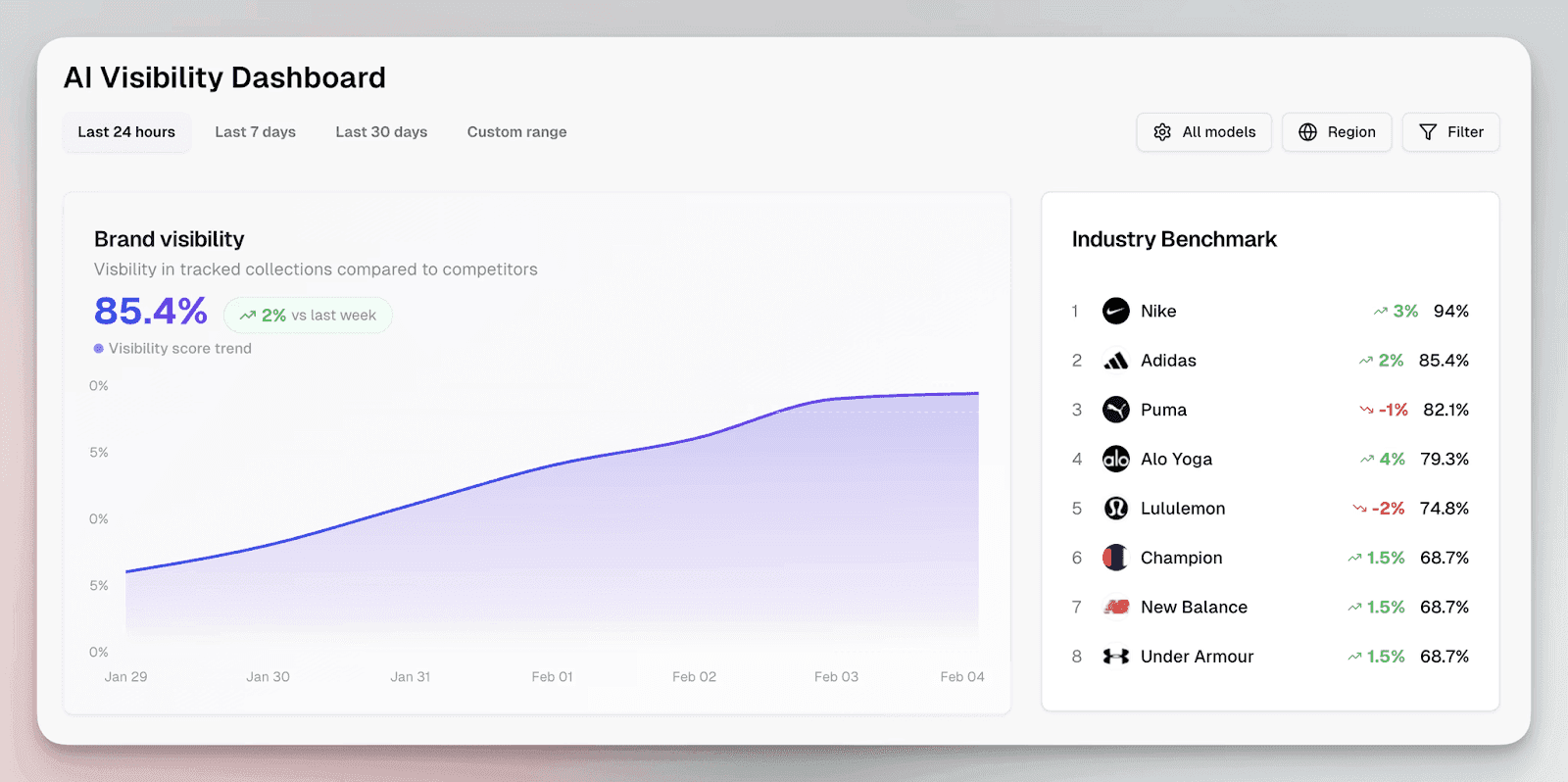

Step 2: Select your competitors: Erlin suggests up to five competitors. The important thing here: choose the brands you actually lose deals to. Not aspirational ones. Not the category giants you'd never realistically compete with. The brands your prospects mention during sales calls. Your visibility score only means something relative to who's showing up instead of you.

Step 3: Choose the prompts you want to track: Erlin suggests high-intent prompts based on your category, pulled from the kinds of queries buyers use during evaluation. These map closely to the "decision-stage" prompts described earlier: the ones that happen right before someone books a demo. You can customize the list or go with what's suggested.

Step 4: View your initial snapshot: You'll see your AI Visibility Rank, Traffic Rank, and a competitor comparison. The gap between your AI visibility rank and your traffic rank is the most important number to look at first. A strong traffic rank with a weak AI visibility rank is a warning sign. You're doing well in the channel that's declining and poorly in the channel that's growing.

Step 5: Connect Google Analytics and Search Console: This is where things get concrete. Once connected, you can see AI traffic versus non-AI traffic, conversion rates by source, and a breakdown by platform. Most teams discover that AI traffic represents 10–20% of overall volume but 30–40% of demos booked. That's the data point that gets the budget approved.

Step 6: Explore the Prompts section: This is the most useful part of the whole audit. You can see visibility trends per prompt, competitor comparison, and the actual answer history, meaning you can read what the AI said, whether your brand was mentioned, how it was described, and which sources it used.

If you find a gap, say you run "best CRM for SaaS startups" and you're not mentioned, you can look at who was mentioned and reverse-engineer it. Are those competitors listed on the same directories? Do they have comparison pages you don't? Are they cited in articles you're not in? That's your content roadmap.
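The AI versus non-AI split from Step 5 can also be approximated by hand from an analytics export. This is a minimal sketch, not how Erlin computes it: the referrer hostnames in `AI_REFERRERS` are assumptions you should verify against your own data, and the function names and sample sessions are made up.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI platforms; verify against your own export.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def ai_platform(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def conversion_by_channel(sessions):
    """sessions is an iterable of (referrer_url, converted) pairs."""
    stats = {"ai": [0, 0], "non_ai": [0, 0]}
    for referrer, converted in sessions:
        channel = "ai" if ai_platform(referrer) else "non_ai"
        stats[channel][0] += 1
        stats[channel][1] += int(converted)
    return {
        channel: {"sessions": n, "conversion_rate": (k / n if n else 0.0)}
        for channel, (n, k) in stats.items()
    }


# Invented sample sessions.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search", True),
    ("https://www.google.com/search", False),
    ("https://www.google.com/", True),
]
print(conversion_by_channel(sessions))
```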

Frequently Asked Questions

How is an AI visibility audit different from a standard SEO audit?

A standard SEO audit measures ranking positions, where you appear in a list of results. An AI visibility audit measures citation presence, whether you appear at all in an AI-generated answer. The distinction matters because AI systems don't return a ranked list. They synthesize an answer and name two or three brands. If you're not one of them, you get zero exposure for that query, regardless of your domain authority or keyword rankings.

How often should I run an AI visibility audit?

Monthly is the right cadence if you have the bandwidth. Quarterly at minimum. AI responses shift as models update, as new content gets indexed, and as competitors optimize their own presence. A snapshot from three months ago may not reflect what's happening today. Brands that monitor continuously detect errors in about 14 days. Brands that don't monitor take roughly 67 days to catch them, by which time the visibility damage has already compounded.

Do I need to optimize separately for ChatGPT, Gemini, and Perplexity?

There's significant overlap in what works across platforms. Fact density, structured content, and third-party citations help everywhere. But each platform has different weighting. Erlin's data shows that ChatGPT drives 91% of AI referral traffic, making it the clear priority. Perplexity comes in at 3%, Gemini at 2%. That doesn't mean ignoring the others, but it does mean your optimization priorities should reflect actual traffic share, not assumed parity.

Does ranking on page one of Google guarantee AI visibility?

No. Erlin tracked 500+ brands and found that traditional SEO ranking explains very little of the variation in AI citation rates. A brand can hold the top Google position and still be absent from ChatGPT's answer to the same question. The two systems use different signals. AI systems weigh fact clarity, structured data, and third-party validation more heavily than backlink volume or keyword density.

Can a smaller brand compete with a larger one in AI search?

Yes, and this is one of the more interesting aspects of how AI citation works. Erlin's analysis found that smaller brands with strong entity context and structured data regularly outperform Fortune 500 companies in specific query categories. AI systems don't default to the biggest brand; they default to the one with the clearest, most extractable information. A smaller brand that keeps its content fresh and its structured data clean can consistently outrank a larger competitor that hasn't optimized for AI at all.

What's the first thing I should fix after running an audit?

Start with fact density. If your product pages are heavy on marketing language and light on specific, verifiable claims, like exact pricing, feature lists, comparison data, and integration specs, that's usually the fastest lever to pull. Follow it with third-party coverage: make sure you're listed on relevant review platforms, that your Reddit and community presence is active, and that you're earning editorial mentions in your category. Both of those can move your citation rate within weeks.

How much does AI visibility change over time without intervention?

It declines. Brands that don't update their content lose roughly 1.8% of AI coverage per month. Content older than 12 months typically loses 20 or more coverage points. The flip side is also true: brands that update monthly see about 23% higher AI coverage than inactive brands. AI systems favor recency, and the staleness penalty is real.
