What Is AI Visibility? A Guide to Brand Reach in AI Search

You search for "best [your product category]." ChatGPT gives a synthesized answer. It names four brands. Your competitor is in there. You're not.

That gap is AI visibility. For most brands right now, it's the most expensive problem they don't yet have a name for.

This guide covers what AI visibility is, why it matters, how AI platforms decide who to cite, and nine practical strategies to improve it.

What Is AI Visibility?

AI visibility measures how prominently a brand appears in AI-generated answers across ChatGPT, Perplexity, Google Gemini, and Claude.

When someone types "What's a good project management tool for remote teams?" into ChatGPT, which brands get named? That's an AI visibility question. The answer has nothing to do with click-through rate or domain authority. It depends on how AI systems identify, retrieve, and trust your content.

This is different from traditional SEO, which ranks pages in a list of results based largely on links and keywords. AI systems skip the list and synthesize a direct answer. Erlin's research across 15,000+ purchase-intent prompts found that AI cites an average of 2.8 brands per response (Erlin data, 500+ brands tracked across ChatGPT, Perplexity, Gemini, and Claude, 2026).

If your brand isn't one of them, you're not eighth on the page. You're invisible for that query.

AI visibility is also binary per prompt: your brand is either in the answer or it isn't. There's no position four to work toward.

How Does AI Visibility Compare to Traditional SEO?

AI citation and search ranking use fundamentally different signals. A brand can rank first on Google and still be absent from ChatGPT's answer to the same question.

Aspect | Traditional SEO | AI Visibility |

Goal | Rank pages in search results | Get cited in AI-generated answers |

Key signals | Backlinks, keywords, page authority | Fact density, entity clarity, third-party validation, and content freshness |

Measured by | Rankings, organic traffic, CTR | Brand mention rate, citation frequency, share of voice, sentiment |

Where you appear | 10 blue links | Inside synthesized AI answers |

Competition | Top 10 results per query | 2–3 brands per AI answer |

That said, the two are not enemies. Strong organic content is still a prerequisite for Gemini citations, which correlate heavily with Google rankings. The difference is that AI visibility also requires entity clarity, structured data, fact-rich content, and third-party presence that SEO alone doesn't produce.

What Are the Two Types of AI Visibility?

Mention visibility is when an AI response includes your brand name but doesn't link to your site. ChatGPT says, "Tools like Acme and HubSpot are popular for this." You're named, but there's no link. This is useful for brand awareness, but it doesn't send traffic.

Citation visibility is when the AI platform links directly to your website as a source. Perplexity numbers its references. Google AI Overviews links to source pages. This is the more valuable form. It signals trust to the reader and drives actual visitors.

Aspect | Mention visibility | Citation visibility |

What happens | Your brand name appears in the AI answer | AI links directly to your website as a source |

Traffic impact | None | Direct referral traffic to your site |

Conversion quality | Indirect; shapes brand perception before a visit | High-intent; visitors arrive already familiar with your brand |

Which platforms | ChatGPT (browsing off), most LLMs in parametric mode | Perplexity, Google AI Overviews, ChatGPT (browsing on) |

How to earn it | Brand awareness, Wikipedia presence, third-party mentions | Structured content, fact density, machine-readable schema |

Both types matter. Mention visibility feeds the parametric layer, the knowledge a model absorbs during training, which drives future citations. Citation visibility is where to focus first.

Why Does AI Visibility Matter in 2026?

The purchase funnel now runs through AI. According to McKinsey's October 2025 AI Discovery Survey, 44% of AI search users say it's their primary source for product discovery, ahead of traditional search (31%), retailer websites (9%), and review sites (6%).

Erlin surveyed 200+ marketing leaders in 2026. The results are direct:

67% don't know how to measure their AI visibility

58% say no one at their company owns it

52% lack the time or resources to address it

Only 18% have an active strategy

(Erlin survey, 200+ marketing leaders, 2026)

The channel works. Measuring it is a different problem entirely.

AI-referred traffic converts at 3x-6x the rate of traditional organic search (Erlin client data, 2026). People using AI to research purchases are close to a decision. By the time they click through to your site, they've already read an AI-synthesized case for why you're worth considering.

The first-mover effect is real. The gap between AI visibility winners and losers is 9x today and widening 3.2% every month (Erlin data, 2026). Brands that establish AI visibility early gain citation authority that gets harder to replicate the longer competitors wait.

Where Does Your Brand Stand? The AI Visibility Ladder

Erlin's research across 500+ brands identified five tiers of AI visibility based on prompt coverage across ChatGPT, Perplexity, Gemini, and Claude.

Tier | Coverage | What it looks like |

AI Invisible | 0–15% | Fewer than 3 verifiable facts, no third-party validation, and content older than 18 months |

AI Fragile | 15–35% | 3–4 detectable facts, fewer than 25 reviews, inconsistent monthly presence |

AI Present | 35–60% | 5–7 structured facts, 25–75 reviews, regular citations |

AI Preferred | 60–80% | 8+ structured facts, 50+ reviews, active Reddit participation, FAQ schema, frequent top-3 placement |

AI Dominant | 80%+ | 10+ structured facts, Wikipedia presence, 100+ reviews, weekly content updates, zero detectable errors |

50% of brands score below 35% prompt coverage across the four major AI platforms (Erlin data, 2026). Most brands sitting in the bottom two tiers don't know it.
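The ladder above can be read as a simple lookup from prompt coverage to tier. The sketch below is illustrative only: the function and constant names are hypothetical, and because the published ranges share endpoints (15% closes one tier and opens the next), it assumes each lower bound is inclusive.

```python
# Tier thresholds copied from the ladder above, highest first.
# Assumption: a shared endpoint (e.g. exactly 15%) belongs to the higher tier.
TIERS = [
    (80.0, "AI Dominant"),
    (60.0, "AI Preferred"),
    (35.0, "AI Present"),
    (15.0, "AI Fragile"),
    (0.0, "AI Invisible"),
]


def visibility_tier(coverage_pct: float) -> str:
    """Map a prompt-coverage percentage (0-100) to a ladder tier name."""
    if not 0.0 <= coverage_pct <= 100.0:
        raise ValueError("coverage_pct must be between 0 and 100")
    for lower_bound, name in TIERS:
        if coverage_pct >= lower_bound:
            return name
    return "AI Invisible"  # not reachable for valid input; kept as a safe default


if __name__ == "__main__":
    for pct in (8, 15, 42, 79.9, 92):
        print(f"{pct}% -> {visibility_tier(pct)}")
```

Coverage alone does not capture the qualitative markers in the third column (reviews, Wikipedia presence, update cadence), so treat the result as a starting label rather than a full diagnosis.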

How Do AI Platforms Decide Who to Cite?

Each platform has its own sourcing logic, and they don't overlap much.

Google Gemini behaves most like a traditional search engine. It maintains a strong correlation with organic rankings. E-E-A-T signals, structured data, and Knowledge Graph presence are the primary factors.

Perplexity is retrieval-first. Every query triggers a real-time web search. It rewards technical clarity, statistical content, and community validation. Reddit is its leading source.

ChatGPT runs on two systems: training data and real-time Bing search when browsing is enabled. With browsing off, it answers from the training data. With browsing on, it follows Bing's top results. Brand search volume is a strong predictor of recall.

Platform | Data source | Key visibility signal |

Google Gemini | Live index + Knowledge Graph | E-E-A-T, schema, organic ranking |

Perplexity | Real-time retrieval (200B+ URLs) | Technical depth, stats, Reddit presence |

ChatGPT | Training + Bing (when browsing on) | Brand awareness, Wikipedia, directories |

Claude | Parametric + web search | Long-form authoritative content |

What Makes AI Cite a Brand?

Erlin's analysis of 500+ brands over six months of tracking identified four factors with the strongest correlation to AI citation rates. Together, they explain 89% of AI visibility variance (Erlin data, 2026).

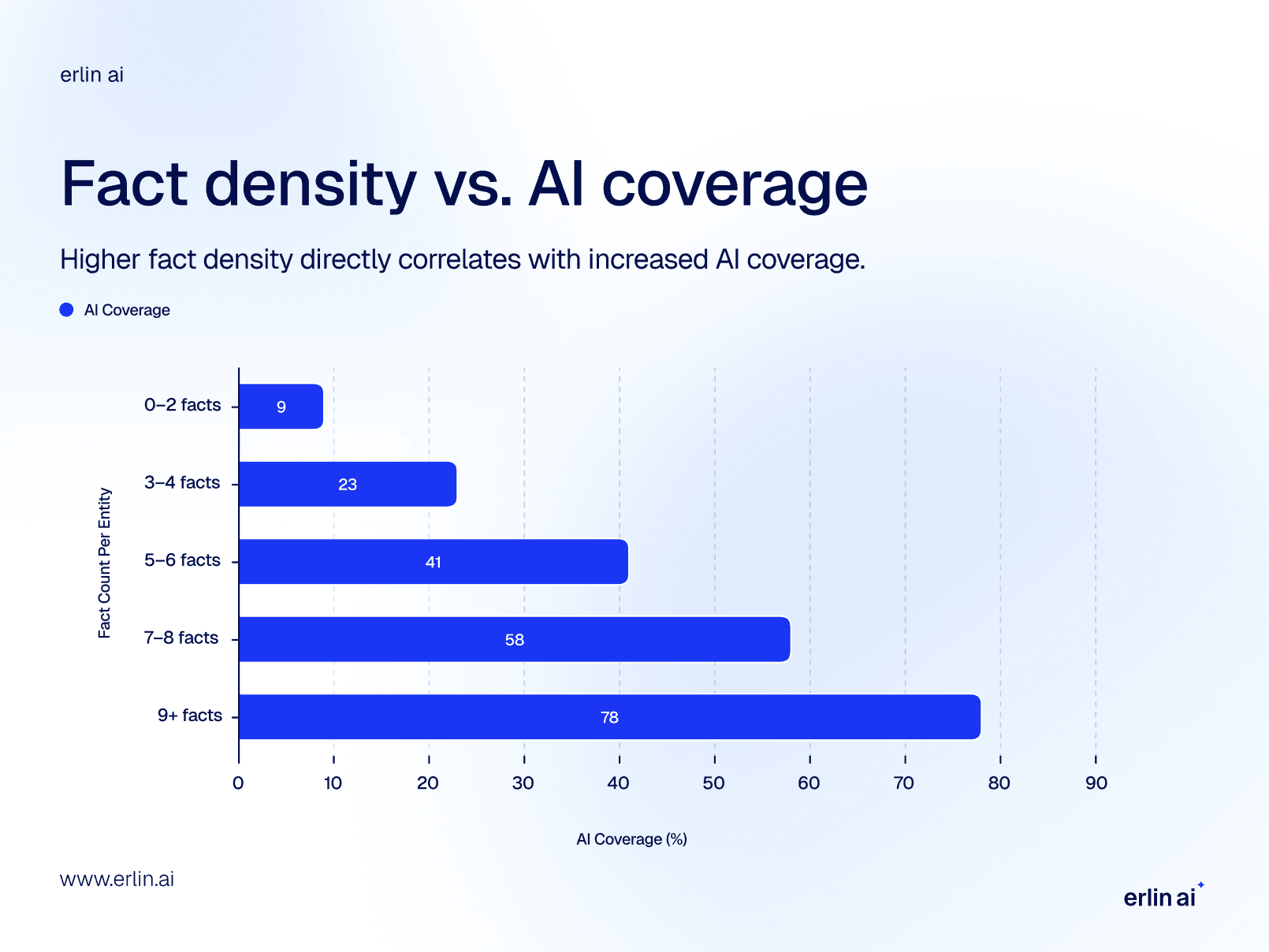

Fact Density

Brands with 9+ structured facts, such as pricing, features, use cases, and specifications, appear in 78% of relevant AI answers. Brands with 0–2 facts appear in 9% of cases.

Facts per brand profile | AI coverage |

0–2 facts | 9% |

3–4 facts | 23% |

5–6 facts | 41% |

7–8 facts | 58% |

9+ facts | 78% |

(Erlin data, 2026)

Brands with 8+ structured attributes get cited 4.3x more than brands with fewer than 3 (Erlin data, 2026). AI doesn't process marketing language. It extracts discrete facts. "Industry-leading solution" is not citable. "Processes up to 10,000 records per hour" is.

Source Authority

68% of AI citations come from third-party sources. Only 32% come from brand-owned websites (Erlin data, 2026).

Source type | Citation lift | Freshness requirement |

Reddit discussions | 3.4x higher | Under 6 months |

Wikipedia | 2.9x higher | Persistent |

Review platforms (G2, Capterra) | 2.6x higher | Under 12 months |

YouTube | 2.1x higher | Persistent |

Owned content only | Baseline (1.0x) | Under 12 months |

What others say about your brand is the primary input AI uses to form an opinion about it.

Structured Data

Machine-readable formats drive meaningful coverage lift fast.

Format | Coverage lift | Typical time to impact |

Comparison tables | +34% | 14 days |

llms.txt file | +32% | 14 days |

FAQ schema | +28% | 21 days |

(Erlin data, 2026)
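FAQ schema, as listed in the table above, is commonly expressed as schema.org FAQPage markup in JSON-LD. The following is a minimal sketch of generating that markup. The helper name `faq_jsonld` and the sample question are illustrative assumptions, not part of Erlin's tooling.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    # Hypothetical content; replace with the questions your page really answers.
    markup = faq_jsonld(
        [("How long does setup take?", "Most teams finish setup in under a day.")]
    )
    # Embed the output in a <script type="application/ld+json"> tag in static HTML.
    print(json.dumps(markup, indent=2))
```

The markup should mirror questions and answers that are visible on the page itself, and it is worth validating the result before publishing.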

Static HTML with schema markup has a 94% AI parsing success rate. JavaScript-rendered content has a 23% success rate (Erlin data, 2026).
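One rough way to see the static-versus-JavaScript gap is to check whether a key fact is present in the raw HTML a server returns, before any script runs. The sketch below uses only Python's standard library. The sample page, the product name, and the helper names are hypothetical, and real crawlers may behave differently from this simplified model.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text from raw HTML, ignoring the contents of script and style tags."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def facts_in_static_html(html, facts):
    """Return {fact: True/False} for whether each fact appears in the static text."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return {fact: fact.lower() in text for fact in facts}


# Hypothetical page: one fact is in the markup, one is injected by JavaScript.
SAMPLE_HTML = """
<html><body>
  <h1>Acme Sync</h1>
  <p>Processes up to 10,000 records per hour.</p>
  <div id="pricing"></div>
  <script>document.getElementById("pricing").textContent = "From $49 per month";</script>
</body></html>
"""

if __name__ == "__main__":
    print(facts_in_static_html(SAMPLE_HTML, ["10,000 records per hour", "$49 per month"]))
```

In this sample the throughput claim is found and the price is not, because the price only exists after the script executes in a browser.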

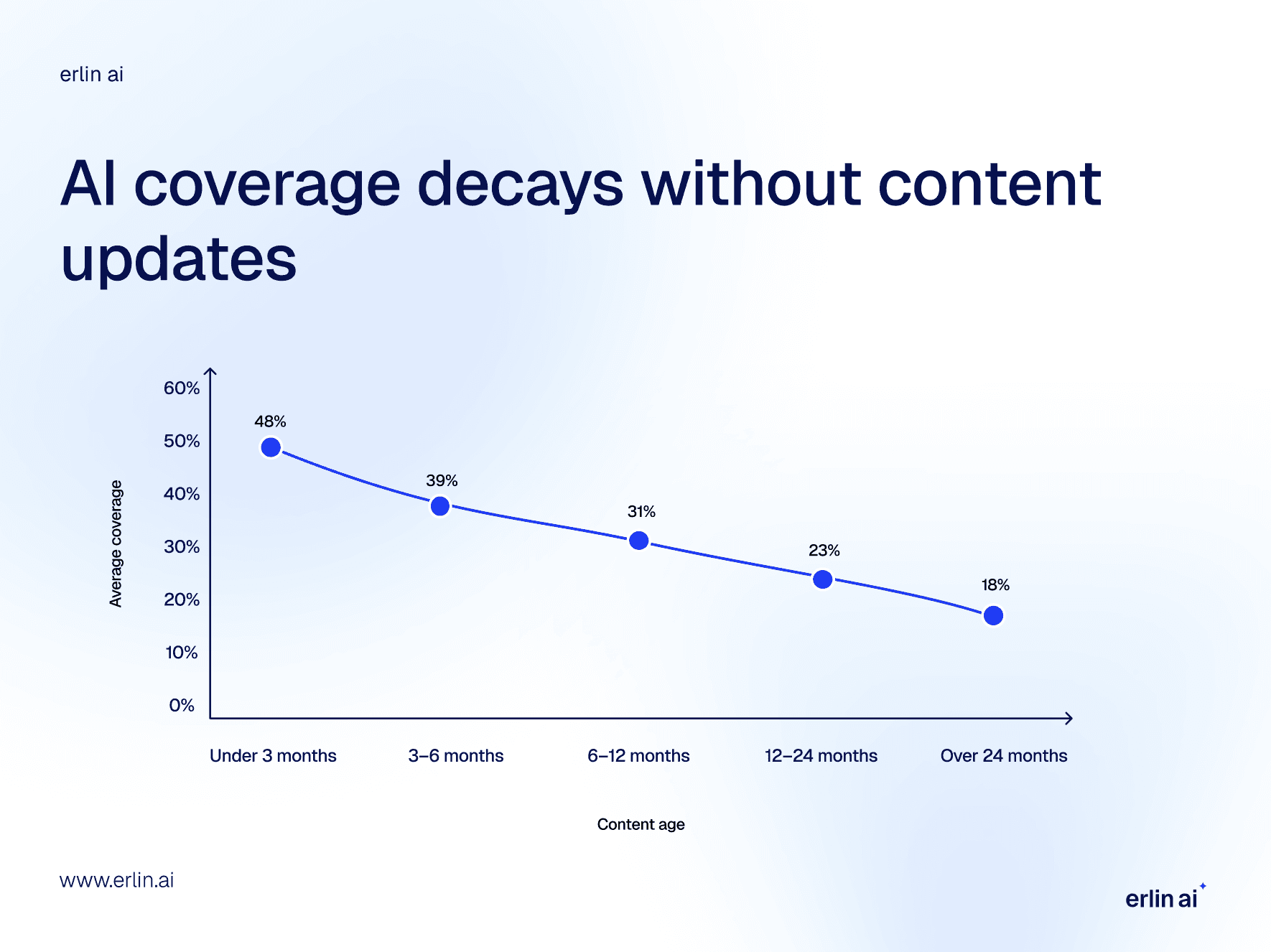

Content Recency

AI systems continuously re-evaluate brand information. Brands updating content monthly see 23% higher AI coverage than brands with stale content (Erlin data, 2026). The staleness penalty is 1.8% coverage lost per month (Erlin data, 2026).

Content age | Average AI coverage |

Under 3 months | 48% |

3–6 months | 39% |

6–12 months | 31% |

12–24 months | 23% |

Over 24 months | 18% |

9 Strategies to Improve Your AI Visibility

1. Build Out Your Entity Footprint

AI starts by retrieving facts from knowledge graphs and entity records. A brand consistently defined across multiple sources is cited more often than one that isn't.

Establish a consistent presence across the sources AI pulls from first:

Create or claim your Wikidata entry.

Add Organization schema markup with sameAs links to Wikidata, LinkedIn, and Crunchbase.

Claim your Google Knowledge Panel and correct any inaccurate attributes.

Make sure every public profile uses the same brand description, category, and founding information.

Inconsistent data across sources creates entity confusion that suppresses citation rates.
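As an illustration of the schema step above, the sketch below builds schema.org Organization markup with `sameAs` links. Every value is a placeholder: the brand name, the URLs, and the Wikidata ID are assumptions to replace with your own records, and `organization_jsonld` is a hypothetical helper name.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build schema.org Organization markup that links to other entity records."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),
    }


if __name__ == "__main__":
    markup = organization_jsonld(
        name="Acme",  # placeholder brand
        url="https://www.example.com",
        description="Project management software for remote teams.",
        same_as=[
            "https://www.wikidata.org/wiki/Q0000000",  # placeholder Wikidata ID
            "https://www.linkedin.com/company/example",
            "https://www.crunchbase.com/organization/example",
        ],
    )
    # Place the output inside a <script type="application/ld+json"> tag.
    print(json.dumps(markup, indent=2))
```

Using the same description string here and on every public profile is one practical way to keep the entity data consistent.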

2. Write Content AI Can Extract From

AI breaks content into chunks and pulls the most self-contained, factual passages. Long paragraphs requiring sequential reading are hard to extract from. Short, direct, self-contained sections are easy.

Lead every section with a direct answer in 40–60 words.

Use descriptive subheadings, where each heading names exactly one idea.

Write each section so it can be read without context from what came before.

Add comparison tables, FAQ sections, and definition blocks.

These are the formats that appear repeatedly in Erlin's high-citation brand profiles.
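The 40–60 word guideline for opening answers is easy to check mechanically. The sketch below assumes a section is passed in as plain text without its heading, with paragraphs separated by blank lines. The function names are hypothetical.

```python
def lead_paragraph(section_text):
    """Return the first non-empty paragraph of a section, whitespace-normalized."""
    for block in section_text.split("\n\n"):
        if block.strip():
            return " ".join(block.split())
    return ""


def lead_word_count(section_text):
    """Count the words in the section's opening paragraph."""
    return len(lead_paragraph(section_text).split())


def has_extractable_lead(section_text, min_words=40, max_words=60):
    """True when the opening paragraph falls inside the target word range."""
    return min_words <= lead_word_count(section_text) <= max_words


if __name__ == "__main__":
    section = "Too short to stand alone.\n\nSupporting detail follows here."
    print(lead_word_count(section), has_extractable_lead(section))
```

A word count says nothing about whether the opening paragraph actually answers the heading's question, so this works best as a first-pass filter before a human read.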

3. Pack More Verifiable Facts Into Your Content

Every page describing your product should answer: what it costs, what it does specifically, who it's for, how long it takes to set up, what it integrates with, and what customers have measured after using it.

Run a quick audit of your key pages.

Is pricing publicly accessible without a form?

Are features listed in scannable formats, not buried in paragraphs?

Is your competitive positioning explicit, not implied?

Are key claims backed by specific numbers or named references?

Is operational information easy to find?

Brands with two or more "no" answers typically show limited AI coverage.
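The audit above can be recorded as a small checklist so results are comparable across pages. This is a sketch, with hypothetical names, that applies the "two or more no answers" rule of thumb from the text.

```python
AUDIT_QUESTIONS = (
    "Is pricing publicly accessible without a form?",
    "Are features listed in scannable formats, not buried in paragraphs?",
    "Is your competitive positioning explicit, not implied?",
    "Are key claims backed by specific numbers or named references?",
    "Is operational information easy to find?",
)


def audit_page(answers):
    """Summarize a page audit. `answers` maps each question to True (yes) or False (no)."""
    missing = [q for q in AUDIT_QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    failed = [q for q in AUDIT_QUESTIONS if not answers[q]]
    return {
        "no_count": len(failed),
        "failed": failed,
        # Rule of thumb from the text: two or more "no" answers signal limited coverage.
        "limited_coverage_risk": len(failed) >= 2,
    }


if __name__ == "__main__":
    answers = dict.fromkeys(AUDIT_QUESTIONS, True)
    answers[AUDIT_QUESTIONS[0]] = False  # pricing hidden behind a form
    answers[AUDIT_QUESTIONS[3]] = False  # claims lack numbers
    print(audit_page(answers))
```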

4. Build Third-Party Validation Deliberately

Since 68% of AI citations come from third-party sources, actively pursue the placements that carry the most weight.

Target industry roundup articles. Being listed alongside recognized category leaders signals to AI that you belong in the same group.

Earn reviews on G2, Capterra, and Trustpilot.

Secure expert quotes in trade publications.

Get listed in relevant directories with full, accurate brand descriptions.

Reddit discussions under six months old carry the highest citation lift at 3.4x but require ongoing freshness. Wikipedia carries 2.9x lift and is persistent. Review platforms carry a 2.6x lift but must be under 12 months old (Erlin data, 2026).

5. Show Up in Community Platforms

Reddit, Wikipedia, and YouTube together represent roughly 51% of AI citation sources in Erlin's analysis (Erlin data, 2026). Community-validated content carries credibility signals that owned promotional content doesn't.

Build a genuine presence in three to five subreddits where your buyers ask questions.

Answer with depth, not pitches. High-karma contributions feed directly into Perplexity's retrieval.

Publish LinkedIn articles on questions your buyers are asking AI.

Create YouTube explainers on high-volume queries in your category.

The goal is to be genuinely present in the conversations AI is reading.

6. Fix What's Blocking AI Crawlers From Reading Your Site

Good content that AI can't access gets zero credit.

Audit your robots.txt to confirm AI crawlers, such as GPTBot, ClaudeBot, PerplexityBot, and Google-Extended, aren't blocked. Some CMS platforms add aggressive bot blocks by default.

Move critical information out of JavaScript-rendered components.

Validate your structured data using Google's Rich Results Test.

Add an llms.txt file to your site root to communicate your content structure to AI crawlers.
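The robots.txt audit can be partly automated with Python's standard `urllib.robotparser` module. The sketch below checks the four user-agent tokens named above against a robots.txt body. The sample file is invented for illustration, and the parser's matching rules are a simplification of how any individual crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended")


def blocked_ai_crawlers(robots_txt, path="/"):
    """Return the AI crawler tokens that this robots.txt disallows for `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, path)]


# Hypothetical robots.txt: blocks GPTBot everywhere, everyone else only from /admin/.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    print(blocked_ai_crawlers(SAMPLE_ROBOTS_TXT))  # ['GPTBot']
```

To test a live site, fetch its robots.txt text first and pass it in. Run the check for your most important paths as well as the root, since a block can apply to a single directory.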

7. Invest in E-E-A-T Signals, Especially the Human Ones

AI systems evaluate citation-worthiness using signals such as author bios with LinkedIn URLs and domain credentials, expert quotes from named people with real titles, case studies with specific numbers, and citation links for every factual claim. Each of these signals is a concrete action, not a general recommendation.

8. Build a Topic Cluster Around Your Core Subject

AI doesn't evaluate individual pages in isolation. It evaluates how thoroughly a brand covers a topic. A brand with a foundational definition page, subtopic guides, comparison content, FAQ pages, and case studies gets cited across a wider range of related prompts than a brand with one strong page.

Map your core topic into a connected cluster. Link everything explicitly. Refresh cluster content on a rolling schedule. Average AI coverage drops from 48% for content under three months old to 18% for content over two years old (Erlin data, 2026). Stale pages weaken the whole cluster, not just themselves.

9. Monitor AI Citations the Way You Monitor Search Rankings

AI citation patterns shift month to month. Brands lose roughly 1.8% AI coverage per month when content isn't refreshed (Erlin data, 2026). A platform algorithm shift, a competitor earning a high-authority mention, or a schema error can each move your brand mention rate noticeably within a week.

Monitored brands detect AI errors in 14 days on average. Unmonitored brands take 67 days on average to discover errors (Erlin data, 2026). Tracking cuts detection time by 79%, from 67 days to 14.
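A basic version of this monitoring is to log AI answers for a fixed prompt set and compute how often the brand is named. The sketch below assumes brand mention rate means the share of logged answers that mention the brand at least once. That definition, the sample answers, and the function name are assumptions for illustration, not Erlin's methodology.

```python
import re


def brand_mention_rate(answers, brand):
    """Fraction of answers (0.0-1.0) that mention `brand` as a whole word."""
    if not answers:
        return 0.0
    # Word boundaries stop "Acme" from matching inside a longer word.
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for answer in answers if pattern.search(answer))
    return hits / len(answers)


if __name__ == "__main__":
    # Hypothetical logged answers for four prompts.
    logged = [
        "Tools like Acme and HubSpot are popular for this.",
        "HubSpot is a common choice.",
        "acme works well for remote teams.",
        "Consider Acmeify for small projects.",
    ]
    print(f"{brand_mention_rate(logged, 'Acme'):.0%}")  # 50%
```

Running the same prompt set on a schedule, and separately per platform, gives a trend line rather than a single snapshot.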

Frequently Asked Questions

We rank on page one of Google. Why aren't we showing up in AI answers?

Google ranking and AI citation correlate only weakly overall, with Gemini as the main exception. Erlin tracked 500+ brands and found that traditional SEO rank explains little of why a brand gets cited in AI responses (Erlin data, 2026). AI engines weigh entity clarity, content freshness, fact density, and third-party validation. A brand can rank first on Google and be absent from ChatGPT's answer to the same question because the two systems use fundamentally different signals.

Does high domain authority guarantee AI citations?

No. Erlin found that focused brands with a domain authority under 20 consistently outperform Fortune 500 companies in specific query categories (Erlin data, 2026). What earns a citation slot is factual clarity and content freshness, not domain size.

Why is my brand appearing in some AI platforms but not others?

Each platform has different sourcing logic. Gemini favors brands with strong organic rankings on Google. Perplexity rewards statistical content and community validation through sources like Reddit. ChatGPT is influenced by brand search volume and Wikipedia presence. Tracking brand visibility separately per platform is necessary since cross-platform performance varies significantly.

What is Share of AI Voice?

Share of AI Voice is your brand's percentage of all AI citations within your competitive set, for a defined set of prompts. If you and three competitors are collectively cited 100 times across a prompt set and your brand appears 22 times, your Share of AI Voice is 22%. It is tracked across ChatGPT, Gemini, Perplexity, Claude, and Copilot to give a cross-platform visibility score.
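The calculation above is a simple ratio. The sketch below reproduces the worked example, with hypothetical brand names and competitor counts chosen so the total is 100.

```python
def share_of_ai_voice(citations, brand):
    """Return `brand`'s percentage of all citations in the competitive set."""
    total = sum(citations.values())
    if total == 0:
        return 0.0
    return 100.0 * citations.get(brand, 0) / total


if __name__ == "__main__":
    # Citation counts across one prompt set; competitor figures are made up.
    counts = {"YourBrand": 22, "CompetitorA": 30, "CompetitorB": 28, "CompetitorC": 20}
    print(f"{share_of_ai_voice(counts, 'YourBrand'):.0f}%")  # 22%
```

The result is only comparable over time if the prompt set and the competitive set stay fixed between measurements.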

How long does it take to improve AI visibility?

It depends on what you're fixing. Technical fixes such as structured data implementation or entity linking typically show measurable citation movement within one to two weeks. Comparison tables drive 34% higher AI coverage within 14 days of implementation (Erlin data, 2026). Content restructuring shows results within 30 days. Third-party and PR work compounds over two to four months.

Can smaller brands compete with large enterprise players in AI search?

Yes. Erlin's analysis shows smaller brands with strong entity context and structured data routinely outperform larger competitors in specific query categories (Erlin data, 2026). AI doesn't default to the biggest brand. It defaults to the clearest one.

Where to Start

Run an AI visibility audit. Erlin's free audit shows your Brand Mention Rate, which platforms are citing you, how competitors compare, and what your AI coverage score is across high-intent prompts in your category.

From there, identify the type of gap. Is it technical (crawlers can't access your content)? Structural (facts are buried in prose)? Third-party (your brand is absent from Reddit, review sites, and media)? Entity (AI systems aren't sure what you do)? Each type has a different fix.

Get Your AI Visibility Score → app.erlin.ai/get-started

