LLM tracking tools tell you when AI mentions your brand, how it describes you, which prompts surface your competitors instead, and what sources are driving those results.

The category is also crowded and confusing right now. Some tools count mentions and stop there. Others go deep on accuracy, competitive benchmarking, source attribution, and execution.

"LLM tracking tool" means genuinely different things depending on who's selling it, and the wrong choice wastes months of data collection you'll never be able to use.

This review covers five platforms that matter for marketing teams in 2026: what each does well, where it falls short, and which type of team it's built for.

The best LLM tracking tools at a glance

| Tool | Platforms | Starting price | Best for | Free trial |
|---|---|---|---|---|
| Erlin | ChatGPT, Perplexity, Gemini, Claude | $97/month | Track + act: growth teams, agencies | Yes |
| Profound | 3 on Growth, 10+ on Enterprise | $399/month | Enterprise analytics, Prompt Volumes | No |
| Scrunch AI | 7+ | $300/month | Brand accuracy, agent traffic | 7-day |
| Peec AI | ChatGPT, Perplexity, AI Overviews + add-ons | €89/month | International, 115+ languages | No |
| Otterly AI | ChatGPT, Perplexity, Gemini, Claude, AI Overviews | $29/month | Quick setup, share-of-voice baseline | No |

What to look for in an LLM tracking tool

Before the reviews, here are a few things that separate a useful tool from a dashboard full of data nobody acts on.

Platform coverage

ChatGPT and Perplexity are obvious starting points. But Google AI Overviews sits inside the world's most-used search engine, Gemini is growing fast in Google Workspace accounts, and Claude is increasingly where technical buyers do their research. A tool covering two platforms is showing you a partial picture and calling it complete.

Prompt-level visibility

Aggregate visibility scores work for a board slide. They're useless for knowing which content to create next. You need to see which specific prompts surface your brand and which ones give the answer to a competitor.
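To make prompt-level visibility concrete, here is a minimal Python sketch. It assumes each AI answer has already been collected as a record holding the prompt, the platform, and the brands the answer named; the prompts and brand names (Acme, RivalCo, OtherCo) are hypothetical, and no particular tool's data format is implied.

```python
from collections import defaultdict

# Hypothetical sample: one record per AI answer to one tracked prompt.
responses = [
    {"prompt": "best expense management software", "platform": "ChatGPT", "brands": ["Acme", "RivalCo"]},
    {"prompt": "best expense management software", "platform": "Perplexity", "brands": ["RivalCo"]},
    {"prompt": "expense tool for startups", "platform": "ChatGPT", "brands": ["RivalCo"]},
    {"prompt": "expense tool for startups", "platform": "Gemini", "brands": ["RivalCo", "OtherCo"]},
]

def prompt_visibility(records, brand):
    """Return {prompt: (answers naming the brand, total answers)}."""
    counts = defaultdict(lambda: [0, 0])
    for r in records:
        counts[r["prompt"]][1] += 1          # every answer counts toward the total
        if brand in r["brands"]:
            counts[r["prompt"]][0] += 1      # answers that actually name the brand
    return {p: tuple(c) for p, c in counts.items()}

def lost_prompts(records, brand):
    """Prompts where the brand never appears in any tracked answer."""
    vis = prompt_visibility(records, brand)
    return sorted(p for p, (hits, _) in vis.items() if hits == 0)
```

Here `lost_prompts(responses, "Acme")` returns `["expense tool for startups"]`: a prompt where only competitors get named, and therefore a candidate for the next piece of content.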

Source attribution

AI answers pull from Reddit threads, G2 reviews, Wikipedia, blog posts, and brand-owned pages. Knowing which sources drive your citations tells you exactly where to invest. Without it, you're optimizing blind.
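At its simplest, source attribution means counting which domains AI answers cite. A minimal sketch using only Python's standard library, assuming the citation URLs have already been collected; the URLs are illustrative, and `acme.example.com` is a placeholder for a brand-owned site.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical citation URLs gathered from AI answers in one category.
citations = [
    "https://www.reddit.com/r/SaaS/comments/abc123/",
    "https://www.g2.com/products/acme/reviews",
    "https://en.wikipedia.org/wiki/Expense_management",
    "https://www.reddit.com/r/startups/comments/def456/",
    "https://acme.example.com/pricing",
]

def domain_of(url):
    """Hostname without a leading 'www.' so variants group together."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def top_sources(urls, n=3):
    """Most frequently cited domains, highest count first (ties keep first-seen order)."""
    return Counter(domain_of(u) for u in urls).most_common(n)
```

On this sample, `top_sources(citations)` puts `reddit.com` first with two citations, which is the kind of signal that tells you where earned-media effort would pay off.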

Accuracy, not just presence

A mention isn't always a win. AI hallucinates. It quotes wrong pricing, attributes competitor features to your product, and invents integrations that don't exist. Tools that count every mention as positive are giving you a misleading picture at the exact moment you need clarity.
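A rough illustration of why presence and accuracy are separate checks: the sketch below compares one AI answer against a fact sheet the brand maintains. The fact sheet, the $49 price, and the integration names are all hypothetical, and the regex and substring matching are deliberately crude; a real tool would need much more robust extraction.

```python
import re

# Hypothetical fact sheet the brand maintains as its source of truth.
FACTS = {"price_per_month": 49, "integrations": {"Slack", "Salesforce"}}

# Integration names to look for in answers (hypothetical list).
WATCHED_TOOLS = ("Slack", "Salesforce", "HubSpot", "Zendesk")

# Matches dollar amounts such as $59 or $59.00.
PRICE_RE = re.compile(r"\$(\d+(?:\.\d{2})?)")

def check_answer(text, facts, watched=WATCHED_TOOLS):
    """Return a list of problems found in one AI answer; empty means none detected."""
    issues = []
    for amount in PRICE_RE.findall(text):
        if float(amount) != facts["price_per_month"]:
            issues.append(f"wrong price: ${amount}")
    for tool in watched:
        if tool in text and tool not in facts["integrations"]:
            issues.append(f"unsupported integration: {tool}")
    return issues
```

A mention-counting tool would score "Acme costs $59 per month and integrates with Slack and HubSpot." as a win; this check flags both the wrong price and the invented HubSpot integration.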

An action path

Visibility data has no value sitting in a dashboard. The useful tools connect what you find to what you do next.

The 5 Best LLM Tracking Tools in 2026 (Comparison & Review)

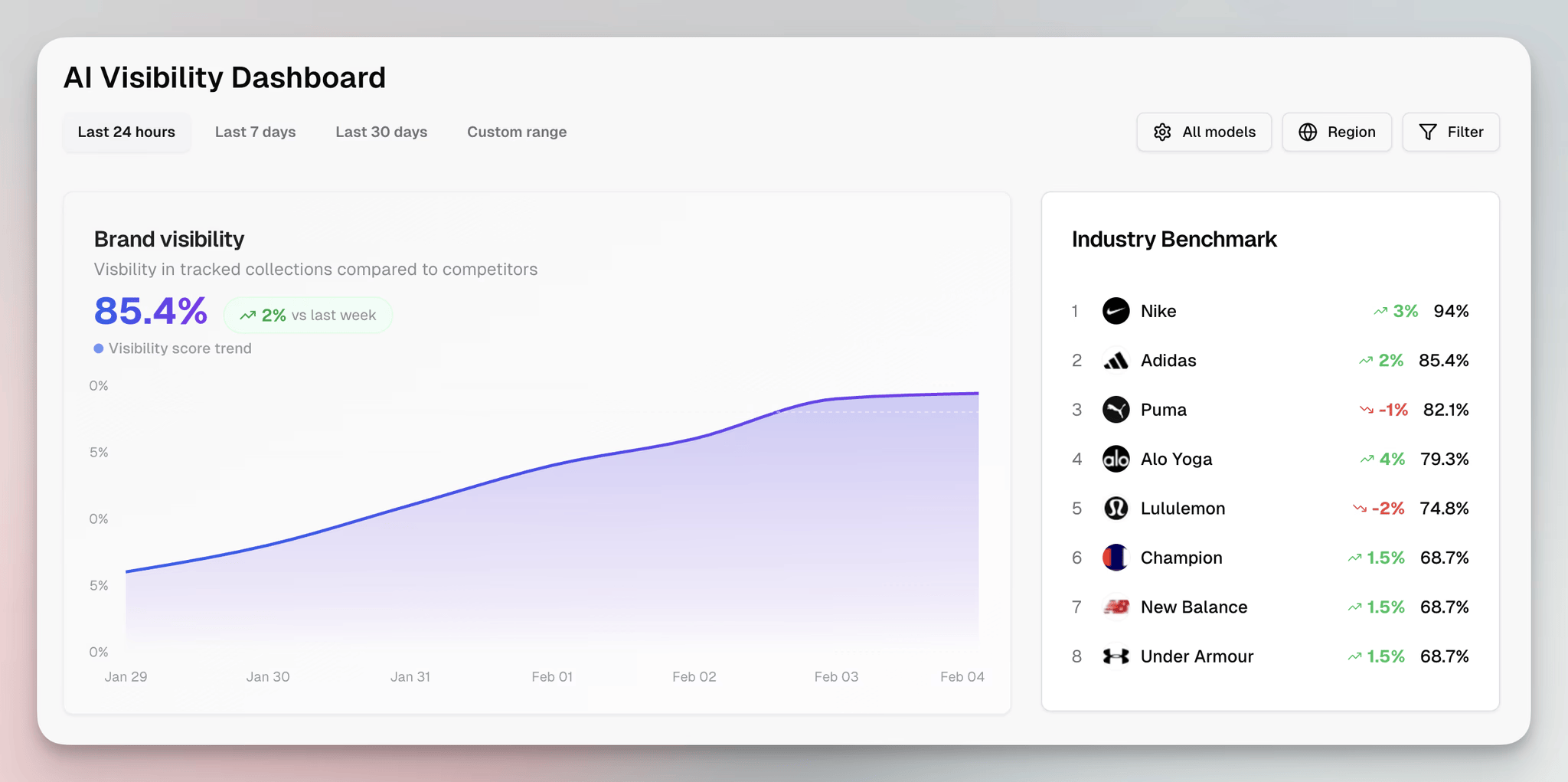

1. Erlin: best for teams that need to track and act

Erlin tracks brand visibility across ChatGPT, Perplexity, Gemini, and Claude, then connects that data to execution. Most monitoring platforms stop at the dashboard. Erlin builds the bridge between "here's what AI says about you" and "here's what to publish this week."

The platform is built around three pillars: Insights, Opportunities, and Actions. Insights covers visibility data: which prompts surface your brand, how you're described, sentiment, and share of voice against competitors.

Opportunities identifies specific gaps and ranks them by potential impact using Erlin's proprietary Fire Score. Actions converts those gaps into workflows: brief creation, content drafts, FAQ schema, structured answers, with a human in the loop at each stage.

The source intelligence layer is worth calling out. Erlin maps which domains AI platforms draw from when answering questions in your category: Reddit discussions, review platforms, Wikipedia, press coverage, partner sites.

That tells you exactly where to focus earned media and content efforts. Brands with 8+ structured attributes across those source types get cited 4.3x more than brands with fewer than 3. (Erlin data, 2026)

It integrates with Google Analytics 4, Search Console, Shopify, and Slack, which means AI referral traffic gets attributed, not just observed. When you can show AI-referred visitors converting 3x better than visitors from traditional organic search, the investment in visibility work becomes something you can actually defend to finance.

The benchmark data comes from 180-day continuous monitoring of 500+ brands across four AI platforms. That dataset is what powers the Fire Score prioritisation.

It's also what separates Erlin's recommendations from generic content advice: the suggestions are grounded in what has actually moved citations across a large brand sample.

Pricing: Standard $97/month, Pro $249/month, Enterprise custom.

Best for: Growth teams, content teams, and agencies that need visibility tracking connected to execution. Strong fit for SaaS brands where appearing in AI-generated comparisons directly affects pipeline.

Worth knowing: Higher tiers are needed for multi-brand or agency-scale management. The execution layer means slightly more setup than a pure monitoring tool.

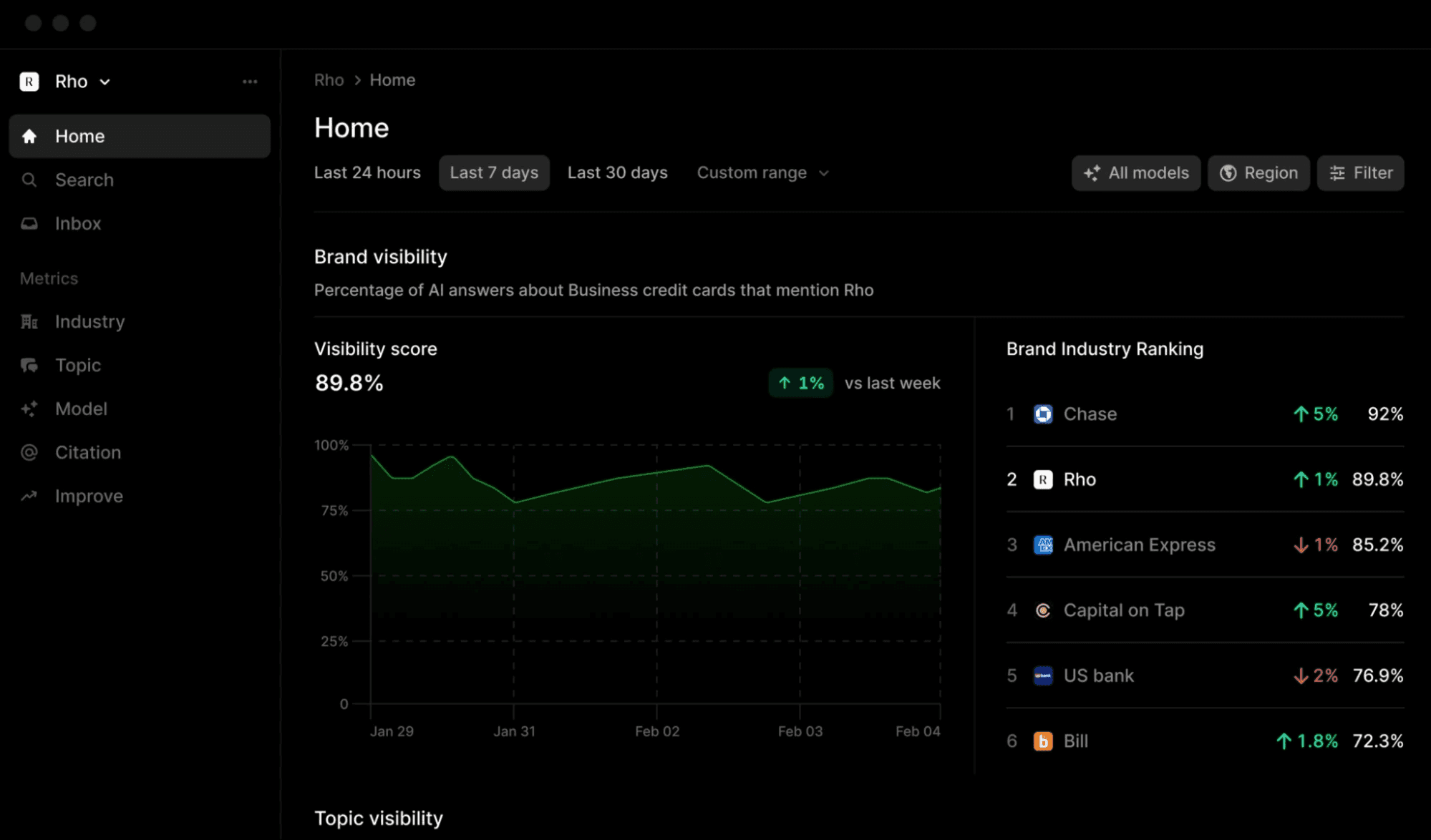

2. Profound: best for enterprise depth

Profound is the best-funded platform in this category: $58.5 million raised, backed by Sequoia, Kleiner Perkins, and NVIDIA. That investment shows in the product. This is the deepest analytics platform available for brands that need statistically reliable, enterprise-grade AI visibility data.

The standout is Prompt Volumes. No other tool shows actual AI search demand by topic: the real frequency with which users ask AI platforms about subjects in your category, with demographic breakdowns by region, age, and income. It's keyword research for AI search, and nothing else does it at scale.

The platform tracks 10+ AI engines on Enterprise plans: Grok, Meta AI, DeepSeek, Amazon Rufus, alongside the standard four. Coverage at that breadth matters for Fortune 500 brands managing visibility across the full AI discovery ecosystem.

Profound's customer list is real validation: Ramp, Figma, DocuSign, MongoDB, Zapier, Walmart, and LG. Ramp's 7x AI visibility increase is the most-cited case study in the AEO category, and Profound was named a G2 Winter 2026 Leader with 140+ verified reviews.

The pricing deserves a candid look. The Lite plan at $499/month tracks ChatGPT only with 50 prompts. The Growth plan at $399/month adds Perplexity and Google AI Overviews, but Claude, Gemini, Grok, and full platform coverage require Enterprise at $2,000–5,000+/month.

There's no free trial and no self-serve signup. The gap between Growth and Enterprise is where most mid-market teams hit a wall.

If the budget is there, Profound is the category leader for a reason. If it isn't, several alternatives offer 7+ platform coverage at a fraction of the cost.

Pricing: Growth $399/month (3 platforms), Enterprise $2,000–5,000+/month. No free trial.

Best for: Fortune 500 brands, large enterprises, and agencies managing enterprise clients who need SOC 2 compliance, Prompt Volumes data, and full multi-platform coverage.

Worth knowing: The $399 Growth plan is the real comparison price for evaluation purposes. Full enterprise value (Prompt Volumes, unlimited seats, API access) requires custom Enterprise pricing. Budget realistically before the demo call.

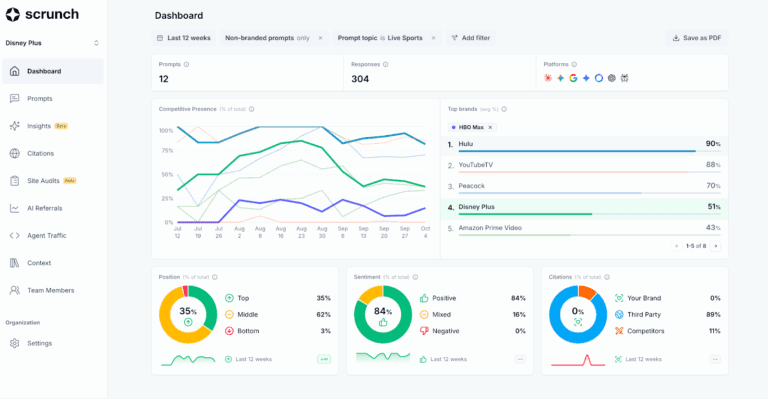

3. Scrunch AI: best for brand accuracy and agent traffic

Scrunch takes a different angle. Where most LLM tracking tools focus on how often you appear, Scrunch focuses on the gap between how AI represents your brand and how you want to be represented. Those are different problems, and Scrunch is built for the second one.

The Knowledge Hub is the most distinctive feature in this category. It identifies when AI is pulling from outdated sources or generating inaccurate information about your brand, surfacing the specific content or third-party pages responsible for wrong answers.

For brands where a misattributed feature or wrong pricing figure is losing demos, that accuracy layer isn't a nice-to-have.

Scrunch also offers an Agent Experience Platform that creates an AI-optimized version of your site for agent traffic. Crawler analytics track how GPTBot, ClaudeBot, and PerplexityBot access your content: which pages they visit, how often they return, and how crawler activity translates to real user referrals. Most monitoring tools don't go this deep.
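A basic version of crawler analytics can be approximated from your own server logs. The sketch below assumes logs in the standard Combined Log Format and matches the user-agent tokens GPTBot, ClaudeBot, and PerplexityBot; the sample lines are made up, and this is not how Scrunch implements the feature. User-agent strings can be spoofed, so treat the counts as indicative rather than verified.

```python
import re
from collections import Counter, defaultdict

# User-agent tokens used by OpenAI, Anthropic, and Perplexity crawlers.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Pulls the request path and user-agent out of a Combined Log Format line:
# ip - - [time] "METHOD /path HTTP/1.1" status bytes "referer" "user-agent"
LOG_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def crawler_hits(lines):
    """Count requests per AI crawler, overall and per page."""
    per_bot = Counter()
    per_page = defaultdict(Counter)
    for line in lines:
        m = LOG_RE.search(line)
        if not m:
            continue  # skip lines that don't match the expected format
        for bot in AI_CRAWLERS:
            if bot in m.group("ua"):
                per_bot[bot] += 1
                per_page[bot][m.group("path")] += 1
                break
    return per_bot, per_page

# Made-up sample lines for illustration.
SAMPLE = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [01/Mar/2026:10:05:00 +0000] "GET /docs HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.1 - - [01/Mar/2026:10:06:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "https://chatgpt.com/" "Mozilla/5.0 (Macintosh)"',
    '203.0.113.9 - - [02/Mar/2026:08:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
]
```

On the sample, GPTBot hits `/pricing` twice and ClaudeBot hits `/docs` once; the browser visit referred from chatgpt.com is ignored here, though the same parsed referer field is where a referral count would start.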

The segmentation is strong. Scrunch automatically tags prompts by intent, funnel stage, and branded/unbranded classification, without manual setup. That means filtered reports are built in, not assembled from exported spreadsheets.

One user on r/seogrowth put it this way: "I tried the Otterly plugin for Semrush, it was useless. Scrunch isn't cheap, but I see this as a tool I'm only going to use more."

Pricing: Starts at $300/month (350 prompts). Demo required for Enterprise.

Best for: Enterprise content teams and agencies where brand accuracy in AI responses is the primary concern. Strong fit for teams managing AI crawler traffic at scale.

Worth knowing: Drill-down within individual reports is limited. If you need granular prompt-level data exports alongside the category-level views, you'll hit friction. The price point is a real barrier for smaller teams.

4. Peec AI: best for multi-language and multi-market coverage

Peec AI is a Berlin-based platform backed by €29 million in funding. One feature separates it from everything else in the category: support for 115+ languages with multi-country tracking, plus daily prompt refreshes on all plans. Nothing else comes close on international breadth.

For global brands managing AI visibility across European, APAC, and Latin American markets, that coverage closes a gap that most tools don't acknowledge exists. A US-centric monitoring tool tracking five platforms in English is showing a narrow slice of where AI discovery actually happens for an international brand.

The platform distinguishes between explicit brand mentions and silent source citations: cases where AI synthesises from your content without naming you.
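The difference between a mention and a silent citation can be written as a simple rule. A minimal sketch, assuming each answer arrives with its text and its cited URLs; the function, labels, and example domains are hypothetical, and Peec AI's own classification may work differently.

```python
from urllib.parse import urlparse

def classify(answer_text, cited_urls, brand_name, brand_domain):
    """Label one AI answer as 'mention', 'silent_citation', or 'absent'.

    mention: the brand name appears in the answer text.
    silent_citation: the brand isn't named, but one of its pages is cited.
    """
    if brand_name.lower() in answer_text.lower():
        return "mention"
    for url in cited_urls:
        host = urlparse(url).hostname or ""
        # Match the brand domain itself or any subdomain of it.
        if host == brand_domain or host.endswith("." + brand_domain):
            return "silent_citation"
    return "absent"
```

An answer that cites `https://blog.acme.example/guide` without ever saying "Acme" comes back as `silent_citation`: your content shaped the answer, but you got no visible credit for it.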

Share of Voice analytics break down by prompt category and by individual AI platform. All plans include unlimited seats, which matters more than it looks for mid-size teams where multiple people need access.

Wix's published result is the clearest evidence of effectiveness in practice: a 5x year-over-year increase in traffic and demo requests from LLMs, attributed to identifying exactly which content was being surfaced in specific AI platforms.

Limitations are worth naming. Reddit users have flagged that prompt setup is manual, insights can feel thin without a dedicated analyst working the data, and the interface can feel brittle.

The Starter plan at €89/month is also higher than most entry-level alternatives, which makes it a hard sell for teams still evaluating whether AI visibility tracking is worth the investment.

Pricing: Starter €89/month (~$95) for 25 prompts. Higher tiers on request.

Best for: Global brands requiring multi-language and multi-country coverage. EU companies prioritising GDPR compliance. Teams with dedicated analysts.

Worth knowing: Not all AI platforms are included in base plans. Grok and some others require add-ons. Prompt discovery is manual; the tool doesn't automatically surface tracking opportunities the way some competitors do.

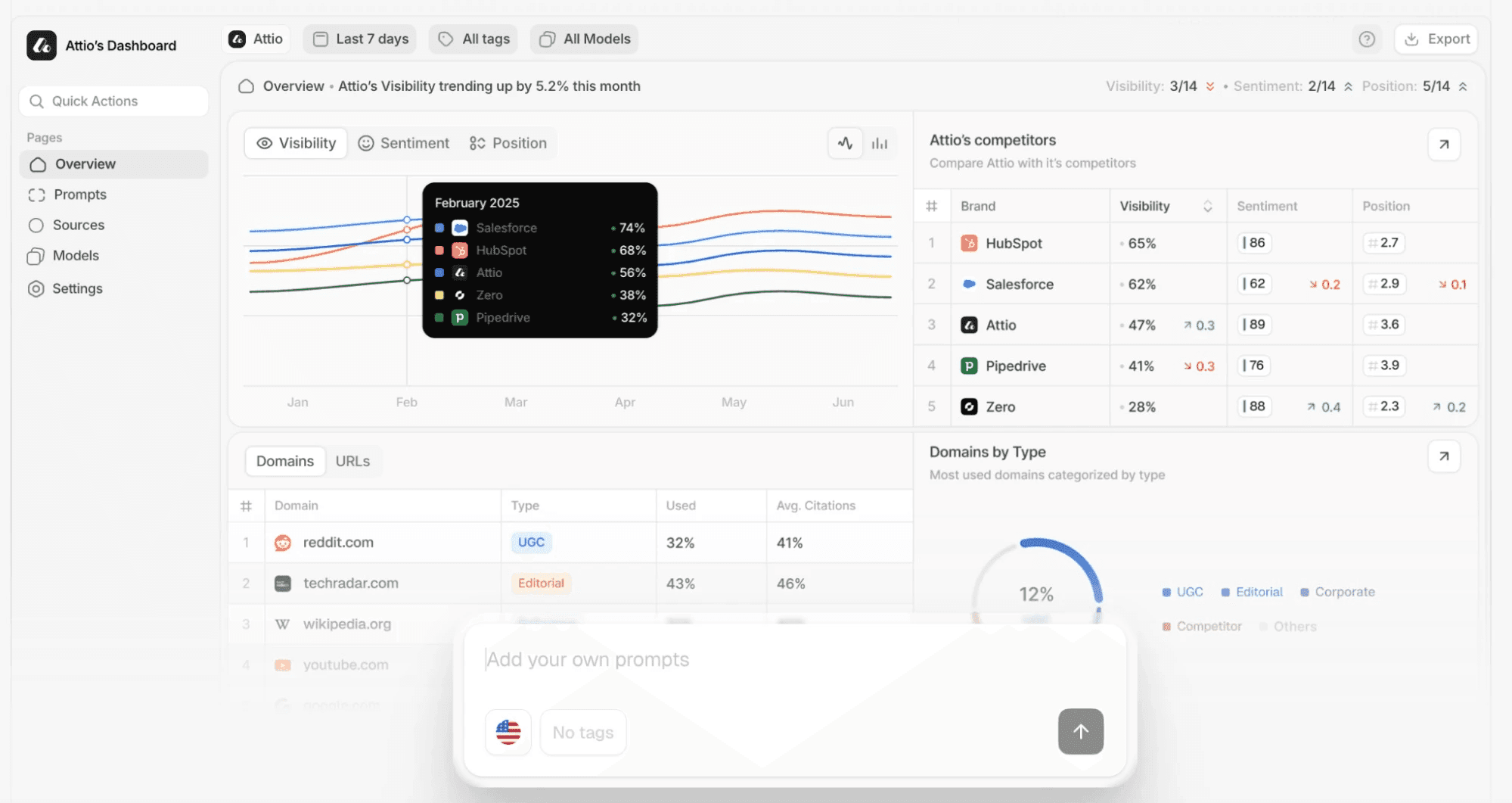

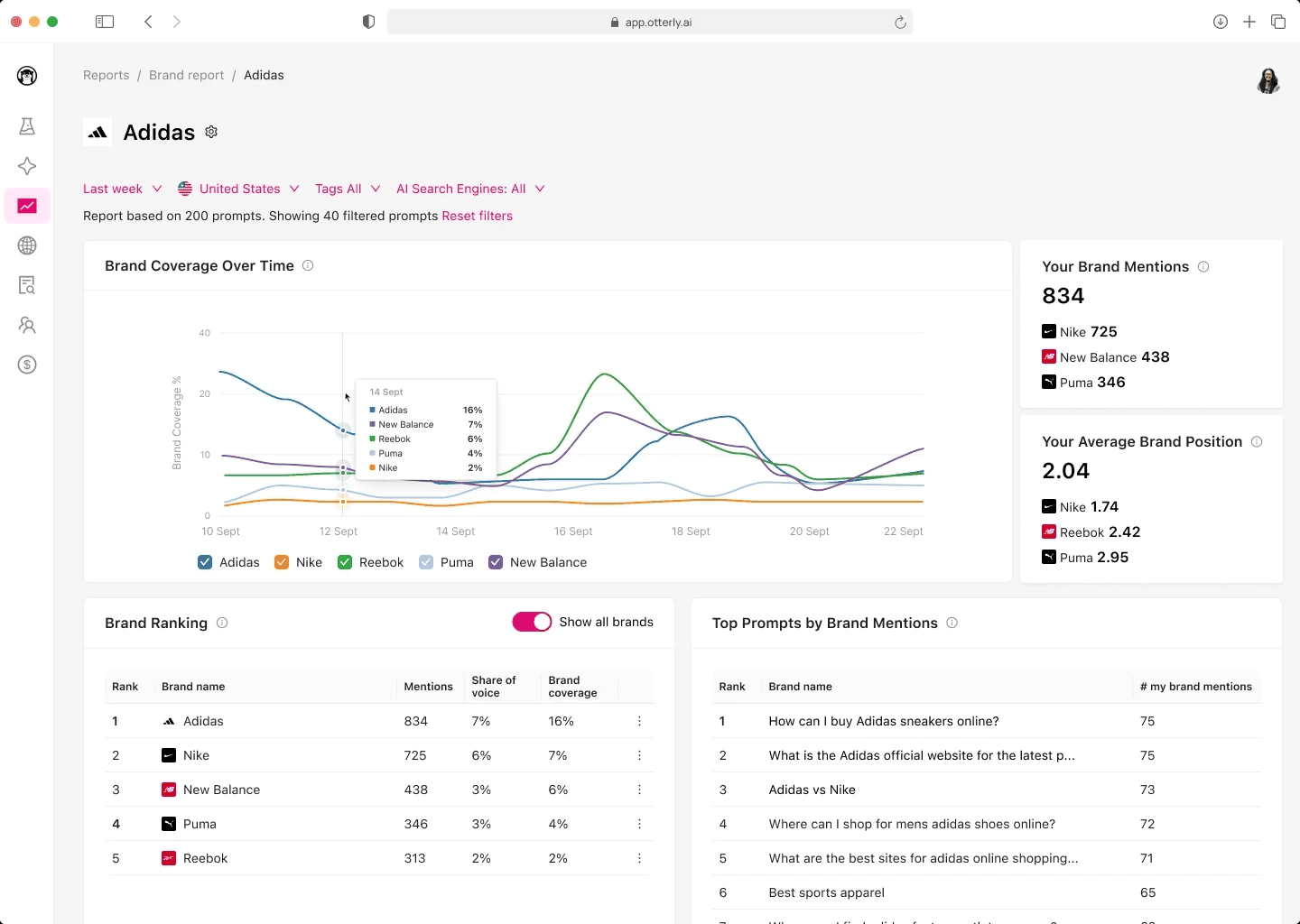

5. Otterly AI: best for getting started fast

Otterly was one of the first tools built specifically for monitoring AI model responses, and it keeps an advantage that matters for teams new to the category: signup to first visibility data in under ten minutes.

The Share of AI Voice metric is the clearest differentiator. It quantifies the percentage of relevant AI responses that mention your brand versus competitors. For teams that need one number to show leadership, that metric cuts through complexity quickly.
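As described here, Share of AI Voice can be sketched as the percentage of relevant answers that name each brand. This formula is an assumption for illustration; Otterly's exact calculation may differ, and the answers below are hypothetical.

```python
from collections import Counter

def share_of_ai_voice(responses, brands):
    """Percent of responses that mention each brand (counted once per response)."""
    total = len(responses)
    counts = Counter()
    for mentioned in responses:
        for b in set(mentioned) & set(brands):
            counts[b] += 1
    return {b: round(100 * counts[b] / total, 1) if total else 0.0 for b in brands}

# Hypothetical: brands named in each of five AI answers to relevant prompts.
answers = [["Acme", "RivalCo"], ["RivalCo"], [], ["Acme"], ["RivalCo", "OtherCo"]]
```

`share_of_ai_voice(answers, ["Acme", "RivalCo", "OtherCo"])` gives Acme 40%, RivalCo 60%, and OtherCo 20%. Under this definition the figures don't need to sum to 100, because one answer can name several brands and another can name none.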

Coverage spans ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude. The Lite plan at $29/month is the lowest entry point in the category by a wide margin.

The core limitation is straightforward: Otterly counts mentions. It doesn't evaluate whether the content of those mentions is accurate, so a response with wrong pricing logs as a positive brand mention. That isn't a bug; it reflects the problem Otterly was designed to solve. But teams for whom accuracy matters will hit that ceiling.

Reporting has constraints too. Single-tag filtering means multi-segment reports require exporting spreadsheets and combining them manually. Sentiment analysis is available only at the individual prompt level, not in the aggregate view. These friction points grow as the AI visibility strategy matures.

The Lite-to-Standard pricing jump is steep: $29 to $189. Most teams tracking a real brand need more than 15 prompts, so budget for Standard from the start if you're serious about the data.

Pricing: Lite $29/month (15 prompts), Standard $189/month (100 prompts), Premium $489/month (400 prompts).
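The per-prompt arithmetic behind that advice, using the list prices and prompt allowances quoted above and assuming each prompt counts once against the monthly allowance:

```python
# Otterly tiers as quoted in this article: (monthly price in USD, prompts tracked).
OTTERLY_TIERS = {"Lite": (29, 15), "Standard": (189, 100), "Premium": (489, 400)}

def cost_per_prompt(tiers):
    """Monthly price divided by the number of tracked prompts, per tier."""
    return {name: price / prompts for name, (price, prompts) in tiers.items()}
```

That works out to roughly $1.93 per prompt on Lite, $1.89 on Standard, and $1.22 on Premium. The Lite-to-Standard jump buys volume rather than a lower unit price; a meaningful per-prompt discount only appears at Premium.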

Best for: Marketing teams at the start of their AI visibility journey. Agencies running initial client audits. Teams that want fast setup and a clear share-of-voice baseline without complexity.

Worth knowing: Factor in the Standard tier cost from day one. Otterly is an excellent starting tool; it's harder to use as a long-term strategic platform as requirements grow.

Which tool fits your situation

You're a growth or content team that needs to track AI visibility and act on it without hiring more people: Erlin connects insight to execution. The brief creation, content workflows, and Fire Score prioritisation do the work between data and publishing.

You're at a Fortune 500 company with a dedicated search intelligence function and a real budget: Profound is the category leader. Prompt Volumes and SOC 2 compliance matter at that scale, and nothing else delivers both.

Brand accuracy is the priority (wrong pricing, hallucinated features, misattributed claims hitting your pipeline): Scrunch's Knowledge Hub is built for exactly that problem.

You operate across multiple countries and languages: Peec AI's 115+ language coverage is genuinely in a category of its own.

You're testing the water and need to see what AI says about your brand before committing to a platform: Otterly's Lite plan at $29/month is the lowest-friction starting point.

One timing note: the gap between AI visibility winners and losers is 9x today and widening 3.2% every month. (Erlin data, 500+ brands, 2026) Early monitoring data becomes the baseline that informs everything else in your GEO strategy. The tool matters less than starting.

Frequently Asked Questions

What is an LLM tracking tool?

An LLM tracking tool monitors how AI platforms like ChatGPT, Perplexity, Gemini, and Claude mention, describe, and recommend your brand in their responses. It tracks brand mentions, share of voice, citation sources, sentiment, and prompt-level visibility, giving marketing teams the same kind of data for AI answers that Google Search Console gives them for organic search results.

How is LLM tracking different from traditional SEO monitoring?

Traditional SEO tools track keyword rankings in a list of blue links. LLM tracking monitors synthesis: whether your brand appears inside AI-generated answers to conversational queries. The mechanics are structurally different. AI doesn't rank pages; it selects brands based on fact density, source authority, structured data, and content freshness. 68% of AI citations come from third-party sources, not brand-owned websites. (Erlin data, 2026) Traditional SEO monitoring misses all of it.

How many AI platforms should I track?

At minimum: ChatGPT, Perplexity, Google AI Overviews, and Gemini. These four cover the majority of purchase-intent AI queries for most B2B and e-commerce brands. Claude is growing fast among technical buyers. The honest shortcut: ask your sales team which AI tools come up in discovery calls. Start tracking those.

How quickly does AI visibility data change?

High-traffic prompts churn at 23% month-over-month. Long-tail prompts churn at 8%. (Erlin data, 2026) That's why continuous tracking beats periodic audits for brands running an active AI visibility program. One-time audits are useful for diagnosing your starting position. Ongoing monitoring tells you whether the changes you make are actually working.
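Churn figures depend on how churn is defined, and the definition behind these numbers isn't stated. A minimal sketch under one plausible assumption: churn is the share of tracked prompts whose set of named brands changed between two monthly snapshots. The prompts and snapshots are hypothetical.

```python
def churn_rate(prev, curr):
    """Share of prompts present in both snapshots whose set of named brands changed.

    prev and curr map prompt -> set of brands named in that month's answer.
    """
    common = prev.keys() & curr.keys()
    if not common:
        return 0.0
    changed = sum(1 for p in common if prev[p] != curr[p])
    return changed / len(common)

# Hypothetical monthly snapshots for four tracked prompts.
march = {"p1": {"Acme"}, "p2": {"RivalCo"}, "p3": {"Acme", "RivalCo"}, "p4": set()}
april = {"p1": {"Acme"}, "p2": {"Acme"}, "p3": {"Acme", "RivalCo"}, "p4": set()}
```

`churn_rate(march, april)` returns `0.25`: one of four prompts flipped. Running the same calculation every month is what separates continuous tracking from a one-off audit.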

Can I just use my existing SEO platform for LLM tracking?

Semrush and Ahrefs have added AI visibility modules. They're useful if your team already lives in those platforms and wants a single-tool view. The limitation is depth; AI visibility sits as a secondary layer on infrastructure that wasn't built for it. Dedicated LLM tracking tools were designed from the ground up around AI answer analysis, which typically means better prompt-level data, more granular source attribution, and faster product development as the category evolves. If AI visibility is a strategic priority, a dedicated tool will get you further.

Get Your Free AI Visibility Score (see where your brand stands across ChatGPT, Perplexity, Gemini, and Claude with Erlin's free audit)

