AI visibility is quickly becoming as important as traditional SEO, but most teams don’t know how to track it.

This 2026 guide breaks down how to track AI visibility across tools like ChatGPT, Perplexity, and Gemini, with a clear, practical framework you can start using today.

What Is AI Search Tracking?

AI search tracking measures how often your brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, Gemini, and Claude.

It tells you which prompts surface your brand, how accurately you're described, where competitors appear instead of you, and whether that picture is improving or getting worse over time.

Traditional SEO tracking measures rankings. AI visibility tracking measures citations. The difference matters because AI answers don't show a list of ten blue links and let the user decide.

They pick 2–3 brands and recommend them directly. If your brand isn't in that set, you're not ranked lower; you're absent entirely.

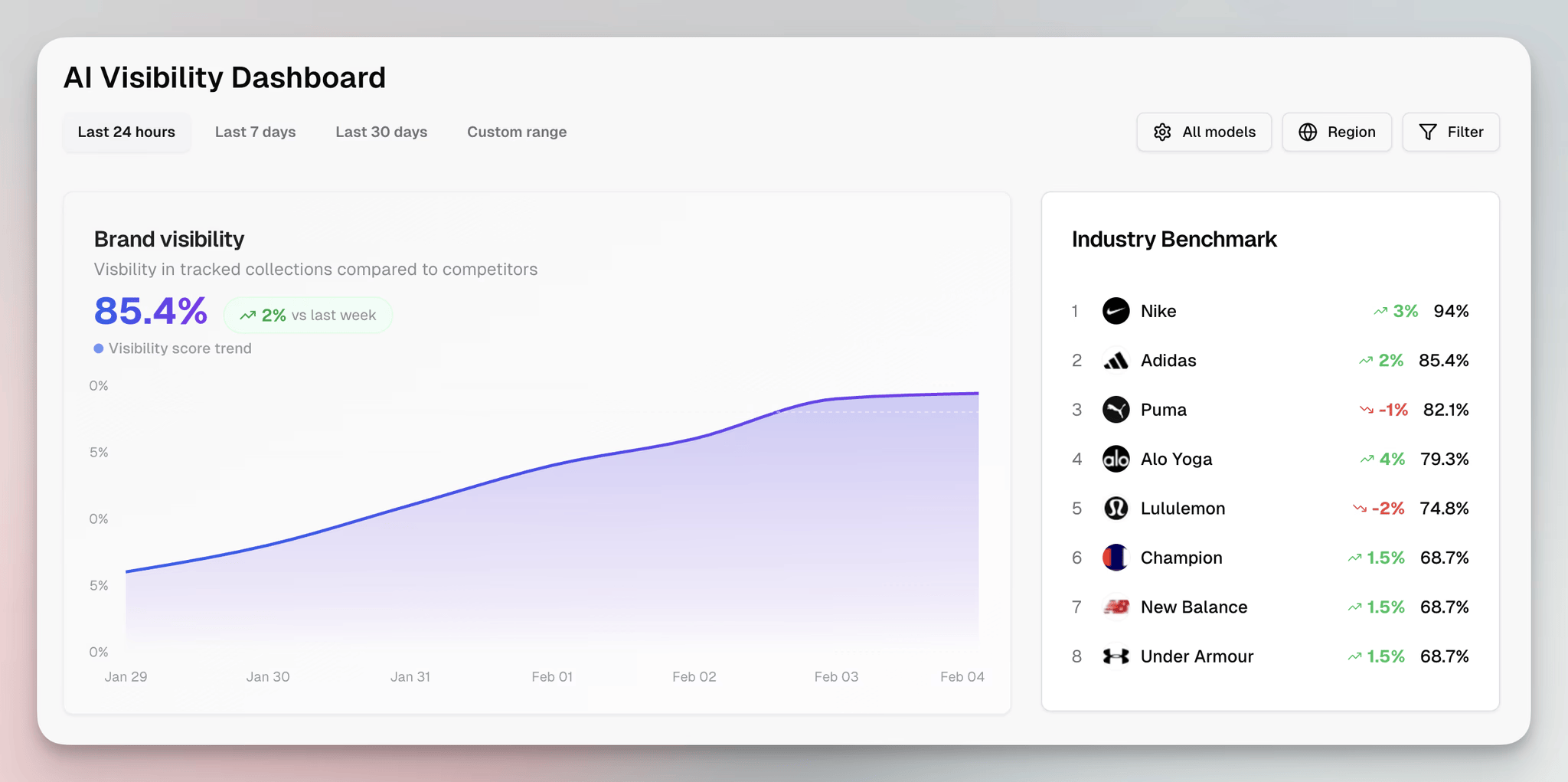

Erlin is built specifically for this. It tracks brand appearances across the four major AI platforms, runs purchase-intent prompts automatically, benchmarks your coverage against competitors, and flags errors before they compound.

Most importantly, it connects what you're tracking to content actions you can take to fix gaps. More on that setup below.

Major AI Search Platforms to Track

Not all AI platforms behave the same way or send the same kind of traffic. Tracking them together as one number hides what's actually happening.

ChatGPT generates roughly 91% of all AI referral sessions, according to Erlin's analysis of referral data across platforms. It names sources and links out to websites, which means a citation here has real traffic attached to it. This is where to focus first.

Perplexity accounts for about 3% of AI referral traffic. It provides cited, source-linked answers similar to ChatGPT but at a fraction of the volume. It's growing, and it's worth tracking separately rather than lumping it into an aggregate.

Gemini sits at roughly 2% of AI referral traffic as a standalone chatbot. Google AI Overviews use the same underlying models but are tracked separately from Gemini's direct interface. Both matter for different reasons: Overviews affect Google search behaviour directly; Gemini chat is a separate user session.

Claude and Copilot each drive under 1% of referral traffic today. Claude is used primarily for long-form work rather than product research, which limits referral generation. Copilot is embedded in Microsoft products and generates minimal standalone traffic.

The practical takeaway: optimise for ChatGPT first. Monitor whether Perplexity or Gemini gain share over time. Don't track AI visibility as one combined metric; you'll miss which platform is actually moving.
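To keep platforms separate in your own reporting, referral sessions can be bucketed by referrer hostname before any shares are computed. The following is a minimal sketch, not Erlin's method: the hostname list and the function names `classify_referrer` and `platform_share` are assumptions for illustration, and the hostnames should be checked against what actually appears in your analytics.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per platform; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(url: str) -> str:
    """Return the AI platform for a referrer URL, or 'Other' if none matches."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "Other")


def platform_share(referrers: list[str]) -> dict[str, float]:
    """Share of AI referral sessions per platform, ignoring non-AI referrers."""
    counts = Counter(classify_referrer(r) for r in referrers)
    counts.pop("Other", None)
    total = sum(counts.values())
    return {platform: n / total for platform, n in counts.items()} if total else {}
```

Reporting the result per platform, rather than summing it into one figure, is what lets you see whether Perplexity or Gemini is gaining share.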

Key AI Visibility Metrics to Track

There are five metrics worth tracking consistently. Each tells you something different about where you stand.

AI Visibility Score is your overall coverage percentage across tracked prompts and platforms. It answers the most basic question: what share of the time does AI surface my brand when buyers are researching my category? Erlin's data from 500+ brands shows that half of them score below 35% prompt coverage across the four major AI platforms. That's the baseline most brands are starting from.

Prompt Coverage measures how many of the specific prompts you track actually return your brand in the answer. Track high-intent, purchase-adjacent prompts, not generic informational queries. The gap between how you perform on branded queries versus category-level queries is often where the real problem lives.

Share of Voice compares your prompt coverage against your competitors on the same set of prompts. A visibility score of 40% sounds fine until you learn your main competitor scores 75% on the same prompts. Share of Voice makes the competitive stakes visible.

Citation Rate tracks how often your brand is not just mentioned but specifically cited as a source in AI responses. There's a difference between an AI response that references your brand by name and one that cites your content as its source. Citation rate is the stronger signal.

Sentiment tracks how AI platforms describe your brand when they do mention you. This is where errors hide. Erlin's data shows that unmonitored brands take 67 days on average to detect AI errors: inaccurate pricing, wrong product descriptions, and outdated positioning. Monitored brands catch them in 14 days.

All five of these are standard in Erlin's dashboard. You can also track them manually, which the next section covers.
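As a rough illustration of how three of these metrics relate, the sketch below computes prompt coverage, citation rate, and a per-brand coverage comparison (the basis for Share of Voice) from a list of recorded answers. The `PromptResult` record and all function names are hypothetical; Erlin's own scoring may be calculated differently.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One recorded AI answer (hypothetical structure for illustration)."""
    prompt: str
    platform: str
    brands_mentioned: set[str]  # brands named anywhere in the answer
    brands_cited: set[str]      # brands whose content is linked as a source


def prompt_coverage(results: list[PromptResult], brand: str) -> float:
    """Fraction of recorded answers that mention the brand."""
    if not results:
        return 0.0
    return sum(brand in r.brands_mentioned for r in results) / len(results)


def citation_rate(results: list[PromptResult], brand: str) -> float:
    """Fraction of recorded answers that cite the brand's content as a source."""
    if not results:
        return 0.0
    return sum(brand in r.brands_cited for r in results) / len(results)


def coverage_by_brand(results: list[PromptResult], brands: list[str]) -> dict[str, float]:
    """Prompt coverage for each brand on the same set of answers."""
    return {b: prompt_coverage(results, b) for b in brands}
```

Running `coverage_by_brand` over your brand and your competitors on the same prompt set gives the side-by-side view that a single visibility score hides.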

How to Track AI Visibility

There are two approaches: manual and automated. Manual tracking gives you a real feel for what AI actually says about your brand. Automated tracking makes it systematic, scalable, and actionable.

Manual Tracking

Start by building a prompt library. Write out 15–25 prompts that represent how a real buyer in your category would research a purchase decision.

For a SaaS brand, that might look like: "What's the best tool for [use case]?" or "Compare [your category] tools." For e-commerce, it might be product-type and specification-level queries.

Run those prompts in ChatGPT, Perplexity, Gemini, and Claude. Note whether your brand appears, what position it holds in the answer, how it's described, and which competitors are cited alongside you or instead of you.

This is useful for a one-time audit. It breaks down as an ongoing method because AI answers change constantly. High-traffic prompts churn at 23% month-over-month, according to Erlin's data. The snapshot you took last month may not reflect what buyers are seeing today.

Manual tracking also doesn't give you a structured way to act on what you find. You can see that you're missing from a set of prompts, but connecting that gap to a specific content fix requires another layer of analysis.
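If you do repeat a manual audit, comparing two snapshots gives a rough churn figure. This sketch assumes churn means the share of prompts whose set of named brands changed between runs; this article does not define the term precisely, and `churn_rate` is a hypothetical helper.

```python
def churn_rate(previous: dict[str, set[str]], current: dict[str, set[str]]) -> float:
    """Share of prompts (present in both snapshots) whose named brands changed.

    Each snapshot maps a prompt to the set of brands the AI answer named.
    """
    shared = previous.keys() & current.keys()
    if not shared:
        return 0.0
    changed = sum(1 for prompt in shared if previous[prompt] != current[prompt])
    return changed / len(shared)
```

Keeping each audit as a prompt-to-brands mapping also makes it easy to store snapshots in a spreadsheet or JSON file and compare them later.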

Automated Tracking with a Dedicated Tool

Automated tools run prompts on a schedule, track changes over time, benchmark against competitors, and surface where to act. Here's how to set it up using Erlin, one of the few tools designed to connect visibility tracking to content action rather than just monitoring.

Step 1: Choose your tracking platform

Look for a tool that integrates site audits, prompt libraries, competitor benchmarking, and sync with Google Analytics (GA) and Google Search Console (GSC). For teams that want to go from "here's where I'm missing" to "here's the content to fix it" without switching platforms, Erlin is built around that specific workflow.

Step 2: Sign up and enter your domain

Sign up at app.erlin.ai/get-started. Once your domain is entered, Erlin automatically captures brand context, pulls social signals, and prepares baseline insights in the background.

Step 3: Select competitors

Erlin suggests up to 5 competitors based on your domain and category. Accept, remove, or add competitors manually. Your AI visibility score means more when read against competitors than as a standalone number.

Step 4: Choose prompts to track

Erlin suggests high-intent prompts relevant to your category. These are queries AI platforms actually process when buyers are in research mode. You can add your own or adjust the suggestions based on how your buyers actually search.

Step 5: View your initial snapshot

After setup, you get an instant baseline: your AI Visibility Rank, Traffic Rank, and competitor comparison. This is your starting point and the number you measure all future changes against. Every optimisation decision you make from here on is relative to this number.

Step 6: Connect Google Analytics and Search Console

Linking GA and GSC unlocks AI vs. non-AI traffic breakdowns, conversion rate comparisons, and AI source distribution by platform. This is where the difference between AI traffic and everything else becomes visible for your brand specifically. The conversion difference tends to be significant; Erlin's client data shows AI traffic converting at 3–6x the rate of traditional organic traffic, a gap that only shows up when you're tracking both together.

Step 7: Explore the Prompts section

Under AI Visibility → Prompts, you see visibility trends and competitor comparisons at the individual prompt level. The Answer History tab shows actual text from recent ChatGPT or Perplexity responses: whether your brand was mentioned, whether it was cited, and what sources were referenced.

This is where tracking stops being a reporting exercise and becomes an input into what your content team works on next.
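Once analytics data is connected (Step 6), the AI versus organic conversion comparison reduces to simple arithmetic. The sketch below shows that calculation under the assumption that you can export session and conversion counts per traffic segment; the function names are hypothetical and the figures in the usage lines are made-up placeholders, not Erlin data.

```python
from typing import Optional


def conversion_rate(sessions: int, conversions: int) -> float:
    """Conversions per session; 0.0 when there are no sessions."""
    return conversions / sessions if sessions else 0.0


def ai_vs_organic_multiple(
    ai_sessions: int,
    ai_conversions: int,
    organic_sessions: int,
    organic_conversions: int,
) -> Optional[float]:
    """How many times higher the AI conversion rate is than the organic rate.

    Returns None when either rate is undefined or the organic rate is zero.
    """
    denominator = ai_sessions * organic_conversions
    if denominator == 0:
        return None
    # Equivalent to (ai_conversions / ai_sessions) / (organic_conversions / organic_sessions).
    return (ai_conversions * organic_sessions) / denominator


# Placeholder numbers for illustration only.
multiple = ai_vs_organic_multiple(
    ai_sessions=200, ai_conversions=12,
    organic_sessions=5000, organic_conversions=75,
)
```

A multiple above 1 means AI-referred sessions convert at a higher rate than organic ones in your own data, which is the comparison the 3–6x figure refers to.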

Understanding Your AI Visibility Tracker's Data

Getting the data is step one. The harder part is knowing what it's telling you and what to do about it.

Your overall AI Visibility Score tells you the size of the gap, not the cause. A score of 30% means you're appearing in 30% of tracked prompts. That's the outcome. The causes are almost always one of four things: low fact density, weak third-party validation, poor structured data, or stale content.

Erlin's research across 500+ brands identifies these four factors as explaining 89% of AI visibility variance. They're worth understanding individually because they point to different fixes.

Low fact density means AI doesn't have enough structured, extractable information about your brand to confidently include you. Brands with 0–2 verifiable facts score around 9% average AI coverage; brands with 9 or more score around 78%.

Adding structured, factual content, such as pricing, features, integrations, and use cases, is the lever with the most direct impact on coverage.

Weak third-party validation means AI has limited signals from sources outside your own website. Only 32% of AI citations come from brand-owned websites; 68% come from third-party sources like Reddit, Wikipedia, review platforms, and YouTube.

If those sources don't contain substantive, accurate information about your brand, owned content alone won't close the gap. Reddit discussions drive a 3.4x higher citation rate than owned content. Wikipedia drives 2.9x. Review platforms like G2 and Capterra drive 2.6x.

Poor structured data means AI can't parse your pages reliably. AI parsing success rates by format: static HTML with schema markup, 94%; plain HTML without schema, 68%; JavaScript-rendered content, 23%; PDF documents, 7%.

If your most important pages are JavaScript-heavy or have no schema markup, you're invisible to AI at the parsing stage, regardless of how good your content is. Adding comparison tables, FAQ schema, and an llms.txt file typically drives a 28–34% coverage lift within 14–21 days.
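For the FAQ schema piece, the markup is a schema.org `FAQPage` object serialised as JSON-LD. The following is a minimal sketch of generating it from question and answer pairs; the helper name `faq_jsonld` is hypothetical, and how you embed the output in your pages depends on your CMS.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    ("What's the difference between AI visibility and SEO?",
     "SEO tracks ranking position; AI visibility tracks citation rate in AI-generated answers."),
])
```

The resulting string is typically placed inside a `<script type="application/ld+json">` tag in static HTML, so it is present without JavaScript rendering.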

Stale content means AI confidence scores decay over time. Brands updating content monthly see approximately 23% higher AI coverage than those with stale content.

The penalty compounds: brands lose roughly 1.8% AI coverage per month when content isn't refreshed. Content older than 24 months averages 18% coverage. Fresh content under three months averages 48%.
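To get a feel for what a 1.8% monthly loss adds up to, the sketch below projects coverage forward. It assumes the loss is relative to the current coverage and compounds monthly, which is one reading of the figure above; if the loss is meant as flat percentage points, the projection would be linear instead. `projected_coverage` is a hypothetical helper.

```python
MONTHLY_DECAY = 0.018  # roughly 1.8% per month, taken from the figure above


def projected_coverage(
    current_pct: float, months: int, monthly_decay: float = MONTHLY_DECAY
) -> float:
    """Coverage (in percent) after `months` without a refresh, compounding monthly.

    Assumes each month removes `monthly_decay` of whatever coverage remains.
    """
    return current_pct * (1 - monthly_decay) ** months
```

Calling `projected_coverage(48.0, 12)` estimates where a page starting at 48% coverage would sit after a year without updates, under that assumption.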

When you look at your AI visibility data, the prompt-level view is what connects these findings to specific actions. A prompt where you score well tells you what's working, the sources AI is pulling from, and the structure of the content it's citing.

A prompt where a competitor scores well and you don't is more instructive: it shows you exactly what AI considers authoritative for that question. That gap is where your next content brief comes from.
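Finding those gaps can be sketched as a simple filter over recorded answers. This assumes each prompt maps to the set of brands named in its answer; `gap_prompts` is a hypothetical helper, not a feature of any specific tool.

```python
def gap_prompts(answers: dict[str, set[str]], brand: str, competitor: str) -> list[str]:
    """Prompts where the competitor is named in the answer and the brand is not."""
    return sorted(
        prompt
        for prompt, brands in answers.items()
        if competitor in brands and brand not in brands
    )
```

Each prompt in the returned list is a candidate for a content brief: review what the AI cited for the competitor on that question and compare it with what you have published.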

The monitoring function is just as important as the initial audit. AI responses change constantly. A citation you held last month can disappear if a competitor publishes something more structured, if a Reddit thread shifts the sentiment around your brand, or if your content ages past the threshold where AI treats it as current.

Tracking AI visibility isn't a one-time project. It's the same kind of ongoing infrastructure as Google Search Console: the baseline measurement that tells you whether your content programme is working.

Frequently Asked Questions

Does ranking on page one of Google mean I'll appear in AI answers?

No. Google rankings and AI citations have a weak correlation. AI platforms weigh entity clarity, content freshness, and third-party validation differently from how Google's algorithm works. A brand can rank first on Google and not appear in ChatGPT's answer to the same query. Treat AI visibility as a separate channel with its own measurement and optimisation logic.

How many prompts should I track to get a reliable AI visibility score?

Track at minimum 20–30 high-intent, purchase-adjacent prompts for your category. Volume matters less than relevance: the prompts should reflect how buyers in research mode actually talk about the problem your product solves. Erlin suggests prompts based on your domain and category, which is a faster way to build an accurate prompt library than starting from scratch.

How long does it take to see changes in AI visibility after making content updates?

Structured data changes (adding comparison tables, FAQ schema, or an llms.txt file) typically show measurable coverage lift within 14–21 days. Content freshness improvements take roughly the same time. Third-party validation (building Reddit presence, review platform coverage) takes longer: typically 30–60 days before the signal registers in AI citation patterns.

What's the difference between AI visibility and SEO?

SEO tracks ranking position in Google's results. AI visibility tracks citation rate in AI-generated answers. SEO is position-based; AI visibility is binary per prompt: you're either in the answer or you're not. The two channels use different signals, respond to different optimisation actions, and require separate measurement. Brands that treat AI visibility as an extension of their SEO programme miss the gap.

Can smaller brands realistically compete with larger ones in AI search?

Yes. Erlin's analysis of 500+ brands shows that smaller brands with strong entity context and structured data regularly outperform larger competitors in specific query categories. AI doesn't default to the biggest brand. It defaults to the most clearly defined one. A focused, well-structured brand with an active third-party presence can consistently outperform a Fortune 500 company on category-specific prompts.

What's the cost of not monitoring AI visibility?

Unmonitored brands take 67 days on average to detect errors in AI responses: wrong pricing, outdated features, inaccurate descriptions. Monitored brands catch them in 14 days. Beyond error detection, brands not tracking lose roughly 1.8% AI coverage per month to content staleness alone. The gap between AI visibility leaders and laggards is currently 9x and widening at 3.2% per month.

Start Your AI Visibility Audit

Get your AI Visibility Score across ChatGPT, Perplexity, Gemini, and Claude, and see exactly where you're missing against competitors.
