AI Visibility Score: What Is It & How to Check Yours

According to Erlin's 2026 State of AI Search report, 67% of marketing leaders don't know how to measure their AI visibility, and 58% say no one on their team owns it.

Meanwhile, AI search traffic converts 3–6x better than traditional channels, according to the same report. Every month without a baseline is a month of guessing.

This article explains what an AI visibility score is, how it's calculated, what affects it, and how to check yours.

What is an AI visibility score?

An AI visibility score is a composite metric that measures how consistently and favorably your brand appears in AI-generated answers.

When someone asks Perplexity, "Compare HubSpot vs Salesforce," the AI system pulls from a pool of sources and returns a small set of brands, usually two to four. Whether your brand makes that list, how often it does, and how it's described is what the AI visibility score tracks.

Unlike SEO rankings, which operate on a spectrum, AI visibility is closer to binary per query: you're either in the answer or you're not. Brands with high scores show up consistently. Brands with low scores appear occasionally or not at all.

What an AI visibility score looks like in practice

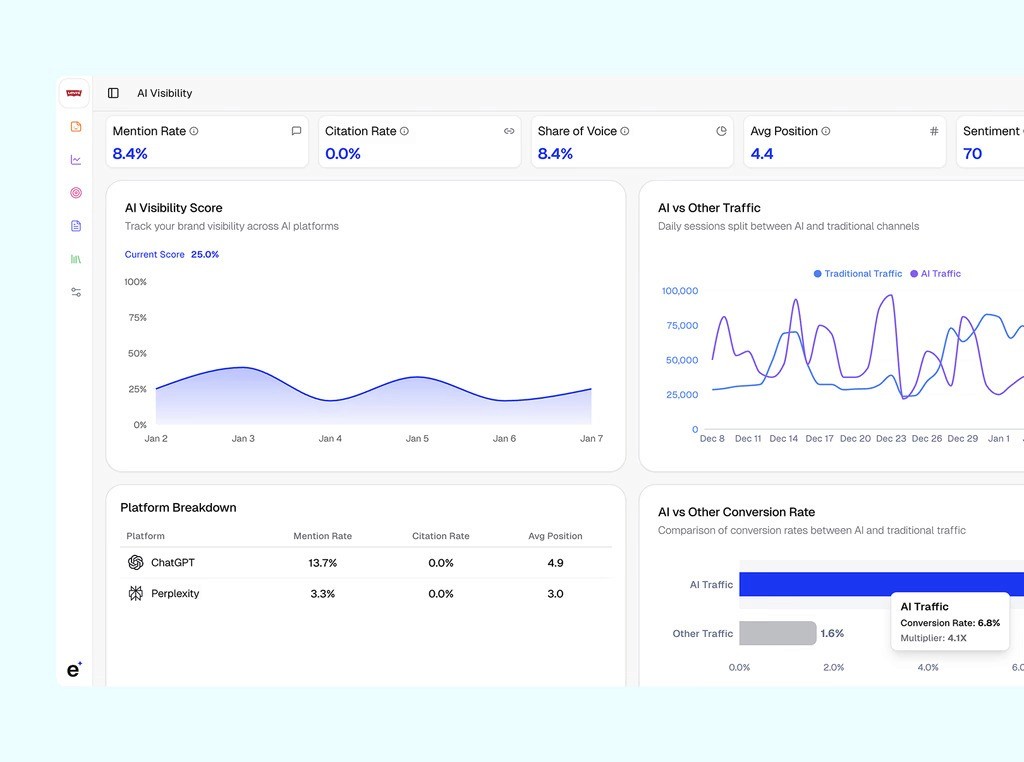

The screenshot above, from Erlin's dashboard, makes this concrete. The metrics across the top give you your headline numbers at a glance: Mention Rate (8.4%), Citation Rate (0.0%), Share of Voice (8.4%), Average Position (4.4), and Sentiment (70).

The AI Visibility Score chart shows the brand sitting at 25.0% over a week-long window, meaning it appeared in about one in four relevant AI-generated answers.

That's your baseline. The "AI vs Other Traffic" panel shows where sessions are actually coming from, and the Platform Breakdown table splits performance by platform: ChatGPT at 13.7% mention rate and 4.9 average position, Perplexity at 3.3% and 3.0.

What's useful about this view is how little you need to interpret. If your mention rate is 8% and a competitor's is 22%, you know where you stand.

The score doesn't explain why (that's what the platform's opportunity analysis is for), but it tells you clearly that there's a gap worth closing.

The metrics that matter now

The AI visibility score is an aggregate, which means it's worth understanding what goes into it. These are the individual metrics that matter in 2026.

Mention rate tracks how often your brand appears by name in AI-generated answers. If you run 100 relevant queries and your brand shows up in 8 of the responses, your mention rate is 8%. It's the clearest signal of raw presence.

Citation rate is narrower: it measures how often AI platforms link out to or explicitly source your content. A mention means the AI named you. A citation means it's treating your content as a reference. Citation rate tends to be lower, and it's often what separates brands that get traffic from AI search versus brands that just get mentioned.
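To make the difference between the two rates concrete, here is a minimal Python sketch. The `Response` record, the brand names, and the `.example` URLs are made up for illustration, and a real tracker would likely use more robust matching than a simple substring check.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One AI answer to a tracked prompt (hypothetical record shape)."""
    text: str
    cited_urls: list[str]


def mention_rate(responses, brand):
    """Percent of responses that name the brand (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r.text.lower() for r in responses)
    return 100.0 * hits / len(responses)


def citation_rate(responses, domain):
    """Percent of responses that link to at least one URL on the brand's domain."""
    if not responses:
        return 0.0
    hits = sum(any(domain in url for url in r.cited_urls) for r in responses)
    return 100.0 * hits / len(responses)


# Illustrative data only: four answers, two name Acme, one links to it.
responses = [
    Response("Acme and Globex are popular options.", ["https://acme.example/pricing"]),
    Response("Globex is a common choice.", []),
    Response("Consider Acme for small teams.", []),
    Response("Initech leads this category.", ["https://initech.example"]),
]

print(mention_rate(responses, "Acme"))           # 50.0
print(citation_rate(responses, "acme.example"))  # 25.0
```

In this toy data the brand is mentioned twice as often as it is cited, which is the pattern the paragraph above describes.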

Share of voice puts your numbers in a competitive context. The formula is simple: your mentions divided by total industry mentions times 100. If your brand is mentioned 18 times across 100 relevant responses and competitors are mentioned a combined 82 times, your AI share of voice is 18%. This is arguably the most actionable metric because it tells you not just where you are, but who's winning the space you're competing for.
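The share of voice formula is easy to check in code. This is a small sketch that assumes "total industry mentions" means your mentions plus all competitor mentions, which is how the 18% example above works out; the function name is illustrative.

```python
def share_of_voice(brand_mentions: int, competitor_mentions: int) -> float:
    """Brand mentions as a percentage of all tracked mentions (brand + competitors)."""
    total = brand_mentions + competitor_mentions
    if total == 0:
        return 0.0  # nothing was mentioned, so there is no voice to share
    return 100.0 * brand_mentions / total


# The example from the text: 18 brand mentions, 82 combined competitor mentions.
print(share_of_voice(18, 82))  # 18.0
```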

Average position tracks where in the AI response your brand typically appears. First mention carries more weight than being buried in a list of six. Some tracking tools weight this into the overall score; others report it separately.
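One simple way to think about average position is to rank brands by where they first appear in each answer, then average your rank across the answers that mention you. The sketch below does that. It is an assumption about how position could be defined, not a description of how any particular tool calculates it, and the brand names are placeholders.

```python
def brand_position(text, brand, all_brands):
    """1-based rank of `brand` by first appearance among `all_brands`, or None if absent."""
    lowered = text.lower()
    first_seen = {b: lowered.find(b.lower()) for b in all_brands}
    # Keep only brands that appear, ordered by the index of their first mention.
    ordered = [b for _, b in sorted((i, b) for b, i in first_seen.items() if i >= 0)]
    return ordered.index(brand) + 1 if brand in ordered else None


def average_position(texts, brand, all_brands):
    """Mean position across the answers that mention the brand, or None if there are none."""
    positions = [brand_position(t, brand, all_brands) for t in texts]
    found = [p for p in positions if p is not None]
    return sum(found) / len(found) if found else None


brands = ["Acme", "Globex", "Initech"]
answers = [
    "Globex, Acme, and Initech are all options.",  # Acme is 2nd
    "Acme is the top pick, followed by Globex.",   # Acme is 1st
    "Initech is popular.",                         # Acme is absent
]
print(average_position(answers, "Acme", brands))  # 1.5
```

Answers that don't mention the brand are excluded from the average here; that absence shows up in mention rate instead.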

Sentiment score captures how AI describes your brand when it does mention you. A brand mentioned frequently but described as "expensive" or "limited" has a different challenge than one with positive, specific framing. Sentiment gaps often reveal content or reputation issues that raw mention counts don't surface.

Together, these five metrics paint a complete picture of AI search performance. Tracking one in isolation gives you an incomplete view.

How the AI visibility score is measured

AI visibility trackers operate like rank trackers, but for generative AI. They query LLMs with relevant prompts to detect brand appearances. Here's what the process looks like:

Define target prompts based on user intents (e.g., "best [product] for [use case]") grouped by topics or buyer stages.

Run prompts repeatedly across AI platforms (ChatGPT, Perplexity, Gemini, Copilot) to capture variability in responses.

Analyze responses for metrics like mention frequency, position, citations, sentiment, and query breadth.

Normalize and weight components into a composite score (often 0-100), then benchmark against competitors for share of voice.

Update daily or weekly. Filter hallucinations via cross-validation and track trends over time.
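The steps above can be sketched as a small loop. This is a simplified illustration rather than any vendor's implementation: `ask` is a hypothetical callable standing in for a real API client, `fake_ask` returns canned text so the example runs offline, and the prompts and brand names are invented.

```python
from collections import defaultdict
from typing import Callable


def track_mentions(prompts, platforms, brand, ask: Callable[[str, str], str], runs=3):
    """Run every prompt `runs` times per platform and return mention rate (%) per platform."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for platform in platforms:
        for prompt in prompts:
            for _ in range(runs):  # repeat to capture response variability
                answer = ask(platform, prompt)
                totals[platform] += 1
                if brand.lower() in answer.lower():
                    hits[platform] += 1
    return {p: 100.0 * hits[p] / totals[p] for p in platforms if totals[p]}


def fake_ask(platform, prompt):
    """Stand-in for a real model call; returns a fixed answer per platform."""
    canned = {
        "chatgpt": "Acme and Globex are solid choices.",
        "perplexity": "Globex is widely used.",
    }
    return canned[platform]


rates = track_mentions(
    prompts=["best crm for startups", "crm with email automation"],
    platforms=["chatgpt", "perplexity"],
    brand="Acme",
    ask=fake_ask,
    runs=2,
)
print(rates)  # {'chatgpt': 100.0, 'perplexity': 0.0}
```

Passing `ask` in as a parameter keeps the counting logic separate from whichever platform clients you end up using.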

Common Score Components

Most trackers use weighted factors normalized per industry.

| Component | Weight Example | Description |
| --- | --- | --- |
| Mention Frequency | 40% | How often the brand appears across queries, adjusted for response length. |
| Position & Prominence | 25% | Placement (e.g., top recommendation scores higher than footnotes). |
| Citation Quality | 20% | Authority of sources cited (e.g., official sites over forums). |
| Query Coverage | 15% | Breadth across diverse prompts and intents. |
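Here is how a weighted roll-up along these lines might look in Python. The weights are the example weights from the table, not a standard; the component scores are invented, and the sketch assumes each component has already been normalized to a 0-100 scale.

```python
# Example weights from the table above (they sum to 1.0).
WEIGHTS = {
    "mention_frequency": 0.40,
    "position_prominence": 0.25,
    "citation_quality": 0.20,
    "query_coverage": 0.15,
}


def composite_score(components):
    """Weighted sum of component scores, each assumed to be on a 0-100 scale."""
    missing = WEIGHTS.keys() - components.keys()
    if missing:
        raise ValueError(f"missing components: {sorted(missing)}")
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS)


# Made-up component scores for illustration.
score = composite_score({
    "mention_frequency": 30,
    "position_prominence": 50,
    "citation_quality": 10,
    "query_coverage": 40,
})
print(round(score, 1))  # 32.5
```

Because mention frequency carries the largest weight in this example, a brand with strong placement but few mentions still ends up with a modest composite.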

A tool like Erlin runs thousands of purchase-intent prompts across ChatGPT, Perplexity, Gemini, and Claude. For each prompt, it captures: which brands were mentioned, in what order, with what framing, and whether content was linked or cited.

That raw data gets aggregated into scores for each metric, then rolled up into the overall AI visibility score.

The score itself is typically expressed on a 0–100 scale. Erlin's research defines the maturity tiers this way:

AI Invisible (under 20%): minimal appearance in AI answers; limited structured data and weak citation signals

AI Fragile (20–40%): inconsistent inclusion, concentrated in a narrow set of queries

AI Present (40–60%): regular appearance in core category queries; structured content in place

AI Preferred (60–80%): consistent inclusion in high-intent and comparison queries; strong authority signals

AI Dominant (80%+): frequent top inclusion across core and adjacent categories; sustained citation authority
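If you want to bucket scores programmatically, the tiers map to a simple lookup. The ranges as listed share their boundary values (for example, 40% appears in two tiers), so this sketch assumes each tier includes its lower bound and excludes its upper bound. The function name is illustrative.

```python
def maturity_tier(score: float) -> str:
    """Map a 0-100 visibility score to a tier, treating lower bounds as inclusive."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 20:
        return "AI Invisible"
    if score < 40:
        return "AI Fragile"
    if score < 60:
        return "AI Present"
    if score < 80:
        return "AI Preferred"
    return "AI Dominant"


print(maturity_tier(25.0))  # AI Fragile
```

The 25.0% score from the dashboard example earlier falls in the Fragile tier under this reading.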

Most brands that have never optimized for AI search land in the Invisible or Fragile tier. The prompt library matters as much as the measurement itself.

Erlin's platform suggests high-intent prompts relevant to your category: queries that mirror what buyers actually type when they're in research mode, not generic brand awareness questions.

Factors that affect your AI visibility score

Content quality and fact density

AI systems don't evaluate a brand holistically. They evaluate specific factual claims they can extract, verify, and reuse in an answer.

Erlin's analysis of 500+ brands found that brands with 9+ structured facts in their content (pricing, features, use cases, technical specs, comparison data) achieve 78% AI coverage. Brands with 0–2 facts achieve just 9%.

Marketing language doesn't help. Claims like "industry-leading platform" or "the most innovative solution" give AI nothing to work with. Specific, extractable facts, such as integration counts, pricing tiers, setup time, and customer outcomes, are what get cited.

Entity coverage and clarity

AI systems need to understand who you are before they can recommend you. Entity coverage refers to how clearly and consistently your brand identity is defined across the web: your website, Wikipedia, third-party reviews, press mentions, and social profiles. Inconsistent or incomplete signals lead to omission.

This is different from SEO. You don't need the most backlinks. You need the clearest signal. Erlin's data found that smaller brands with strong entity context routinely outperform Fortune 500 companies in specific query categories. AI doesn't default to the biggest brand. It defaults to the clearest one.

Structured data and technical signals

How your content is formatted affects whether AI can read it at all. Erlin's research shows that static HTML with schema markup achieves a 94% AI parsing success rate. JavaScript-rendered content drops to 23%. PDFs land at 7%.
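A quick way to sanity-check a page is to see whether a key fact is present in the raw HTML before any JavaScript runs. The sketch below uses Python's standard `html.parser` for that. The sample pages and the `$29/month` fact are invented, and this is only a rough proxy for what an AI crawler can read, not a reproduction of how any crawler works.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def in_static_html(html: str, phrase: str) -> bool:
    """True if `phrase` appears in the page text without executing any JavaScript."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()


static_page = "<html><body><h1>Pricing</h1><p>Plans start at $29/month.</p></body></html>"
js_page = (
    '<html><body><div id="root"></div>'
    '<script>render("Plans start at $29/month.")</script></body></html>'
)

print(in_static_html(static_page, "$29/month"))  # True
print(in_static_html(js_page, "$29/month"))      # False
```

In the second page the price only exists inside a script, so a reader that doesn't execute JavaScript never sees it.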

Three specific formats show consistent coverage lifts: comparison tables (about 34% higher coverage within two weeks), llms.txt files (32% lift), and FAQ schema markup (28% lift over three weeks). These aren't cosmetic improvements; they directly affect whether AI can extract your content as a citable source.

Citation history and momentum

Third-party validation is the biggest lever most brands aren't using. Erlin's research found that third-party sources drive 68% of all AI citations. Reddit discussions carry a 3.4x citation lift over owned content alone. Wikipedia articles carry 2.9x. Review platforms and affiliates come in at 2.6x.

Your own website content is the baseline. Third-party validation is what separates brands that appear occasionally from brands that appear consistently.

Content freshness

AI systems continuously re-evaluate brand information for recency. Brands that update content monthly maintain roughly 23% higher AI representation than those that don't. Content under 3 months old has 48% average coverage. Content older than 24 months drops to 18%.

The staleness penalty is estimated at about 1.8% AI coverage per month of inactivity. That's not catastrophic month to month, but it compounds.
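To get a feel for how that adds up, here is a rough projection. The report figure doesn't say whether 1.8% means percentage points lost per month or a relative decline, so the sketch shows both readings as assumptions. The 48% starting point borrows the fresh-content figure above purely as an illustration.

```python
def coverage_after(months, start=48.0, monthly_loss=1.8, relative=False):
    """Projected coverage (%) after `months` of inactivity under one of two assumptions."""
    if relative:
        # Assumption B: lose 1.8% of the current coverage each month (compounding).
        return start * (1 - monthly_loss / 100) ** months
    # Assumption A: lose a flat 1.8 percentage points each month, floored at zero.
    return max(0.0, start - monthly_loss * months)


print(round(coverage_after(12), 1))                 # 26.4
print(round(coverage_after(12, relative=True), 1))  # 38.6
```

Either way, a year of inactivity costs a meaningful share of coverage, even though a single month barely registers.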

Competitive landscape

AI typically cites 2–3 brands per response, which creates a winner-take-most dynamic. Your score isn't static. It shifts as competitors improve their visibility.

Monitoring share of voice against specific competitors matters because a rising competitor in your category can suppress your appearances even if your absolute content quality hasn't changed.

How to check your brand's AI visibility score

The process is quicker than you'd think. Here's how it works using Erlin, one of the few tools that tie visibility tracking directly to content action rather than just monitoring.

Step 1: Pick your tracking platform

Look for a tool that covers multiple AI platforms at once, supports competitor benchmarking, integrates with GA/GSC, and includes a prompt library for your category.

For teams that want to move from "here's where I'm falling short" to "here's how to fix it" without jumping between tools, Erlin is built exactly for that. The gap analysis flows straight into an action center.

Step 2: Sign up and add your domain

Head to app.erlin.ai/get-started. Once you enter your domain, Erlin automatically pulls brand context, gathers social signals, and builds baseline insights in the background. No manual configuration needed.

Step 3: Add your competitors

Erlin recommends up to five competitors based on your domain and category. Keep the suggestions, replace them, or add your own. Your AI visibility score is most useful in context: knowing you're at 25% hits differently when your closest competitor is sitting at 61%.

Step 4: Select the prompts you want to track

Erlin surfaces high-intent prompts relevant to your category: the kinds of queries buyers actually use when they're actively researching. You can refine the list, add industry-specific terms, or zero in on the prompts where beating competitors matters most.

Step 5: Review your baseline snapshot

Once setup is complete, you get an instant read: your AI Visibility Score, Traffic Rank, and a side-by-side competitor comparison. The dashboard breaks down platform-level data by mention rate, citation rate, and average position across AI platforms like ChatGPT and Perplexity.

The "AI vs Other Traffic" panel tracks how AI referral traffic stacks up against traditional sessions over time. The "AI vs Other Conversion Rate" panel tends to be where eyebrows go up. AI traffic converting at 4.6% compared to 0.6% from other channels is a pattern Erlin sees consistently.

This is your baseline. Every future change, whether it's a content update, schema fix, or citation campaign, gets measured against it.

How to improve your AI visibility score

1. Audit your current AI presence

Before optimizing anything, understand what AI is already saying about your brand. Erlin offers a free AI visibility audit that shows how AI platforms currently describe your brand, what they get wrong, and where you're absent from conversations where competitors appear.

Run the brand audit checklist from Erlin's report on:

Fact density (pricing accessible? features in scannable formats? competitive positioning explicit?)

Third-party validation (reviews on major platforms? Reddit presence? Wikipedia mentions?)

Structured data (llms.txt file? FAQ schema? schema.org markup?)

Each "no" is a concrete gap with an estimated coverage impact.

2. Identify citation gaps

Pull your competitor comparison data and look for specific prompts where competitors appear and you don't. Those are your highest-priority gaps: the queries where buyer intent is high, and you're currently invisible.

Citation gaps usually fall into two buckets: content gaps (you don't have content that addresses this query) and structural gaps (you have content, but AI can't parse or surface it). They require different fixes.
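If you can export which brands appear for each tracked prompt, finding these gaps is a simple filter. The sketch below assumes a hypothetical export shaped as a mapping from prompt to the set of brands named in the answers; the prompts and brand names are placeholders.

```python
def citation_gaps(appearances, brand):
    """Prompts where at least one competitor appears and `brand` does not."""
    return sorted(
        prompt
        for prompt, brands in appearances.items()
        if brands and brand not in brands
    )


# Hypothetical export: prompt -> brands named in AI answers for that prompt.
appearances = {
    "best crm for startups": {"Acme", "Globex"},
    "crm with email automation": {"Globex", "Initech"},
    "affordable crm for agencies": {"Initech"},
    "crm for nonprofits": set(),
}

print(citation_gaps(appearances, "Acme"))
# ['affordable crm for agencies', 'crm with email automation']
```

This only surfaces where the gaps are. Deciding whether each one is a content gap or a structural gap still takes a look at the pages involved.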

3. Create entity-rich content

Rewrite key pages around extractable facts. Every page where AI might pull a response should have: specific pricing or pricing ranges, clear feature descriptions with technical details, use cases tied to real scenarios, and comparison information that makes your differentiation explicit.

If your homepage says "powerful, flexible platform," that's not extractable. If it says "integrates with 200+ tools, setup under 30 minutes, used by 5,000+ teams," that is.
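As a rough first pass, you can count the numeric claims in a piece of copy. This is a crude heuristic of our own for illustration, not how AI systems or Erlin measure fact density: it only spots numbers, so named features or integrations without a figure attached won't register.

```python
import re

# Matches figures such as "200+", "30", "5,000+", "$49", or "12.5%".
FACT_PATTERN = re.compile(r"\$?\d[\d,]*(?:\.\d+)?\+?%?")


def count_numeric_facts(copy: str) -> int:
    """Count number-like tokens as a rough proxy for extractable facts."""
    return len(FACT_PATTERN.findall(copy))


vague = "powerful, flexible platform"
specific = "integrates with 200+ tools, setup under 30 minutes, used by 5,000+ teams"

print(count_numeric_facts(vague))     # 0
print(count_numeric_facts(specific))  # 3
```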

4. Optimize technical signals

Implement FAQ schema on question-based pages. Add an llms.txt file that summarizes your brand's key facts in a format AI crawlers can read easily. Make sure your most important content (pricing, features, comparison pages) is available in static HTML, not JavaScript-rendered. Check that schema.org Product or Organization markup is in place on key pages.
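For the FAQ schema piece, the markup is a JSON-LD object using schema.org's `FAQPage`, `Question`, and `Answer` types. Here's a small Python sketch that builds it from question-and-answer pairs. The helper name and the sample answers are illustrative; the output would go inside a `<script type="application/ld+json">` tag on the page.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Sample content only; replace with the real questions from your page.
markup = faq_jsonld([
    ("How long does setup take?", "Setup takes under 30 minutes."),
    ("How many tools does it integrate with?", "It integrates with 200+ tools."),
])

print(json.dumps(markup, indent=2))
```

Keeping the answers short and factual here lines up with the fact-density advice in the previous step.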

Erlin's data suggests that addressing all five structural signals moves brands from 23–35% coverage to 60–80% coverage. That's not a marginal improvement.

5. Build authoritative third-party validation

This takes longer than the technical fixes, but it has the highest impact. Pursue verified reviews on G2, Capterra, and industry platforms. Get into relevant Reddit discussions with genuinely helpful, non-promotional answers to questions in your category. Aim for coverage in industry publications and blogs that AI systems treat as authoritative sources.

Erlin tracks citation shifts across third-party sources and alerts teams when brand mentions or AI citation positions change, so you can see the impact of this work in near-real-time rather than waiting for quarterly reviews.

6. Monitor and iterate

AI visibility decays without maintenance. Brands that go silent lose roughly 1.8% AI coverage per month. Monitored brands catch errors in about 14 days; unmonitored brands take an average of 67 days, by which point the damage is compounding.

Erlin's platform runs continuous prompt tracking and flags when citation positions shift, content goes stale, or a competitor surges. That's the difference between managing AI visibility as a channel and discovering six months later that you've lost ground you can't easily recover.

Frequently asked questions

What is an AI visibility score?

An AI visibility score is a composite metric, typically expressed on a 0–100 or percentage scale, that measures how often and how favorably your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, Gemini, and Claude. It aggregates mention rate, citation rate, share of voice, average position, and sentiment into a single number you can track over time.

How is an AI visibility score different from SEO rankings?

SEO rankings measure where a page appears in a list of results. AI visibility measures whether your brand is recommended inside a synthesized answer. There's no list, just the brands AI decides to include. Google ranking and AI citation have a weak correlation, according to Erlin's tracking of 500+ brands. A brand can rank first on Google for a query and still not appear in ChatGPT's answer to the same question.

How often should I track my AI visibility score?

Monthly is the minimum for most brands. Weekly tracking is worth the effort if you're actively running content or technical improvements and want to see what's moving your score. Erlin runs continuous tracking and surfaces changes automatically, so you don't need to manually check dashboards.

Can smaller brands realistically compete in AI search?

Yes. And this is one of the more interesting things about AI search versus traditional SEO. Erlin's analysis found that smaller brands with strong entity context and structured data regularly outperform larger competitors in specific query categories.

How long does it take to see improvement in AI visibility?

Most brands see measurable movement within 60–90 days of implementing structural changes (structured data, fact-dense content, schema fixes). Citation-based improvements from third-party validation compound more slowly, typically taking 4–6 months before the pattern becomes clear. The brands that get there fastest treat AI visibility the same way they'd treat any other measurable channel: with a baseline, a hypothesis, and a feedback loop.

What's a good AI visibility score to aim for?

Erlin's maturity model puts brands in five tiers based on coverage percentage. Getting from "AI Invisible" (under 20%) to "AI Present" (40–60%) is achievable for most brands within a quarter of focused effort. Reaching "AI Preferred" (60–80%) takes sustained work across content, technical, and third-party signals. Only about 15% of brands currently reach the top tier "AI Dominant" at 80%+ coverage, and they tend to have a head start.

How do I start tracking my AI visibility score?

The fastest starting point is Erlin's free AI visibility audit. Setup takes minutes: enter your domain, select competitors, choose prompts to track, and get your initial baseline across AI Visibility Score, Traffic Rank, and competitive comparison. That baseline is your starting number against which everything else gets measured.
