AI Visibility Analytics: The Metrics for AI Search Success (2026)

You ranked #1 on Google for three years. Then traffic started dropping, quietly, gradually.

Not because your rankings slipped. They didn't. A growing share of your potential buyers stopped clicking search results altogether. They asked ChatGPT instead.

This is the disruption most marketing teams are still catching up to. The brands showing up inside those AI answers are playing by completely different rules than the ones your SEO dashboard was built to track.

AI visibility analytics is what helps you understand those rules and figure out whether you're winning or losing by them.

What Is AI Visibility Analytics?

AI visibility analytics tracks how often your brand is cited, mentioned, or recommended in AI-generated answers across platforms like ChatGPT, Perplexity, Gemini, and Claude.

It works like SEO analytics, but for a world where buyers ask ChatGPT "what's the best [product category] for [use case]" instead of Googling it.

Where traditional SEO analytics tracks keyword rankings, click-through rates, and organic traffic, AI visibility analytics tracks whether your brand appears in the answer at all.

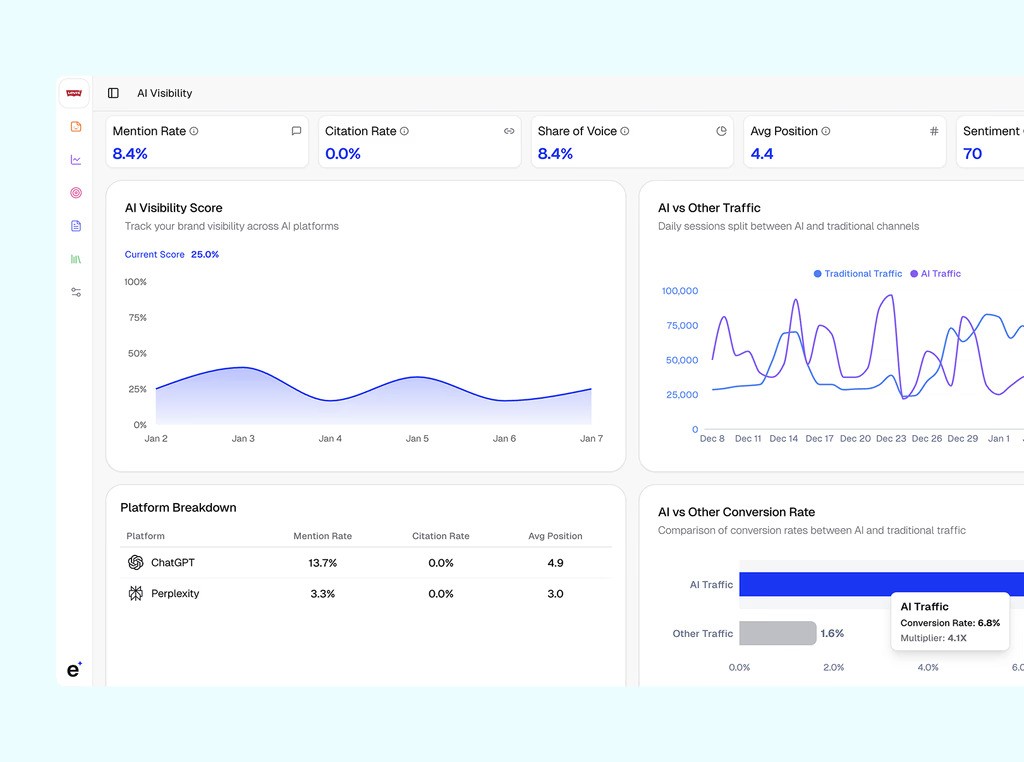

For example, an AI visibility analytics dashboard might show how often a brand appears across AI platforms and how that exposure changes over time. Erlin's analytics dashboard above illustrates this with a metric called the AI Visibility Score, which summarizes a brand's overall presence in AI-generated answers across tracked prompts and platforms.

In this example, the brand has a Visibility Score of 25%, meaning it shows up in roughly a quarter of the tracked prompts. The trend chart shows how that number shifts over time, which is useful for spotting whether the brand is becoming more or less present in AI-generated answers.

The dashboard puts the AI mention rate at 8.4%: roughly 8 in every 100 AI responses for the tracked prompts include this brand. Share of voice is also 8.4%, meaning the brand accounts for 8.4% of all brand mentions in those answers once competitors are counted.

Average ranking position comes in at 4.4, so when AI answers list companies, this brand typically appears around fourth. Sentiment sits at 70, a measure of how positive or negative its mentions are compared to competitors.

Why AI Visibility Tracking Matters for Your Business in 2026

44% of AI search users say it's their primary source for product discovery, exceeding traditional search at 31%. (McKinsey AI Discovery Survey, October 2025)

That's a different kind of buyer: someone who has already received a recommendation, already weighed their options, and clicks through ready to act. The research and the convincing happened before they ever saw your website.

Most marketing teams don't know whether they're getting those buyers. Erlin surveyed 200+ marketing leaders in 2026: 67% don't know how to measure their AI visibility, 58% say no one on their team owns it, and only 18% have an active AI visibility strategy (Erlin survey, 2026).

There is a measurable first-mover advantage here. Erlin's data shows the gap between AI visibility winners and losers is 9x today and is widening by 3.2% every month (Erlin data, 2026).

The cost of inaction is equally measurable. Brands lose roughly 1.8% AI coverage per month when their content isn't refreshed (Erlin data, 2026). Small in isolation. Over a year, it's a significant gap between you and a competitor maintaining their content consistently.
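The figure doesn't say whether the 1.8% loss is in percentage points or relative to current coverage, and the two readings diverge over a year. A minimal sketch comparing both, assuming a hypothetical starting coverage of 50% (an illustrative figure, not Erlin data):

```python
def coverage_after(start: float, monthly_loss: float, months: int, compound: bool) -> float:
    """Project AI coverage (in percent) after `months` without content refreshes.

    compound=False: coverage drops by `monthly_loss` percentage points each month.
    compound=True:  coverage drops by `monthly_loss` percent of its current value each month.
    """
    if compound:
        return start * (1 - monthly_loss / 100) ** months
    return max(start - monthly_loss * months, 0.0)


# Hypothetical starting coverage of 50%, used only for illustration.
for compound in (False, True):
    label = "relative (compounding)" if compound else "percentage points (linear)"
    print(f"{label}: {coverage_after(50.0, 1.8, 12, compound):.1f}% after 12 months")
```

Under the linear reading, a brand starting at 50% coverage ends the year at 28.4%; under the compounding reading, at about 40.2%. Either way, the gap against a competitor who keeps refreshing content is substantial.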

There's also an indirect effect on branded search that often goes unmeasured. When ChatGPT mentions your brand in an answer, a portion of those users then search your name directly on Google.

AI doesn't always send a referral click, but it's influencing your organic funnel. Brands that track both together catch the correlation early.

The Real Challenges in Measuring AI Visibility

AI visibility measurement is still evolving. There's no Search Console equivalent for ChatGPT and no industry-wide benchmarks yet. Tools use different methodologies, which makes comparisons between tools unreliable. Three specific things to know before you start:

AI responses are probabilistic. Run the same prompt twice, and you may get different answers. Visibility data points toward trends, not fixed positions.

Attribution is incomplete. GA4 often misclassifies AI chatbot traffic as "direct" or as a generic referral. Custom channel groups and UTM parameters help, but they don't capture zero-click interactions: queries where the AI answered fully and the user never needed to visit any website.

Ownership is genuinely unclear. AI visibility sits at the intersection of SEO, content, brand, and growth, which means it often falls between all of them. Without a named owner and a regular review cadence, measurement doesn't compound into anything useful.

None of this means you can't measure AI visibility meaningfully today. It means treating the data as directional intelligence, pairing it with branded search volume and AI referral conversion rates, and not expecting it to behave like a stable SEO metric yet.
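Treating the data as directional starts with repeated sampling: run each prompt several times and report the mention rate with an uncertainty range rather than as a single number. A minimal sketch using the Wilson score interval, a standard interval for proportions; the choice of interval and the 95% level are assumptions here, not something the tools above prescribe:

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate estimated from repeated runs.

    z=1.96 gives an approximately 95% interval.
    """
    if runs <= 0 or not 0 <= mentions <= runs:
        raise ValueError("need runs > 0 and 0 <= mentions <= runs")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2))
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# A brand named in 3 of 10 runs of the same prompt.
low, high = wilson_interval(mentions=3, runs=10)
print(f"Observed 30%; plausible range {low:.0%} to {high:.0%}")
```

With ten runs, an observed 30% mention rate is compatible with anything from roughly 11% to 60%. More runs per prompt narrow that range.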

Key Metrics in AI Visibility Analytics

Not every number in an AI visibility dashboard deserves equal attention. Here's what actually matters.

AI Visibility Score

A composite metric, typically built around the percentage of high-intent prompts in your category where your brand appears. Erlin expresses it on a 0–100 scale, so a score of 66.7% means the brand appeared in roughly two-thirds of tracked queries. This is your north star metric: it tells you, in aggregate, how reliably you show up when buyers ask questions in your space.
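Erlin's exact formula isn't described here, and a composite score may weight more than simple presence. A minimal sketch of the simplest version, the share of tracked prompts where the brand appears, assuming one recorded answer per prompt and brand names already extracted from each answer (prompts and brands are hypothetical):

```python
def visibility_score(results: dict[str, set[str]], brand: str) -> float:
    """Percentage (0-100) of tracked prompts whose recorded AI answer names `brand`.

    `results` maps each tracked prompt to the set of brands named in its answer.
    """
    if not results:
        return 0.0
    hits = sum(1 for brands in results.values() if brand in brands)
    return 100 * hits / len(results)


# Hypothetical prompts and brands, for illustration only.
results = {
    "best crm for small agencies": {"Acme", "Globex"},
    "crm with built-in invoicing": {"Initech"},
    "acme vs globex": {"Acme", "Globex", "Initech"},
}
print(f"{visibility_score(results, 'Acme'):.1f}")
```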

Share of Voice

Your brand's mentions divided by total brand mentions (yours and competitors') across the same prompt set. If AI answers to your tracked prompts name brands 200 times in total and 60 of those mentions are yours, your share of voice is 30%. This puts your score in competitive context, which is usually more useful than an absolute number on its own.
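The difference between mention rate and share of voice is easiest to see with counts. A minimal sketch with hypothetical brand names, assuming the brands named in each answer have already been extracted:

```python
from collections import Counter


def share_of_voice(answers: list[list[str]], brand: str) -> float:
    """`brand`'s mentions as a percentage of all brand mentions across `answers`.

    Each inner list holds the brand names mentioned in one AI answer.
    """
    counts = Counter(name for answer in answers for name in answer)
    total = sum(counts.values())
    return 100 * counts[brand] / total if total else 0.0


# Hypothetical answers for one prompt set.
answers = [
    ["Acme", "Globex"],
    ["Globex", "Initech"],
    ["Acme", "Globex", "Initech", "Umbrella"],
]
mention_rate = 100 * sum("Acme" in a for a in answers) / len(answers)
print(f"Acme mention rate: {mention_rate:.1f}%")                       # appears in 2 of 3 answers
print(f"Acme share of voice: {share_of_voice(answers, 'Acme'):.1f}%")  # 2 of 8 brand mentions
```

Acme shows up in most answers but holds only a quarter of the conversation, because competitors are named alongside it.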

Mention Rate vs. Citation Rate

Related, but different. A mention means your brand name appeared in the AI's answer text. A citation means your website was linked as a source. Citation rates tend to be much lower, around 3.7% in Erlin's data, but they matter more for direct traffic. Mention rate is more useful for brand awareness and the indirect branded-search effect.
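In practice, the two checks look at different parts of a response. A minimal sketch, assuming you have the answer text and its list of source URLs (for example, copied from a tool's answer history); the brand and the `acme.example` domain are placeholders:

```python
import re
from urllib.parse import urlparse


def mentioned(answer_text: str, brand: str) -> bool:
    """True if the brand name appears as a whole word in the answer text."""
    return re.search(rf"\b{re.escape(brand)}\b", answer_text, re.IGNORECASE) is not None


def cited(source_urls: list[str], domain: str) -> bool:
    """True if any source URL points at `domain` or one of its subdomains."""
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


# Hypothetical answer and sources; acme.example stands in for your domain.
text = "For small teams, Acme and Globex are both popular choices."
sources = ["https://www.g2.com/categories/crm", "https://globex.example/pricing"]
print(mentioned(text, "Acme"), cited(sources, "acme.example"))  # mentioned, not cited
```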

Average Position

When your brand is mentioned, is it the first name in the answer or the fourth? Being mentioned first correlates with higher click probability and stronger brand association. Position tracking tells you whether you're getting cited as the primary recommendation or as an afterthought.
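Average position is usually computed only over the answers that actually name the brand. A minimal sketch, assuming the brands in each answer have been extracted in order of appearance (names are hypothetical):

```python
from typing import Optional


def average_position(ranked_answers: list[list[str]], brand: str) -> Optional[float]:
    """Mean 1-based position of `brand` across answers that name it.

    Each inner list holds brand names in the order the answer mentions them.
    Answers that omit the brand are skipped, so read this alongside mention rate.
    Returns None if the brand is never mentioned.
    """
    positions = [answer.index(brand) + 1 for answer in ranked_answers if brand in answer]
    return sum(positions) / len(positions) if positions else None


# Hypothetical ordered mentions from four answers.
ranked = [
    ["Globex", "Acme", "Initech"],
    ["Acme", "Globex"],
    ["Initech", "Umbrella"],
    ["Globex", "Initech", "Umbrella", "Acme"],
]
print(average_position(ranked, "Acme"))
```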

Sentiment

AI systems don't just name brands, they describe them. "Great for enterprise teams" and "works for basic use cases" are not the same recommendation. Sentiment tracking tells you how AI is framing your brand, which matters for conversions and for catching reputation issues before they compound.

AI Referral Conversion Rate

This requires connecting your AI visibility tool to GA4 or Google Search Console, but it's worth the setup. AI traffic converts at 4.4x the rate of traditional organic traffic (Erlin client data, 2026). That gap is why AI visibility deserves its own measurement infrastructure.
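Once AI referral sessions are separated from organic ones (for example, with a GA4 custom channel group), the comparison is the ratio of two conversion rates. A minimal sketch with hypothetical session and conversion counts, not Erlin's client data:

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions per session; 0.0 when there were no sessions."""
    return conversions / sessions if sessions else 0.0


def conversion_multiple(ai_conversions: int, ai_sessions: int,
                        organic_conversions: int, organic_sessions: int) -> float:
    """How many times higher the AI-referral conversion rate is than organic's."""
    organic = conversion_rate(organic_conversions, organic_sessions)
    if organic == 0:
        raise ValueError("organic conversion rate is zero; the ratio is undefined")
    return conversion_rate(ai_conversions, ai_sessions) / organic


# Hypothetical counts for one month, chosen to illustrate a 4.4x gap.
print(f"{conversion_multiple(44, 1_000, 100, 10_000):.1f}x")
```

Small AI session counts make this ratio volatile, so it's worth measuring over a window long enough to collect a meaningful number of conversions.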

Platform Breakdown

Erlin's analysis of referral sessions across platforms found ChatGPT drives the overwhelming majority of AI referral traffic. That concentration means optimizing for ChatGPT is the priority for most brands right now, while monitoring whether other platforms gain meaningful share over time.

How to Set Up AI Visibility Analytics Tracking

The setup is faster than most people expect. Here's how it works using Erlin, one of the few tools designed to connect visibility tracking to content action, rather than just monitoring.

Step 1: Choose your tracking platform

Look for a tool that integrates site audits, prompt libraries, competitor benchmarking, and GA/GSC sync. For teams that want to go from "here's where I'm missing" to "here's the content to fix it" without switching platforms, Erlin is built around that specific workflow.

Step 2: Sign up and enter your domain

Sign up at app.erlin.ai/get-started. Once your domain is entered, Erlin automatically captures brand context, pulls social signals, and prepares baseline insights in the background.

Step 3: Select competitors

Erlin suggests up to 5 competitors based on your domain and category. Accept, remove, or add competitors manually. Your AI visibility score means more when read against competitors than as a standalone number.

Step 4: Choose prompts to track

Erlin suggests high-intent prompts relevant to your category. These are queries AI platforms actually process when buyers are in research mode.

Step 5: View your initial snapshot

After setup, you get an instant baseline: your AI Visibility Rank, Traffic Rank, and competitor comparison. This is your starting point and the number you measure all future changes against.

Step 6: Connect Google Analytics and Search Console

Linking GA and GSC unlocks AI vs. non-AI traffic breakdowns, conversion rate comparisons, and AI source distribution by platform. This is where the difference between AI traffic and everything else becomes visible for your brand specifically.

Step 7: Explore the Prompts section

Under AI Visibility → Prompts, you see visibility trends and competitor comparisons at the individual prompt level. The Answer History tab shows actual text from recent ChatGPT or Perplexity responses: whether your brand was mentioned, whether it was cited, and what sources were referenced.

AI Visibility Analytics in Practice

Here's what the metrics look like when they turn into actual outcomes:

TrustEvals.ai: 40% Increase in AI-Driven Traffic

TrustEvals.ai provides AI auditing and governance services across the full compliance stack, including SOC 2 and the NIST AI RMF.

As buyers shifted toward AI search, prospective clients started asking questions like "what is an AI risk audit?" and "how do you apply the NIST AI Risk Management Framework?" Despite deep expertise, TrustEvals was consistently missing from the answers.

The problem wasn't content quality. It was extractability. Long-form whitepapers were hard for AI systems to summarize or cite. Services were written to inform rather than to be categorized as executable solutions.

The technical vocabulary they owned, such as "model drift," "algorithmic accountability," and "risk thresholds," wasn't structured in a way AI could reuse consistently.

Using Erlin, they restructured compliance documentation into concise, machine-readable formats. They clarified service definitions so AI could categorize their work as actionable solutions.

They published authoritative standalone definitions for governance terms that AI systems started reusing when answering queries in that space.

The result: 40% more traffic from AI platforms. Users who arrived already understood compliance frameworks and were closer to making a vendor decision. The AI citation did qualification work before the click.

iRESTORE: 6.5x AI Traffic Growth in 90 Days

iRESTORE sells laser hair growth devices. Traditional SEO and paid channels performed well. But when buyers asked AI platforms "best laser hair growth device" or "does this actually work for hair loss," iRESTORE wasn't in the answers and had no way to know it.

They used Erlin to build the visibility infrastructure they were missing. First: ensuring AI systems could consistently identify what the product is, what problems it solves, and how it differs from alternatives.

Second: restructuring content for AI extraction rather than human skimming. Third: prioritizing schema and structured data to reduce ambiguity.

Within 90 days, AI referral traffic grew 6.5x. Conversion rate from AI sources was 3x the site average.

The data also revealed that 94% of AI traffic came from ChatGPT, which let the team focus optimization on the one platform actually driving revenue, rather than spreading effort evenly across five.

Optimization Strategies That Move the Numbers

Tracking is only useful if it leads somewhere. These are the levers that actually drive results.

Build fact density into your content

Brands with 9+ structured attributes see 78% AI coverage. Brands with 0–2 attributes see 9%. Make sure your pricing, features, use cases, and differentiators are stated explicitly on your key pages (Erlin data, 2026).
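A simple audit is to list the attributes buyers ask about and check which ones each key page states outright. A minimal sketch; the ten-item checklist is a hypothetical starting point rather than Erlin's definition of a structured attribute, and the page facts are placeholders:

```python
# Hypothetical attribute checklist; adapt it to what buyers in your category ask about.
KEY_ATTRIBUTES = (
    "pricing", "free_trial", "core_features", "integrations", "use_cases",
    "target_customer", "differentiators", "supported_platforms",
    "security_compliance", "support_options",
)


def fact_density(page_facts: dict[str, str]) -> tuple[int, list[str]]:
    """Count checklist attributes a page states explicitly and list the missing ones."""
    stated = [a for a in KEY_ATTRIBUTES if page_facts.get(a, "").strip()]
    missing = [a for a in KEY_ATTRIBUTES if a not in stated]
    return len(stated), missing


# Hypothetical facts pulled from a product page.
page = {
    "pricing": "From $49 per user per month",
    "core_features": "Pipeline tracking, invoicing, reporting",
    "use_cases": "Agencies managing multiple client accounts",
    "differentiators": "",  # field exists but is empty, so it doesn't count
}
count, missing = fact_density(page)
print(f"{count} of {len(KEY_ATTRIBUTES)} attributes stated; missing: {', '.join(missing)}")
```

Pages stating fewer than nine attributes are the first candidates for the additions described above.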

Earn third-party citations

68% of the sources AI systems draw on are third-party, not brand-owned. Reddit discussions produce 3.4x higher citation rates than owned content. Wikipedia produces 2.9x. Review platforms like G2 produce 2.6x (Erlin data, 2026). Earning genuine external mentions is a direct input to AI coverage.

Add structured data

An llms.txt file, FAQ schema, and comparison tables each drive 28–34% higher AI coverage within 14–21 days. Static HTML with schema markup parses successfully for AI systems 94% of the time; JavaScript-rendered content parses successfully only 23% of the time (Erlin data, 2026).
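FAQ schema is typically published as a JSON-LD block using schema.org's FAQPage type, written into the static HTML rather than injected by JavaScript. A minimal sketch that renders question/answer pairs into that block; the product name and Q&A text are placeholders:

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Render question/answer pairs as a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can't close the script tag early; "<\/" is still valid JSON.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder Q&A; use the questions buyers actually ask in your category.
print(faq_jsonld([
    ("How much does Acme cost?", "Acme starts at $49 per user per month."),
]))
```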

Refresh content monthly

Brands updating monthly see ~23% higher AI representation than inactive brands. Content under 3 months old averages 48% AI coverage. Content over 24 months old averages 18% (Erlin data, 2026). The decay is easy to ignore in the short term, but it compounds.
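A monthly refresh routine can start from a list of key pages ordered by how long ago they were updated. A minimal sketch, assuming you can export a last-updated date per URL (paths and dates are hypothetical):

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return pages not updated within `max_age_days`, oldest first.

    90 days approximates the "under 3 months" freshness band in the data above.
    """
    cutoff = today - timedelta(days=max_age_days)
    stale = [url for url, updated in last_updated.items() if updated < cutoff]
    return sorted(stale, key=lambda url: last_updated[url])


# Hypothetical last-modified dates, e.g. exported from a CMS or sitemap <lastmod> values.
pages = {
    "/pricing": date(2026, 1, 10),
    "/features": date(2025, 6, 1),
    "/blog/crm-guide": date(2023, 11, 20),
}
print(stale_pages(pages, today=date(2026, 3, 1)))
```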

Catch misrepresentation early

Monitored brands detect AI errors in 14 days on average. Unmonitored brands take 67 days, nearly 5x longer (Erlin data, 2026). Outdated pricing, wrong feature descriptions, and incorrect comparisons reduce citation probability and send buyers in the wrong direction.
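Outdated pricing is one of the easier errors to check automatically. A minimal sketch that pulls dollar amounts out of an AI answer and flags any that aren't on your current price list; it assumes USD prices written with a leading $, and the plan names and prices are hypothetical:

```python
import re
from decimal import Decimal

# Matches USD amounts like $49, $49.99, $1,299 or $1,299.00.
PRICE_RE = re.compile(r"\$\s?(\d{1,3}(?:,\d{3})+(?:\.\d{2})?|\d+(?:\.\d{2})?)")


def price_mismatches(answer_text: str, current_prices: set[Decimal]) -> list[Decimal]:
    """Dollar amounts in an AI answer that don't match any current price."""
    found = {Decimal(amount.replace(",", "")) for amount in PRICE_RE.findall(answer_text)}
    return sorted(found - current_prices)


# Hypothetical answer text and price list.
answer = "Acme's Pro plan costs $49 per month, and the Team plan is $1,299 per year."
print(price_mismatches(answer, {Decimal("59"), Decimal("1299")}))
```

A check like this only catches numeric drift; wrong feature descriptions and comparisons still need a human read of the answer text.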

Assign an owner

Someone needs to own AI visibility the way someone owns SEO or paid media. Monthly review. Named owner. Visibility score tracked alongside other marketing KPIs. Without that, measurement stays a one-time exercise rather than a compounding capability.

Frequently Asked Questions

What Is the Difference Between AI Visibility Analytics and Traditional SEO Analytics?

Traditional SEO analytics tracks keyword rankings, organic traffic, impressions, and click-through rates. AI visibility analytics measures how often your brand appears in AI-generated answers. A brand can rank #1 on Google and still be invisible in ChatGPT's answer to the same question. The two systems use different logic, so an SEO analytics stack on its own can't tell you where you stand in AI answers.

How Often Should I Track AI Visibility Metrics?

Weekly or biweekly is the practical minimum. AI responses vary run-to-run, so individual data points are noisy. You need trends over time. Erlin lets you schedule prompts to run automatically, which makes consistent tracking much easier than manual spot checks.
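Because single runs are noisy, a trailing average over several weeks makes the trend easier to read. A minimal sketch; the four-week window and the scores are assumptions for illustration:

```python
def rolling_mean(values: list[float], window: int = 4) -> list[float]:
    """Trailing average over `window` consecutive periods (one value per full window)."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


# Hypothetical weekly visibility scores (0-100).
weekly = [20, 30, 25, 25, 35, 30]
print(rolling_mean(weekly))
```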

Does Domain Authority Matter for AI Visibility?

Less than you'd expect. Erlin's research found that smaller brands with a domain authority under 20 consistently outperform Fortune 500 companies in specific query categories. A focused brand with strong entity context and structured content outperforms a larger competitor with broader but shallower coverage.

Why Does My AI Traffic Look Small in GA4 Even If I'm Being Cited?

Two reasons. First, many AI interactions are zero-click: the user got their answer without visiting anyone's site. Second, GA4 often misclassifies traffic from AI platforms as "direct" or as a generic referral. Setting up custom channel groups for known AI sources (chat.openai.com, perplexity.ai, gemini.google.com) isolates this traffic more accurately.
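The same list of AI sources can drive both the GA4 channel group condition and your own platform breakdown. A minimal sketch, assuming session sources are exported as hostnames; the host-to-platform map is a starting point you'd extend, `chatgpt.com` is added here because ChatGPT now serves from that domain, and the generated pattern may need adjusting to how your GA4 condition matches:

```python
import re
from collections import Counter
from typing import Optional

# Known AI referrer hosts; extend the map as other platforms send traffic.
AI_SOURCES = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}

# Pattern for a "session source matches regex" style channel-group condition.
GA4_SOURCE_REGEX = "|".join(re.escape(domain) for domain in AI_SOURCES)


def ai_platform(source: str) -> Optional[str]:
    """Map a session source (hostname) to an AI platform name, or None."""
    host = source.strip().lower()
    for domain, platform in AI_SOURCES.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return None


def platform_breakdown(sources: list[str]) -> dict[str, float]:
    """Percentage of AI-referred sessions coming from each platform."""
    counts = Counter(p for p in map(ai_platform, sources) if p is not None)
    total = sum(counts.values())
    return {platform: 100 * n / total for platform, n in counts.items()}


print(GA4_SOURCE_REGEX)
print(platform_breakdown(["chatgpt.com", "chat.openai.com", "www.perplexity.ai", "google", "(direct)"]))
```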

How Long Does It Take to See Results from AI Visibility Optimization?

Most brands see measurable improvements within 60–90 days of consistent optimization. Citation growth typically compounds over 4–6 months as AI systems recognize topical authority. Structural changes, such as FAQ schema or an llms.txt file, can show impact faster: Erlin's data shows 14–21 days for some structured data changes to register (Erlin data, 2026).

What Is the First Thing to Do If I've Never Tracked AI Visibility Before?

Get a baseline. Sign up at app.erlin.ai/get-started, enter your domain, and let the platform run its initial scan. Within 10–15 minutes, you'll have an AI Visibility Rank, a competitor comparison, and a list of content gaps. Without that baseline, you're optimizing without knowing your starting point.

Get Your AI Visibility Score

See exactly where your brand appears, and where competitors are getting cited instead.

Get Your Free Audit Now → app.erlin.ai/get-started
