AI Brand Visibility Tracking: A Complete Guide (2026)

AI brand visibility tracking is becoming essential as AI tools shape how buyers discover and evaluate brands.

This 2026 guide breaks down how to measure your presence across platforms like ChatGPT, Perplexity, and Gemini, and turn visibility into a competitive advantage.

What AI brand visibility tracking measures

AI brand visibility tracking monitors how often and how accurately your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, Gemini, and Claude.

It's not the same as rank tracking. In traditional SEO, you're monitoring a position: you rank 4th, then you move to 2nd. In AI search, visibility is binary per prompt: your brand is either in the answer or it isn't.

AI platforms cite an average of 2.8 brands per response. That's it. If you're one of them, you get all of the user's attention on that query. If not, you're invisible regardless of your domain authority.

The core metrics you track are:

Prompt coverage: the percentage of high-intent prompts in your category where your brand appears

Citation rate: how often your brand is named specifically, not just adjacent to the topic

Share of voice: your citation rate relative to competitors across the same prompt set

Sentiment: how AI describes your brand when it does appear, including whether the description is positive, neutral, or negative, and whether it is accurate

Platform breakdown: which platforms cite you and which don't, since performance varies significantly by platform
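To make the coverage, share-of-voice, and platform definitions concrete, here is a minimal Python sketch that computes them from logged results. It assumes a hypothetical record format (one `Result` per prompt, platform, and brand, with a boolean `mentioned` flag) and defines share of voice as your mentions divided by all tracked brands' mentions. Citation rate and sentiment need the answer text and are left out. Tracking tools, Erlin included, may define these metrics differently.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    """One observation: did `brand` appear in `platform`'s answer to `prompt`?"""
    prompt: str
    platform: str
    brand: str
    mentioned: bool


def prompt_coverage(results, brand):
    """Percentage of tracked (prompt, platform) answers that mention `brand`."""
    own = [r for r in results if r.brand == brand]
    if not own:
        return 0.0
    return 100 * sum(r.mentioned for r in own) / len(own)


def share_of_voice(results, brand):
    """`brand`'s mentions as a percentage of all tracked brands' mentions."""
    total = sum(r.mentioned for r in results)
    if total == 0:
        return 0.0
    ours = sum(r.mentioned for r in results if r.brand == brand)
    return 100 * ours / total


def platform_breakdown(results, brand):
    """Prompt coverage for `brand`, computed separately for each platform."""
    by_platform = defaultdict(list)
    for r in results:
        if r.brand == brand:
            by_platform[r.platform].append(r.mentioned)
    return {p: 100 * sum(v) / len(v) for p, v in by_platform.items()}


# Hypothetical brands and prompt, for illustration only.
results = [
    Result("best crm for startups", "chatgpt", "Acme", True),
    Result("best crm for startups", "chatgpt", "Rival", True),
    Result("best crm for startups", "perplexity", "Acme", False),
    Result("best crm for startups", "perplexity", "Rival", True),
]
print(prompt_coverage(results, "Acme"))     # 50.0
print(share_of_voice(results, "Acme"))      # about 33.3
print(platform_breakdown(results, "Acme"))  # {'chatgpt': 100.0, 'perplexity': 0.0}
```

In a real setup, each platform would be queried for every prompt in the tracked set, and the same functions would run over hundreds of records rather than four.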

Most brands don't track any of these. Erlin's survey of 200+ marketing leaders found that 67% don't know how to measure AI visibility and 58% say no one on their team owns it. (Erlin survey, 200+ marketing leaders, 2026)

Why your Google ranking doesn't predict your AI visibility score

This is the thing that trips people up most. Erlin tracked 500+ brands and found that traditional SEO ranking explains very little of why a brand gets cited in AI responses. The two systems use different signals.

Google rewards backlinks, keyword matching, and domain authority. AI systems reward something closer to factual clarity: they're asking, "can I confidently extract and summarize this brand's position on this topic?" A brand can rank first on Google for a query and still not appear in ChatGPT's answer to the same question.

Four factors explain 89% of AI visibility variance across Erlin's dataset. (Erlin data, 500+ brands, 2026)

Fact density: Brands with 9+ structured, extractable facts about their product achieve 78% average AI coverage. Brands with 0–2 facts achieve 9%. Each additional structured attribute adds ~8.3% median coverage. AI doesn't reward marketing language; it rewards specific, verifiable claims it can lift and use.

Source authority: 68% of AI citations come from third-party sources. Only 32% from brand-owned websites. Reddit discussions drive 3.4x higher citation rates than owned content. Wikipedia drives 2.9x. Review platforms drive 2.6x. If your brand exists only on your own website, AI has limited confidence to cite you.

Structured data: Comparison tables drive +34% coverage lift in 14 days. An llms.txt file drives +32% in the same window. FAQ schema drives +28% in 21 days. Static HTML with schema markup has a 94% AI parsing success rate. JavaScript-rendered content: 23%. PDFs: 7%.

Content recency: Brands with content under 3 months old average 48% AI coverage. Content over 24 months old averages 18%. Brands lose ~1.8% AI coverage per month when content isn't refreshed. (Erlin data, 2026)

The gap between the brands that understand this and the ones that don't is already 9x in citation rates, and widening 3.2% every month. (Erlin data, 500+ brands, 2026)

Why AI brand visibility tracking matters beyond vanity metrics

Brands tracked by Erlin see AI traffic converting at 3–6x the rate of traditional organic. (Erlin client data, 2026) The reason is intent: people using AI to research a purchase are further along.

They're not browsing. They're comparing. When AI names your brand as one of two or three options, the user who clicks has already been pre-sold by the AI's reasoning.

The flip side is error risk. AI gets things wrong. It misquotes pricing. It attributes features to the wrong product. It sometimes describes a brand's positioning in ways that don't match current messaging. Monitored brands detect these errors in 14 days on average; unmonitored brands take 67 days. (Erlin data, 2026) That's 53 days of an AI search engine telling your buyers something incorrect about your product, at scale, before you know.
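One way to catch pricing errors is to check answer text against facts you already know. A minimal Python sketch, assuming a hypothetical set of your current list prices and that answers quote prices as dollar amounts. It inspects only sentences that name your brand, so competitors' prices elsewhere in the answer are ignored. The sentence splitting and price matching are rough heuristics, not a robust parser.

```python
import re

# "$29", "$ 29", "$1,299", "$49.99"; captures the number without the "$".
PRICE_RE = re.compile(r"\$\s?(\d+(?:,\d{3})*(?:\.\d{2})?)")
# Split after ., ! or ? followed by whitespace (decimals like 49.99 survive).
SENTENCE_RE = re.compile(r"(?<=[.!?])\s+")


def find_price_errors(answer, brand, known_prices):
    """Return dollar amounts quoted alongside `brand` that aren't in `known_prices`."""
    errors = []
    for sentence in SENTENCE_RE.split(answer):
        if brand.lower() not in sentence.lower():
            continue  # only check sentences that talk about this brand
        for raw in PRICE_RE.findall(sentence):
            amount = float(raw.replace(",", ""))
            if amount not in known_prices:
                errors.append(amount)
    return errors


# Hypothetical answer text and price list.
answer = "Acme starts at $39 per month. Rival is cheaper at $19."
print(find_price_errors(answer, "Acme", {29.0, 99.0}))  # [39.0]
```

Running a check like this on every stored answer turns "review what was said" into a list of specific discrepancies to investigate.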

High-traffic prompts in your category churn at 23% month-over-month. If you're not tracking, you won't know when you drop off one.
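Spotting a drop-off comes down to comparing two runs of the same prompt set. A minimal Python sketch, assuming each snapshot is a hypothetical mapping from prompt to the set of brands named in the answer. It also reports prompts added to or retired from the tracked set, since the prompts that matter change over time.

```python
def visibility_changes(previous, current, brand):
    """Compare two snapshots ({prompt: set of brands named}) for one brand."""
    shared = previous.keys() & current.keys()
    lost = sorted(p for p in shared if brand in previous[p] and brand not in current[p])
    gained = sorted(p for p in shared if brand not in previous[p] and brand in current[p])
    added = sorted(current.keys() - previous.keys())    # newly tracked prompts
    retired = sorted(previous.keys() - current.keys())  # prompts no longer tracked
    return {"lost": lost, "gained": gained, "added": added, "retired": retired}


# Hypothetical monthly snapshots.
last_month = {
    "best crm for startups": {"Acme", "Rival"},
    "crm with free tier": {"Acme"},
}
this_month = {
    "best crm for startups": {"Rival", "Other"},
    "crm with free tier": {"Acme"},
    "crm for agencies": {"Acme"},
}
print(visibility_changes(last_month, this_month, "Acme"))
# {'lost': ['best crm for startups'], 'gained': [], 'added': ['crm for agencies'], 'retired': []}
```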

The AI Visibility Ladder: where does your brand sit?

Erlin's analysis of 500+ brands across four platforms produces five tiers of AI visibility maturity.

AI Invisible (0–15% prompt coverage): Fewer than 3 verifiable facts, no structured data, content older than 18 months. AI has too little confidence to cite the brand. 10% of brands in Erlin's dataset are here.

AI Fragile (15–35%): 3–4 detectable facts, fewer than 25 reviews, sporadic Reddit mentions. Coverage is inconsistent. The brand shows up sometimes, in narrow query sets. 20% of brands.

AI Present (35–60%): 5–7 structured facts, 25–75 reviews, regular citations. Solid baseline, but not yet showing up on comparison queries where the real buyer intent sits. 25% of brands.

AI Preferred (60–80%): 8+ structured facts, 50+ reviews, active Reddit presence across 5+ communities, llms.txt deployed, FAQ schema in place, frequent top-3 placement. 30% of brands.

AI Dominant (80%+): 10+ facts, Wikipedia presence, 100+ reviews, daily Reddit engagement, weekly content updates, zero detectable errors. 15% of brands.

Adding up the tiers, 30% of brands score below 35% prompt coverage (AI Invisible and AI Fragile), and more than half, 55%, score below 60%. (Erlin data, 2026) In other words, most brands have not yet reached AI Preferred: many are present enough to feel fine about it, but not present enough to capture the buyer at the moment of decision.
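The ladder's coverage ranges can be written as a lookup, and the tier shares can be summed to see how much of the dataset sits below a given coverage level. A minimal Python sketch that uses only the ranges and percentages quoted above. It classifies by coverage alone (the tiers also depend on facts, reviews, and other signals), and it assigns boundary values such as exactly 35% to the higher tier, which is an assumption.

```python
from bisect import bisect_right

# Lower coverage bound (%) of each tier, from the AI Visibility Ladder.
TIER_FLOORS = [0, 15, 35, 60, 80]
TIER_NAMES = ["AI Invisible", "AI Fragile", "AI Present", "AI Preferred", "AI Dominant"]
# Share of brands in each tier (%), as reported for Erlin's dataset.
TIER_SHARES = [10, 20, 25, 30, 15]


def tier_for(coverage_pct):
    """Map a prompt-coverage percentage to its ladder tier (coverage only)."""
    if not 0 <= coverage_pct <= 100:
        raise ValueError("coverage must be between 0 and 100")
    return TIER_NAMES[bisect_right(TIER_FLOORS, coverage_pct) - 1]


def share_below(threshold_pct):
    """Percentage of brands in tiers whose upper bound is at or below the threshold."""
    uppers = TIER_FLOORS[1:] + [100]
    return sum(s for s, upper in zip(TIER_SHARES, uppers) if upper <= threshold_pct)


print(tier_for(42))     # AI Present
print(share_below(35))  # 30
print(share_below(60))  # 55
```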

How to set up AI brand visibility tracking

Manual tracking is possible but slow. You run prompts by hand, log results in a spreadsheet, repeat weekly, and try to spot trends. It works at a very small scale. It doesn't scale to the number of prompts that actually matter in any real category, and it tells you nothing about competitors.
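Even when tracking by hand, a fixed log format keeps weekly runs comparable. A minimal Python sketch: `ask` is a hypothetical stand-in for however you query a platform (pasting into a chat window, or a vendor API you already use), and each run produces one row per prompt and brand. Detecting a brand by case-insensitive substring match is a simplifying assumption; it misses misspellings and can match unrelated words.

```python
import csv
from datetime import date


def run_prompts(prompts, brands, ask, platform, run_date=None):
    """Ask each prompt once; return one row per (prompt, brand).

    Row layout: [date, platform, prompt, brand, mentioned].
    """
    day = (run_date or date.today()).isoformat()
    rows = []
    for prompt in prompts:
        answer = ask(prompt).lower()
        for brand in brands:
            rows.append([day, platform, prompt, brand, brand.lower() in answer])
    return rows


def append_to_log(rows, path):
    """Append rows to a CSV file so successive runs accumulate in one place."""
    with open(path, "a", newline="", encoding="utf-8") as f:
        csv.writer(f).writerows(rows)


def fake_ask(prompt):
    # Hypothetical stand-in for a real platform query.
    return "Popular options include Acme and Rival."


rows = run_prompts(["best crm for startups"], ["Acme", "Other"], fake_ask,
                   platform="chatgpt", run_date=date(2026, 1, 5))
print(rows)
# [['2026-01-05', 'chatgpt', 'best crm for startups', 'Acme', True],
#  ['2026-01-05', 'chatgpt', 'best crm for startups', 'Other', False]]
```

Calling `append_to_log(rows, "visibility_log.csv")` after each run builds the spreadsheet described above; the file name is arbitrary. The sketch also shows the scaling problem: every extra prompt, platform, and competitor multiplies the rows you have to collect and review.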

Automated tools run prompts on a schedule, track changes over time, benchmark against competitors, and surface where to act. Here's how to set it up using Erlin, one of the few tools designed to connect visibility tracking to content action rather than just monitoring.

Step 1: Choose your tracking platform

Look for a tool that integrates site audits, prompt libraries, competitor benchmarking, and GA/GSC sync. For teams that want to go from "here's where I'm missing" to "here's the content to fix it" without switching platforms, Erlin is built around that specific workflow.

Step 2: Sign up and enter your domain

Sign up at app.erlin.ai/get-started. Once your domain is entered, Erlin automatically captures brand context, pulls social signals, and prepares baseline insights in the background.

Step 3: Select competitors

Erlin suggests up to 5 competitors based on your domain and category. Accept, remove, or add competitors manually. Your AI visibility score means more when read against competitors than as a standalone number.

Step 4: Choose prompts to track

Erlin suggests high-intent prompts relevant to your category: the queries AI platforms process when buyers are in research mode. Add your own, or adjust the suggestions to match how your buyers actually search.

Step 5: View your initial snapshot

After setup, you get an instant baseline: your AI Visibility Rank, Traffic Rank, and competitor comparison. This is the number you measure all future changes against. Every optimisation decision from here is relative to this starting point.

Step 6: Connect Google Analytics and Search Console

Linking GA and GSC unlocks AI vs. non-AI traffic breakdowns, conversion rate comparisons, and AI source distribution by platform. This is where the difference between AI traffic and everything else becomes visible for your brand specifically: the 3–6x conversion gap in Erlin's client data only shows up in your own numbers when you track both together.
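Outside any particular tool, the AI vs. non-AI split usually comes down to classifying sessions by referrer. A minimal Python sketch of that idea. The hostname list is an assumption to check against the referrers that actually appear in your analytics, and the `(referrer_url, converted)` pairs are a hypothetical simplification of an analytics export. Sessions that arrive without a referrer are counted as non-AI, so treat the AI figures as a lower bound.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI platforms; verify against your own data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def ai_platform(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it isn't one."""
    host = (urlparse(referrer_url).hostname or "").removeprefix("www.")
    return AI_REFERRERS.get(host)


def conversion_rates(sessions):
    """Conversion rate (%) for AI vs. non-AI sessions from (referrer, converted) pairs."""
    counts = {"ai": [0, 0], "non_ai": [0, 0]}  # [sessions, conversions]
    for referrer, converted in sessions:
        bucket = "ai" if ai_platform(referrer) else "non_ai"
        counts[bucket][0] += 1
        counts[bucket][1] += int(converted)
    return {k: (100 * c / n if n else 0.0) for k, (n, c) in counts.items()}


# Hypothetical sessions; "" means no referrer was recorded.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search", False),
    ("https://www.google.com/", False),
    ("https://www.google.com/", True),
    ("", False),
]
print(conversion_rates(sessions))  # ai: 50.0, non_ai: about 33.3
```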

Step 7: Explore the Prompts section

Under AI Visibility → Prompts, you see visibility trends and competitor comparisons at the individual prompt level. The Answer History tab shows actual text from recent ChatGPT or Perplexity responses: whether your brand was mentioned, whether it was cited, and what sources were referenced.
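The distinction between being mentioned and being cited is easy to reproduce when you review answers yourself. A minimal Python sketch, assuming you have the answer text plus the source URLs the platform displayed. Here "mentioned" means the brand name appears in the text, and "cited" means at least one source is on the brand's own domain or a subdomain of it. Both rules are simplifications, and the brand and domain are placeholders.

```python
from urllib.parse import urlparse


def _on_domain(url, domain):
    """True if `url` is hosted on `domain` or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_answer(answer_text, source_urls, brand, brand_domain):
    """Report whether `brand` is named in the answer and whether its site is a source."""
    return {
        "mentioned": brand.lower() in answer_text.lower(),
        "cited": any(_on_domain(url, brand_domain) for url in source_urls),
    }


result = classify_answer(
    "Acme and Rival are both popular choices for small teams.",
    ["https://www.g2.com/products/acme", "https://blog.acme.example/pricing"],
    brand="Acme",
    brand_domain="acme.example",
)
print(result)  # {'mentioned': True, 'cited': True}
```

Third-party sources such as the review-site URL in this example don't count as a citation of the brand's own site, even though they may well be why the brand was mentioned.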

What to do with what you find

Tracking without acting on it is just monitoring. The point of AI brand visibility tracking is to reverse-engineer what's driving citation gaps and fix them.

If your fact density is low, the fix is content: add structured product facts, pricing clarity, use cases, comparison tables. Each additional structured attribute adds ~8.3% median coverage.

If your source authority is low, the fix is off-site: earn Reddit mentions in relevant communities, build review platform presence on G2 or Capterra, and get mentioned in independent media. Third-party sources drive 68% of AI citations; you can't compensate for this with owned content alone.

If your structured data is missing, the fix is technical: deploy llms.txt, add FAQ schema, convert JavaScript-rendered content to static HTML, and add other schema.org markup. These drive measurable lifts in 14–21 days.
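As an illustration of the first two fixes, here is a minimal Python sketch that generates an llms.txt file and FAQPage JSON-LD. The llms.txt layout (H1 name, blockquote summary, H2 sections of links) follows the proposal published at llmstxt.org. FAQPage, Question, and Answer are schema.org types. The company name, URLs, and FAQ content are placeholders.

```python
import json


def build_llms_txt(name, summary, sections):
    """Render llms.txt text: H1 name, blockquote summary, H2 sections of links.

    `sections` maps a section title to a list of (title, url, note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for section, links in sections.items():
        lines += [f"## {section}", ""]
        lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
        lines.append("")
    return "\n".join(lines)


def build_faq_jsonld(faqs):
    """Return schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


faqs = [("How much does Acme cost?", "Acme starts at $29 per month.")]
print(build_llms_txt(
    "Acme",
    "Acme is a CRM for small sales teams.",
    {"Docs": [("Pricing", "https://acme.example/pricing", "Plans and limits")]},
))
# Embed this output in the page inside <script type="application/ld+json">.
print(build_faq_jsonld(faqs))
```

The llms.txt output is served from the site root, and the JSON-LD goes on the page whose visible FAQ it describes. Generating both from one source of facts keeps them from drifting apart.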

If your content is stale, the fix is a refresh cadence: update core product pages monthly, push new content regularly, and monitor when AI starts surfacing outdated information. Brands updating monthly see ~23% higher AI coverage than those with stale content. (Erlin data, 2026)
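A refresh cadence is easier to keep when stale pages are flagged automatically. A minimal Python sketch, assuming you can export each page's URL and last-modified date (for example from your CMS, or from `lastmod` values in your sitemap). The 30-day default mirrors the monthly cadence above, and the URLs and dates are placeholders.

```python
from datetime import date


def pages_due_for_refresh(pages, today, max_age_days=30):
    """Return (url, age_in_days) for pages older than `max_age_days`, oldest first.

    `pages` maps URL -> last-modified date.
    """
    due = [
        (url, (today - modified).days)
        for url, modified in pages.items()
        if (today - modified).days > max_age_days
    ]
    return sorted(due, key=lambda item: item[1], reverse=True)


pages = {
    "https://acme.example/pricing": date(2026, 1, 2),
    "https://acme.example/features": date(2025, 6, 1),
    "https://acme.example/compare": date(2023, 11, 15),
}
for url, age in pages_due_for_refresh(pages, today=date(2026, 1, 20)):
    print(f"{age:>4} days  {url}")
```

Sorting oldest first puts the pages most likely to be surfacing outdated information at the top of the queue.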

The Opportunities section in Erlin surfaces exactly which of these gaps apply to your brand and ranks them by impact using the Fire Score, so you're not guessing which fix to prioritise.

The metrics to report to leadership

AI visibility is measurable. These are the four numbers worth putting in a dashboard:

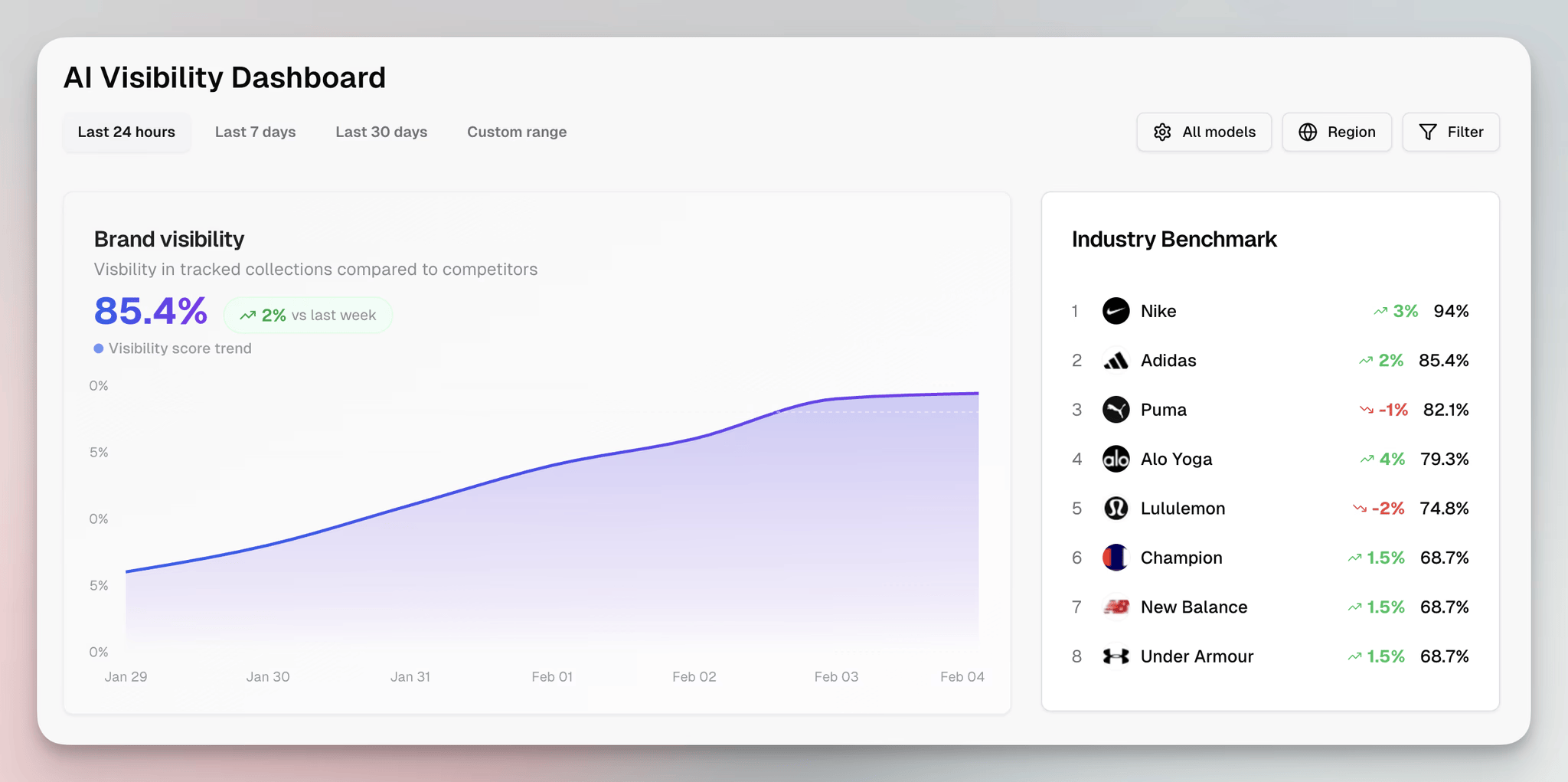

AI Visibility Score: your overall prompt coverage percentage across tracked platforms. This is the headline number.

Share of voice: your citation rate relative to the competitors you're tracking. This shows whether you're gaining or losing ground relative to where buyers actually compare options.

Mention and citation rate by platform: broken out by ChatGPT, Perplexity, Gemini, and Claude. Platform performance varies significantly. ChatGPT drives 91% of all AI referral traffic, so gaps there cost more than gaps elsewhere.

Sentiment score: how AI describes your brand when it does appear. A high citation rate with negative or inaccurate sentiment is a different problem from low coverage, and it needs a different fix.
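To see why gaps on some platforms cost more than others, weight each platform's coverage by its share of AI referral traffic. A minimal Python sketch: only the ChatGPT share (91%) comes from the figure above. The other shares and all coverage numbers are hypothetical placeholders for your own analytics data.

```python
def traffic_weighted_coverage(coverage, referral_share):
    """Weight each platform's prompt coverage (%) by its share of AI referrals.

    Both arguments map platform -> value; shares are normalised to sum to 1.
    """
    total = sum(referral_share.values())
    if total == 0:
        raise ValueError("referral shares must not all be zero")
    return sum(coverage.get(p, 0.0) * s / total for p, s in referral_share.items())


coverage = {"chatgpt": 20.0, "perplexity": 70.0, "gemini": 60.0, "claude": 50.0}
# Hypothetical referral mix apart from ChatGPT; take yours from analytics.
share = {"chatgpt": 0.91, "perplexity": 0.05, "gemini": 0.03, "claude": 0.01}
print(round(traffic_weighted_coverage(coverage, share), 1))  # 24.0
```

Here the simple average across the four platforms is 50%, but the traffic-weighted figure is 24%, because the weakest platform is the one sending most of the traffic.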

These map cleanly to a monthly review alongside existing marketing metrics. Assign one owner. Set a review cadence. The brands that treat AI visibility as a measured capability with clear ownership consistently outperform those that treat it as incidental. (Erlin data, 2026)

Frequently asked questions

Does ranking on page 1 of Google mean I'll appear in AI answers?

Not reliably. Google ranking and AI citation have a weak correlation. AI engines weigh entity clarity, content freshness, and third-party validation far more than keyword density or backlink volume. A brand can rank first on Google and not appear in ChatGPT's answer to the same question.

How many brands does AI typically recommend per query?

An average of 2.8. This creates a winner-take-most dynamic. If your brand is one of the 2–3 cited, you get the user's full attention on that query. If not, you're invisible regardless of how well you rank elsewhere.

Can a smaller brand compete with enterprise players in AI search?

Yes. Erlin's analysis shows smaller brands with strong entity context and structured data routinely outperform larger competitors in specific query categories. AI doesn't default to the biggest brand. It defaults to the clearest one. (Erlin data, 2026)

How long does it take to see results from AI visibility optimisation?

Structured data changes typically show impact in 14–21 days. Content updates take 30–45 days to affect citation rates meaningfully. A brand moving from AI Fragile to AI Present tier sees measurable citation improvement within 30–45 days of systematic optimisation. (Erlin data, 2026)

How do I know if AI is describing my brand incorrectly?

You won't, unless you're tracking it. Monitored brands detect AI errors in 14 days on average; unmonitored brands take 67 days. (Erlin data, 2026) Set up prompt tracking that reviews the answer text itself: not just whether your brand appeared, but what was said about it.

Is there a first-mover advantage in AI search?

Erlin's data shows first-movers gain a 3–5x citation advantage over brands that optimise later for the same queries. Erlin attributes this to AI systems learning from their own outputs and engagement patterns, which reinforces early visibility. The gap between winners and laggards is already 9x and grows 3.2% every month. (Erlin data, 500+ brands, 2026)

Get your AI visibility score

Most brands have no idea where they stand in AI search. The only way to know is to measure it.
