Most brands know their Google ranking. Few know what ChatGPT says about them when a buyer asks a relevant question. That gap is where AI search monitoring comes in, and right now, most brands have no system for it.

Only 16% of brands systematically track AI search performance. (Erlin data, 2026) The other 84% are flying without instruments while AI platforms quietly shape buyer perception of their brand, their competitors, and their category.

This guide covers what AI search monitoring is, which platforms to track, the metrics that matter, and how to build a system that tells you what AI says about your brand, and what to do about it.

What Is AI Search Monitoring?

AI search monitoring is the practice of systematically tracking how your brand appears in responses generated by AI platforms: which prompts surface your brand, how accurately it is described, how often it appears relative to competitors, and whether those appearances drive action.

It is different from traditional SEO monitoring in one critical way. SEO monitoring tracks your position on a results page. AI search monitoring tracks whether you exist in the answer at all, and if so, what is being said.

When a buyer types "what's the best project management tool for remote teams" into Perplexity or ChatGPT, they get a generated answer that cites specific brands. The brands cited get the traffic. The brands not cited are invisible. AI search monitoring tells you which side of that line you are on, and why.

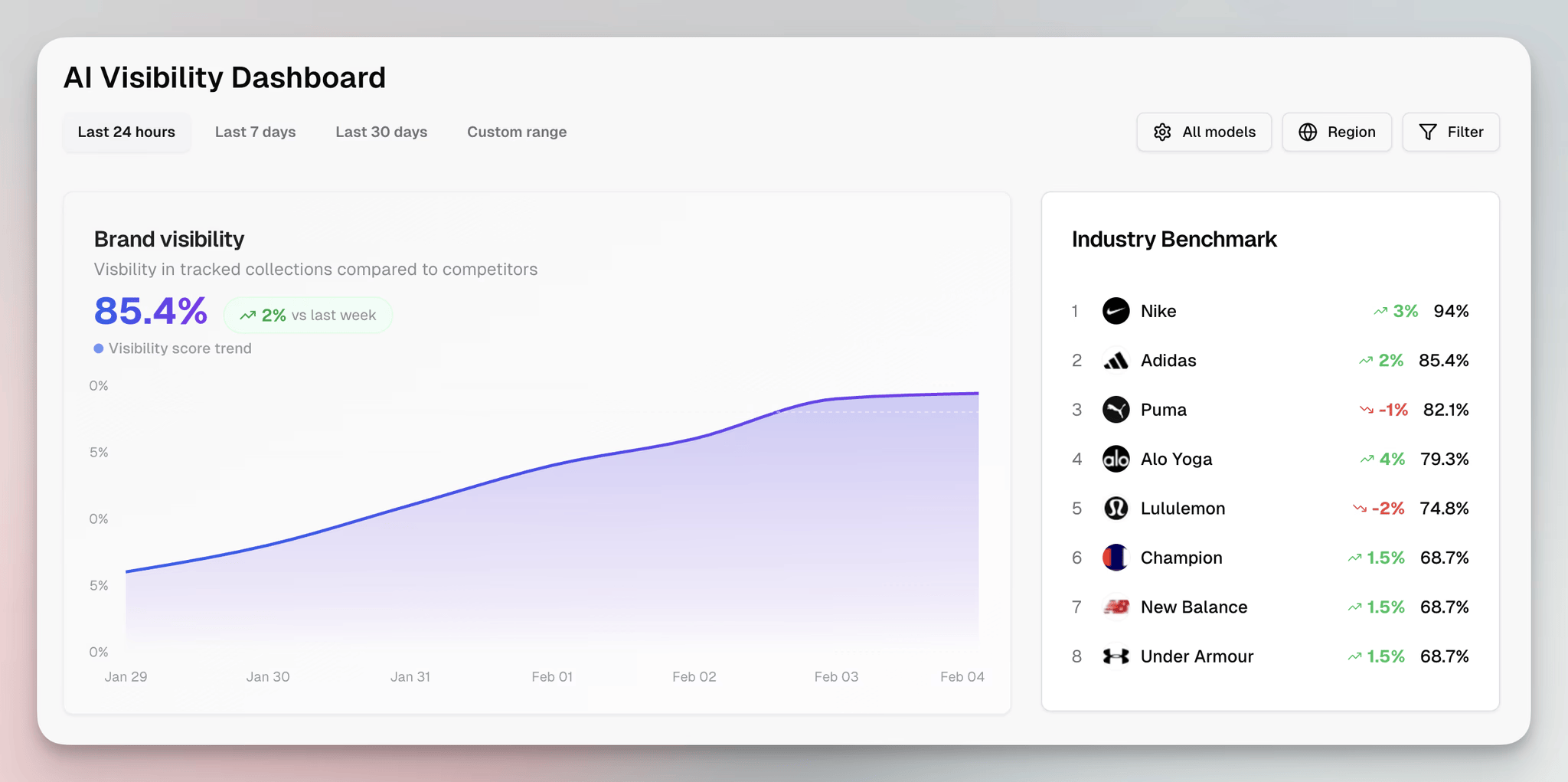

Erlin is built specifically for this. It tracks your brand's visibility across ChatGPT, Perplexity, Gemini, and Claude by running thousands of purchase-intent prompts and capturing exactly what each platform says.

You see your prompt coverage score, where competitors are being cited instead of you, and what specific content gaps are causing the misses. That combination of coverage, accuracy, and competitive context is what separates AI search monitoring from a manual spot-check.

Major AI Platforms to Track

Not all AI platforms behave the same way. Each has a different indexing approach, a different relationship with structured data, and a different user base. Monitoring one and ignoring the others gives you a partial picture.

ChatGPT and ChatGPT Search

ChatGPT is the highest-volume AI platform for consumer and B2B research queries. Its web-browsing mode fetches and parses live pages; its base model draws on training data.

Monitoring ChatGPT means tracking both whether your brand appears in generated answers and how it is described. ChatGPT is also where AI errors surface first: wrong pricing, outdated features, competitor misattributions.

Perplexity AI

Perplexity is the fastest-growing AI search engine for research-oriented queries. It crawls content continuously and cites sources directly in its answers, making it the most transparent platform for understanding why a brand is or is not being cited.

Perplexity is particularly active in SaaS and B2B purchase research. If buyers in your category are doing comparison research, a significant portion of it runs through Perplexity.

Google Gemini and AI Overviews

Google's AI Overviews appear at the top of search results pages for a growing share of queries.

For brands already invested in SEO, AI Overviews represent the highest-volume AI surface because they sit inside existing Google search behaviour.

Gemini's standalone product is a separate monitoring target. Both pull from Google's index but behave differently in terms of citation patterns.

Claude (Anthropic)

Claude is increasingly used for professional research and complex buyer queries. It draws heavily on third-party validation: Wikipedia, review platforms, and authoritative publications.

Brands without a Wikipedia presence or strong G2/Capterra profiles are underrepresented in Claude responses. Monitoring Claude specifically reveals gaps in third-party coverage that other platforms may not surface.

Microsoft Copilot

Copilot integrates into Microsoft 365 products and Bing search. For enterprise B2B brands, this is a significant surface. Copilot users are often mid-to-senior professionals doing vendor research inside the tools they already use.

Citation patterns in Copilot skew toward structured, authoritative content: product pages with schema, comparison tables, and verified review data.

Key Metrics in AI Visibility Monitoring

Tracking AI search visibility without a clear set of metrics produces noise. These are the core numbers that tell you whether your brand is winning or losing ground in AI search.

Prompt Coverage

The percentage of tracked prompts where your brand is cited in the AI response. This is your primary AI visibility metric. A brand with 50% prompt coverage appears in half of the relevant AI responses you are tracking.

The median across Erlin's 500+ brand dataset is 24% for e-commerce and 31% for SaaS. Top performers hit 67–78%. (Erlin data, 2026)
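
As a rough illustration of the calculation, the sketch below computes prompt coverage from a list of tracked results. The `PromptResult` record and its field names are hypothetical, chosen for this example; they are not Erlin's data model.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One tracked prompt on one platform (hypothetical record shape)."""
    prompt: str
    platform: str
    brand_cited: bool


def prompt_coverage(results: list[PromptResult]) -> float:
    """Return the percentage of tracked prompts where the brand is cited."""
    if not results:
        return 0.0
    cited = sum(1 for r in results if r.brand_cited)
    return 100.0 * cited / len(results)


results = [
    PromptResult("best crm for startups", "chatgpt", True),
    PromptResult("best crm for startups", "perplexity", False),
    PromptResult("crm with email automation", "chatgpt", True),
    PromptResult("crm with email automation", "perplexity", False),
]
print(f"{prompt_coverage(results):.0f}%")  # prints "50%"
```

Here the brand is cited in two of four tracked responses, which is the 50% case described above.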

Citation Rate vs Mention Rate

Being cited and being mentioned are not the same thing. A citation means the AI platform presents your brand as an answer or recommendation.

A mention means your brand appears in a response but is not being recommended; it might even appear in a negative context or a comparison that favours a competitor. Track both, and track the ratio between them.
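
One way to track both numbers and the ratio between them, assuming each response has already been labelled by a reviewer as `"cited"`, `"mentioned"`, or `"absent"` (these labels and the `citation_share` name are assumptions made for this sketch):

```python
from collections import Counter


def citation_and_mention_rates(labels: list[str]) -> dict[str, float]:
    """Summarise hand-labelled responses.

    Each label is "cited", "mentioned", or "absent" (hypothetical labels
    assigned by whoever reviews the responses).
    """
    counts = Counter(labels)
    total = len(labels)
    if total == 0:
        return {"citation_rate": 0.0, "mention_rate": 0.0, "citation_share": 0.0}
    appearances = counts["cited"] + counts["mentioned"]
    return {
        "citation_rate": counts["cited"] / total,
        "mention_rate": counts["mentioned"] / total,
        # Share of appearances that are recommendations rather than passing mentions.
        "citation_share": counts["cited"] / appearances if appearances else 0.0,
    }


labels = ["cited", "mentioned", "mentioned", "absent",
          "cited", "absent", "absent", "mentioned"]
rates = citation_and_mention_rates(labels)
print(rates)
```

A low `citation_share` alongside a healthy mention rate suggests the brand is visible but rarely recommended.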

Share of Voice

Among all the brands cited for a given set of prompts, what percentage of citations go to your brand versus competitors? Share of voice tells you how dominant you are within your category.

A brand that appears in 60% of prompts but always appears third behind two competitors has a share of voice problem, not a coverage problem.
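
The difference between coverage and share of voice can be sketched with a small calculation. The input format (one ordered list of cited brands per prompt) and the brand names are illustrative assumptions.

```python
from collections import Counter
from typing import Optional


def share_of_voice(citations_per_prompt: list[list[str]]) -> dict[str, float]:
    """Return each brand's share of all citations, as a percentage."""
    counts = Counter(b for cited in citations_per_prompt for b in cited)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: 100.0 * n / total for brand, n in counts.items()}


def average_position(citations_per_prompt: list[list[str]], brand: str) -> Optional[float]:
    """Average 1-based position of the brand in the responses where it appears."""
    positions = [cited.index(brand) + 1
                 for cited in citations_per_prompt if brand in cited]
    return sum(positions) / len(positions) if positions else None


# Brands in the order each response listed them (made-up data).
citations = [
    ["RivalA", "RivalB", "YourBrand"],
    ["RivalA", "RivalB"],
    ["RivalA", "RivalB", "YourBrand"],
    ["RivalB", "RivalA", "YourBrand"],
    ["RivalA"],
]
sov = share_of_voice(citations)
print(sov["YourBrand"], average_position(citations, "YourBrand"))
```

In this made-up data the brand appears in three of five prompts (60% coverage) but holds only 25% of citations and always sits in third position.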

Accuracy Rate

How often does AI describe your brand correctly? Factual errors in AI responses, such as wrong pricing, deprecated features, and incorrect positioning, erode buyer trust before you ever get a chance to correct them.

Unmonitored brands take an average of 67 days to discover AI errors. Monitored brands catch them in 14 days. (Erlin data, 2026)

AI-Driven Traffic and Conversion Rate

The downstream metric that connects AI visibility to revenue. AI referral traffic converts at 3x the rate of traditional organic search. (Erlin client data, 2026)

Tracking which traffic comes from AI platforms, via UTM parameters, GA4 referral source analysis, or a dedicated monitoring tool, shows whether your AI visibility is producing commercial outcomes.
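
For teams doing the referral analysis themselves, a minimal sketch of classifying sessions by referrer hostname might look like this. The hostname list is an assumption for illustration; check it against the referrers that actually appear in your own analytics before relying on it.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if not recognised."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for known_host, platform in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it (e.g. www.perplexity.ai).
        if host == known_host or host.endswith("." + known_host):
            return platform
    return None


referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/search?q=best+crm",
    "",
]
sessions_by_source = Counter(classify_referrer(r) or "non-AI" for r in referrers)
print(sessions_by_source)
```

Once sessions are split this way, conversion rate can be compared between the AI and non-AI groups.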

Coverage by Prompt Type

Not all prompts are equal. A brand might appear strongly for awareness-stage queries ("what is [category]") but be absent from purchase-stage queries ("best [category] tool for [use case]"). Breaking down coverage by prompt type reveals where in the buyer journey your AI visibility is strong or weak.
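
A breakdown like this can be sketched in a few lines, assuming each tracked prompt has been tagged with a stage label such as `"awareness"` or `"purchase"` (the labels and data below are illustrative):

```python
from collections import defaultdict


def coverage_by_type(rows: list[tuple[str, bool]]) -> dict[str, float]:
    """rows are (prompt_type, brand_cited) pairs; returns % coverage per type."""
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for prompt_type, brand_cited in rows:
        totals[prompt_type] += 1
        cited[prompt_type] += int(brand_cited)
    return {t: 100.0 * cited[t] / totals[t] for t in totals}


rows = [
    ("awareness", True), ("awareness", True), ("awareness", True), ("awareness", False),
    ("purchase", False), ("purchase", False), ("purchase", True), ("purchase", False),
]
breakdown = coverage_by_type(rows)
print(breakdown)
```

In this example the overall coverage of 50% hides a split of 75% on awareness prompts and 25% on purchase prompts.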

How to Monitor AI Search Visibility

Manual Monitoring Methods

Manual monitoring is the starting point for teams without a dedicated tool. It is time-consuming and does not scale, but it builds intuition for how AI platforms describe your brand and your category.

Run a prompt audit

Pick 20–30 prompts that represent how buyers search for your category. Include awareness queries ("what is [category]"), comparison queries ("best [category] for [use case]"), and purchase queries ("[specific product] vs [competitor]").

Run each prompt in ChatGPT, Perplexity, and Gemini. Record whether your brand appears, what position it appears in, and how it is described.
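
To keep the audit consistent across platforms, it can help to generate the recording sheet up front. The sketch below expands the three query patterns above into one row per prompt and platform; the fill-in values and column names are placeholders, not a prescribed format.

```python
import csv
import io
import itertools

PLATFORMS = ["ChatGPT", "Perplexity", "Gemini"]

# Templates follow the three query types above; fill-in values are placeholders.
TEMPLATES = {
    "awareness": "what is {category}",
    "comparison": "best {category} for {use_case}",
    "purchase": "{product} vs {competitor}",
}


def build_audit_rows(values: dict[str, str]) -> list[dict[str, str]]:
    """Return one blank audit row per (prompt, platform) combination."""
    rows = []
    for (stage, template), platform in itertools.product(TEMPLATES.items(), PLATFORMS):
        rows.append({
            "stage": stage,
            "prompt": template.format(**values),
            "platform": platform,
            "brand_appears": "",  # filled in by hand after running the prompt
            "position": "",
            "description": "",
        })
    return rows


def to_csv(rows: list[dict[str, str]]) -> str:
    """Serialise the audit rows as CSV text, ready to paste into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = build_audit_rows({
    "category": "project management software",
    "use_case": "remote teams",
    "product": "YourBrand",
    "competitor": "RivalA",
})
print(to_csv(rows))
```

A real audit would use 20–30 prompts rather than three templates, but the sheet structure stays the same.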

Document AI responses verbatim

Copy the AI response in full, including any sources cited. Highlight where your brand is mentioned versus cited. Note any factual errors. This creates a baseline you can return to in 30 and 60 days to measure change.

Track competitor citations

Run the same prompts and record which competitors appear when you do not. Competitor citation data tells you which brands own the prompts you are missing, and what content or attributes they have that you do not.

Set a manual review cadence

Monthly is the minimum for manual monitoring. High-traffic prompts churn at 23% month-over-month. (Erlin data, 2026) A prompt where you appear today may be lost to a competitor next month if you are not tracking.

Manual monitoring is useful for getting started and for deep-dive audits. For ongoing tracking across multiple platforms and hundreds of prompts, you need automated tooling.

Automated Tracking Tools

What to look for in an AI visibility tool

Not all tools are built for the same job. A tool that monitors brand mentions on social media is not the same as a tool that tracks AI citation rates across LLMs.

When evaluating AI visibility tools, prioritise: prompt coverage tracking across multiple platforms, citation versus mention differentiation, competitor benchmarking, accuracy monitoring for factual errors, and integration with your existing analytics stack.

Getting started with Erlin

Erlin is built specifically for AI search visibility monitoring. Here is how to go from zero to a working tracking setup.

Sign up and add your domain. During setup, select up to five competitors; these will be tracked alongside your brand across all prompts so you can see share of voice directly.

Erlin provides a library of high-intent prompt suggestions tailored to your category, so you are not starting from scratch building a prompt set.

Once your domain and competitors are configured, your snapshot is immediate. You see your AI Visibility Rank: your prompt coverage score across ChatGPT, Perplexity, Gemini, and Claude, your Traffic Rank, and a side-by-side competitor comparison showing where you appear together, where you appear alone, and where competitors are cited without you.

Connect GA4 and Google Search Console to unlock the traffic layer. After connecting, Erlin shows AI-driven traffic and non-AI traffic side by side, including the conversion rate difference between the two channels.

This is where the revenue case for AI visibility becomes concrete: you can see exactly how many sessions are coming from AI referrals and what those sessions convert at.

Under AI Visibility, then Prompts, you can view the exact AI-generated answers for each prompt Erlin tracks. This is where you check not just whether you appear, but whether you are being cited as a recommendation or mentioned in passing. You can read the full AI response, see the citation context, and flag factual errors directly from the dashboard.

Other tools to consider

Several platforms offer partial AI visibility functionality. Scrunch monitors brand mentions across AI platforms with a focus on sentiment. Peec.ai provides citation tracking with a particular focus on Perplexity and Google AI Overviews.

For teams that want to build their own tracking setup, running the Anthropic, OpenAI, and Gemini APIs against a custom prompt set and logging outputs to a spreadsheet is a functional, low-cost option. It requires engineering time but gives full control over prompt selection and response capture.

The limitation of do-it-yourself API tracking is that a single run captures only a point-in-time snapshot. AI platforms update their responses continuously. Consistent monitoring requires running prompts on a regular schedule and storing historical data, which is what purpose-built tools handle automatically.
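
For a self-built setup, the history-keeping part can be sketched as an append-only log. The example below deliberately leaves out the real API calls: `fake_ask` is a hypothetical stand-in that you would replace with your own client code for each platform, and the CSV column names are assumptions.

```python
import csv
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Callable


def log_run(prompts: list[str],
            platforms: dict[str, Callable[[str], str]],
            path: Path) -> int:
    """Append one timestamped row per (platform, prompt) to a CSV history file."""
    is_new = not path.exists()
    run_at = datetime.now(timezone.utc).isoformat()
    written = 0
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        if is_new:
            writer.writerow(["run_at", "platform", "prompt", "response"])
        for name, ask in platforms.items():
            for prompt in prompts:
                writer.writerow([run_at, name, prompt, ask(prompt)])
                written += 1
    return written


def fake_ask(prompt: str) -> str:
    """Stand-in for a real API call; replace with your own client code."""
    return f"(placeholder answer to: {prompt})"


PROMPTS = ["best crm for startups", "crm with email automation"]

with tempfile.TemporaryDirectory() as tmp:
    history = Path(tmp) / "history.csv"
    log_run(PROMPTS, {"stub": fake_ask}, history)
    log_run(PROMPTS, {"stub": fake_ask}, history)  # a later scheduled run appends
    line_count = len(history.read_text(encoding="utf-8").splitlines())

print(line_count)  # header plus two runs of two prompts: 5
```

Running `log_run` from a scheduler (cron, a CI job, or similar) against a permanent file gives the historical record that a one-off spreadsheet export lacks.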

How to Interpret Your AI Visibility Data

Data without interpretation produces a dashboard, not a strategy. Here is how to read your AI visibility metrics and connect them to action.

Low prompt coverage with high accuracy

Your brand appears in fewer prompts than it should, but when it does appear, the description is correct. This is a coverage problem, not an accuracy problem.

The fix is content: more structured content covering more prompts, better fact density on existing pages, and a stronger third-party presence on the platforms AI relies on, such as Reddit, G2, and Wikipedia.

High coverage with low accuracy

Your brand appears frequently, but AI is describing it incorrectly. This is an accuracy problem. It usually means your structured data is incomplete or contradictory, your Organization schema is missing key attributes, or third-party sources are propagating outdated information.

Fix the schema first, then address the source content on review platforms.
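
As a starting point for the schema check, here is a minimal sketch that builds Organization markup as JSON-LD and flags missing attributes. The property names (`name`, `url`, `logo`, `description`, `sameAs`) come from schema.org; the values are placeholders, and the `REQUIRED` checklist is an assumption for this example rather than a rule any AI platform publishes.

```python
import json

# Placeholder values; replace with your organisation's real details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "description": "Project management software for remote teams.",
    "sameAs": [
        "https://reviews.example.com/example-co",
        "https://social.example.com/example-co",
    ],
}

# Assumed in-house checklist of attributes to keep populated.
REQUIRED = ["name", "url", "description", "sameAs"]


def missing_attributes(schema: dict, required: list[str]) -> list[str]:
    """Return the required keys that are absent or empty in the schema."""
    return [key for key in required if not schema.get(key)]


json_ld = json.dumps(organization, indent=2)
print(missing_attributes(organization, REQUIRED))
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

The same check can be pointed at markup extracted from live pages to spot attributes that have gone missing or drifted out of sync with third-party profiles.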

Strong coverage on awareness prompts, weak on purchase prompts

AI platforms cite you when someone asks general category questions, but not when someone asks which specific tool to buy. This is a funnel-stage coverage gap. It means your brand has category presence but not purchase-intent presence.

The fix is content targeting purchase-stage queries: comparison tables, use-case-specific pages, and review-rich product pages.

Competitor dominating a specific prompt cluster

One competitor appears in every prompt related to a specific use case or buyer type, while you do not. This is a share-of-voice gap in a specific segment. Identify what content or attributes the competitor has that you lack.

In most cases, it is either a structured attribute you have not published (pricing, integration list, customer segment) or a third-party source that covers that use case explicitly (a case study, a Reddit thread, a category guide).

AI-driven traffic converting at a higher rate than organic

This is the signal that AI visibility investment is working. AI referral traffic arrives with higher purchase intent because the buyer has already received a recommendation from the AI platform before they click.

When this conversion rate difference is visible in your GA4 data, it is the business case for doubling down on prompt coverage.

Coverage loss month over month

If your prompt coverage drops between tracking periods, the most common causes are: a competitor publishing new content that displaces yours, an AI platform update that changes how it sources information for a prompt cluster, or your own content becoming stale.

Recovery time after losing a citation averages 45 days. (Erlin data, 2026) The faster you detect the loss, the faster you can address it.
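
Detecting a loss quickly comes down to comparing snapshots. A minimal sketch, assuming each snapshot is a mapping from prompt text to whether the brand was cited (the prompts below are made up):

```python
def coverage_changes(previous: dict[str, bool],
                     current: dict[str, bool]) -> tuple[list[str], list[str]]:
    """Compare two snapshots mapping prompt -> brand cited.

    Returns (lost, gained) for prompts present in both snapshots.
    """
    shared = previous.keys() & current.keys()
    lost = sorted(p for p in shared if previous[p] and not current[p])
    gained = sorted(p for p in shared if not previous[p] and current[p])
    return lost, gained


last_month = {
    "best crm for startups": True,
    "crm with email automation": True,
    "crm for nonprofits": False,
    "top crm for agencies": True,
}
this_month = {
    "best crm for startups": True,
    "crm with email automation": False,
    "crm for nonprofits": True,
    "top crm for agencies": False,
}

lost, gained = coverage_changes(last_month, this_month)
print("lost:", lost)
print("gained:", gained)
```

The `lost` list is the one to act on first: each entry is a prompt where a competitor, a platform update, or stale content may have displaced you.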

Frequently Asked Questions

What is AI search monitoring?

AI search monitoring is the practice of tracking how your brand appears in AI-generated responses across platforms like ChatGPT, Perplexity, Gemini, and Claude. It measures whether your brand is cited, how accurately it is described, how often it appears relative to competitors, and whether AI-driven traffic is converting.

How often should I monitor AI search visibility?

Monthly is the minimum for most brands. High-traffic prompts change at 23% month-over-month, meaning prompt coverage that looks strong today can erode quickly without regular tracking. (Erlin data, 2026) Brands in competitive categories or those running active content programs should track weekly.

What is a good AI visibility score?

The median prompt coverage across 500+ brands is 24% for e-commerce and 31% for SaaS. AI Preferred brands achieve 60–80% coverage. AI Dominant brands achieve 80%+. If your coverage is below 35%, your brand falls in the AI Fragile tier, appearing inconsistently and at risk of being displaced by competitors who are actively optimising. (Erlin data, 2026)

Can I monitor AI search visibility manually?

Yes, but it does not scale. Manual monitoring, like running prompts in ChatGPT, Perplexity, and Gemini and recording the outputs, gives you a useful baseline. For ongoing tracking across hundreds of prompts and multiple competitors, automated tooling is required. Manual checks take hours; Erlin's dashboard updates automatically.

What is the difference between an AI citation and an AI mention?

A citation means the AI platform recommends or presents your brand as an answer. A mention means your brand appears in the response but is not being recommended; it may appear in a comparison, a disclaimer, or a list where a competitor is the primary recommendation. Track both metrics separately. Citation rate is the one that drives traffic.

How does AI search monitoring connect to revenue?

AI referral traffic converts at 3x the rate of traditional organic search. (Erlin client data, 2026) Connecting your AI visibility tool to GA4 shows you the conversion rate difference directly. Brands that improve prompt coverage from the AI Fragile to the AI Present tier see measurable citation improvements within 30–45 days, and the revenue impact follows the traffic.

Build the System Before You Need It

The brands that win AI search in 2026 are not the ones who start monitoring after they lose ground. They are the ones who know their prompt coverage score today, track the prompts that matter to buyers, and fix gaps before competitors lock them in.

Start with a baseline. Run Erlin's free AI visibility audit to see your current coverage across ChatGPT, Perplexity, Gemini, and Claude, your AI Visibility Rank, your competitor comparison, and the specific gaps reducing your citations.

